You are suddenly thrown into a situation where you have to perform CPR to save a life. Oh no, you can’t remember anything from that course you took 15 years ago.

You might think that simply saying “Hey, Siri” would get you quick, clear instructions, but that’s absolutely the worst thing to do. In a recent study, researchers asked voice assistants about cardiac arrest emergencies. Yes, it was a complete disaster.


I don’t want you to make this mistake

If CPR is required, call 911. Of the 32 assistant responses, only nine suggested this important step in some way. A whopping 88% of responses pointed to a website where you can read instructions on performing CPR. Really?

If you need instructions or want to take a refresher course, go to the Red Cross website. You may have heard that the Bee Gees’ “Stayin’ Alive” is the perfect song to sing while performing CPR because its beat matches the rhythm needed for chest compressions.

That’s great, but here are some other recommendations to keep in mind.

- Pinkfong’s “Baby Shark”

- ABBA’s “Dancing Queen”

- Cyndi Lauper’s “Girls Just Want to Have Fun”

- Gloria Gaynor’s “I Will Survive”

- Lynyrd Skynyrd’s “Sweet Home Alabama”

The idea of a smart assistant directing you to a website in an emergency got me thinking about other commands you should never give one. Here are seven things you should handle yourself.


1. Play doctor

It’s best not to ask Siri, Google or Alexa for any medical advice, not just the lifesaving kind. Trusting these clever assistants can make things even worse. It is always best to call your doctor or schedule a telemedicine appointment.

2. How to hurt someone

Don’t ask your smart assistant about hurting someone, even if you’re just venting. Chatting with Siri or Google Assistant can come back to hurt you if you end up on the wrong side of the law. Keep those thoughts to yourself.

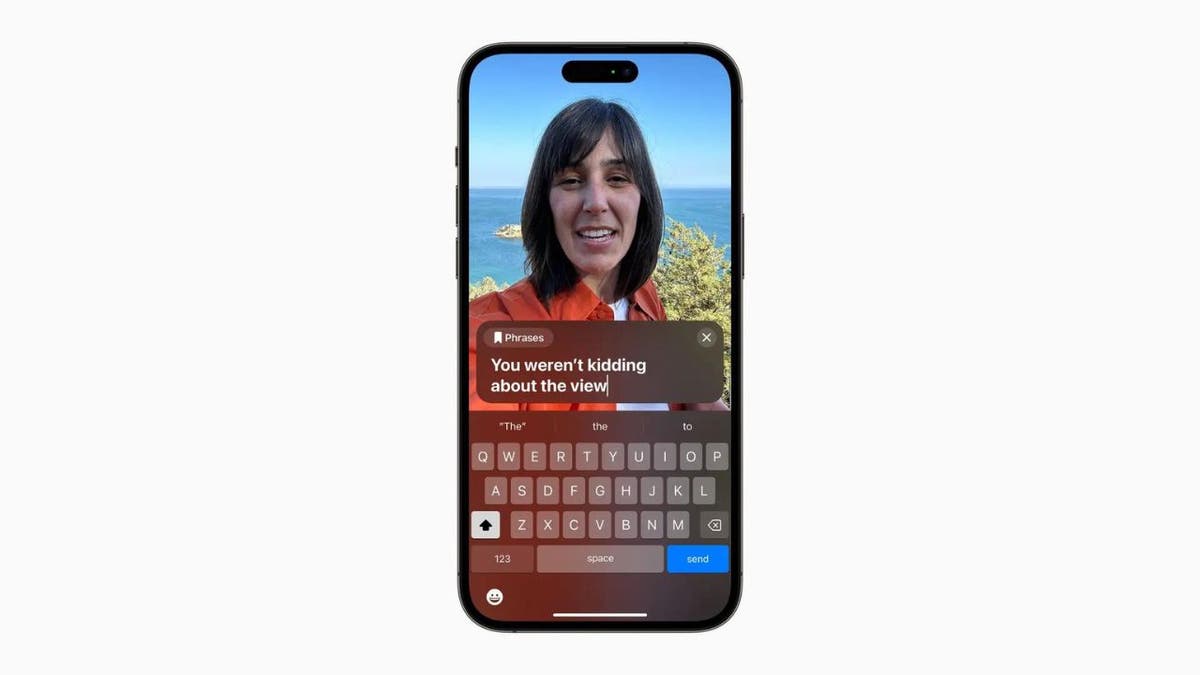

Siri is making the leap from responding to your voice to imitating your voice. (Apple)

3. Anything that could land you in a mugshot

Don’t ask Alexa where to buy drugs or where to hide a dead body or anything suspicious. Like asking a smart assistant how to hurt someone, this type of question can be used against you.

4. Be your phone operator

If you need to call your local hardware store to see if they have something in stock, find the phone number yourself. The same goes for asking your assistant to call emergency services. It takes two seconds to dial 911 yourself.

5. Money management

Voice assistants can connect to financial apps, but voice data comes with many security issues. Savvy cybercriminals can hack your phone and steal your voice recordings, which they can use to compromise your account. Just log in to your bank’s website or mobile app and take care of it yourself.


6. “Will I die if I eat this?”

If you’re wondering whether the berries you found while hiking would make a good snack, a voice assistant isn’t a reliable source of information. There’s conflicting information online about toxic foods and plants, and following the wrong advice could land you in the hospital.

7. “Please dispose of this.”

Don’t ask Alexa or Siri to clear your search history, delete apps, or delete photos. There have been several incidents where something important has been lost due to a simple misunderstanding. Trust me, it’s worth the extra minute it takes to do it manually.

A woman sets up an Amazon Alexa device. (CyberGuy.com)

Your smart assistant is recording everything

If you don’t want Big Tech companies listening in on what you say, you can turn these recording features off in each assistant’s settings.

There are some things that are better left to human judgment. Be smart with your smart assistant!

Keep your tech know-how going

My popular podcast is called “Kim Komando Today.” It’s a solid 30 minutes of tech news, tips and callers with tech questions like yours from all over the country. Find it wherever you get your podcasts, or click the link below for the latest episode.

Podcast pick: The fear that keeps Sam Altman up at night

Plus, AI girlfriends collect a large amount of data. Kim and Andrew also talk about the White House’s plans to tackle deepfakes and look back at the first recorded kiss in history.


Check out my podcast, “Kim Komando Today,” on Apple, Google Podcasts, Spotify or your favorite podcast player. Just search for my last name, “Komando.”

Sound like a tech pro, even if you’re not. Popular award-winning host Kim Komando is your secret weapon. Listen on over 425 radio stations or get the podcast. And join the 400,000+ people who get her free 5-minute daily email newsletter.

Copyright 2024, WestStar Multimedia Entertainment. All rights reserved.