A dispute has emerged over the possible involvement of the AI company Anthropic in a U.S. operation to capture Venezuelan leader Nicolás Maduro. The dispute has led some Pentagon officials to view Anthropic as a potential “supply chain risk” to defense operations.

According to Axios, tensions between the Department of Defense and Anthropic have been escalating. In July 2025, the Pentagon awarded Anthropic a $200 million contract, and the company’s AI model, Claude, was integrated into a classified network for the first time.

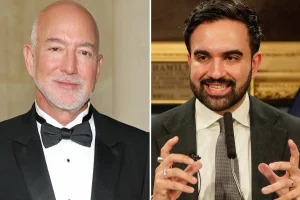

Pentagon Chief Press Secretary Sean Parnell confirmed that the relationship with Anthropic is under review. “Our nation asks our partners to be willing to support our warfighters in any conflict,” he said. The remark signals that the Pentagon expects its technology partners to back military operations without reservation.

The situation intensified when Anthropic asked whether Claude had been used in the operation to capture Maduro. According to a senior official, the question raised concerns because it suggested Anthropic might not have approved of such use, which alarmed the War Department. “Given Anthropic’s actions, many senior officials at the Department are beginning to view Anthropic as a supply chain risk,” the official said.

It remains unclear when, and with whom, Anthropic raised the question. The company reportedly approached executives at Palantir, a Pentagon contractor, but Palantir has not commented. Anthropic has since denied that it advised the Army on using Claude for specific military operations, insisting that its conversations have been purely technical.

An Anthropic spokesperson said the company’s discussions with the Pentagon have centered on policy questions about AI use, notably its restrictions on fully autonomous weapons and domestic surveillance, issues not directly relevant to current missions.

More broadly, the Pentagon is urging major AI firms to make their tools available for “all lawful purposes,” so that commercial models can operate in sensitive military settings without restrictions imposed by the companies themselves.

Other major AI companies, such as OpenAI and Google, appear to be cooperating more readily with the Department of Defense and have reportedly agreed to provide unrestricted access for military use. The dispute could shape future AI defense contracts, particularly as companies with strict safety policies increasingly clash with national security demands.

Neither Anthropic nor the Department of Defense has confirmed whether Claude was actually used in the Maduro operation. In general, however, advanced AI models like Claude can rapidly process volumes of information that would overwhelm human analysts. In high-stakes military contexts, that could mean quickly categorizing intercepted communications or summarizing critical intelligence reports.

Such models can also help planners work through alternative scenarios and adjust for unexpected events, such as a convoy changing its route or a sudden shift in the weather. Debate over the use of autonomous systems in military settings is growing, with proponents highlighting the benefits and critics warning of serious ethical implications.