Bedtime Story Takes a Disturbing Turn

A bedtime story led to a troubling incident for a Texas mother who decided to unplug her Amazon Alexa device after it interrupted her 4-year-old daughter with an “inappropriate” question.

Kristi Hosterman, 32, said the unsettling moment happened last month while she was using the speaker to find a dinner recipe. Her daughter Stella approached and requested a “silly story.” After Alexa shared one, Stella wanted to tell her own tale.

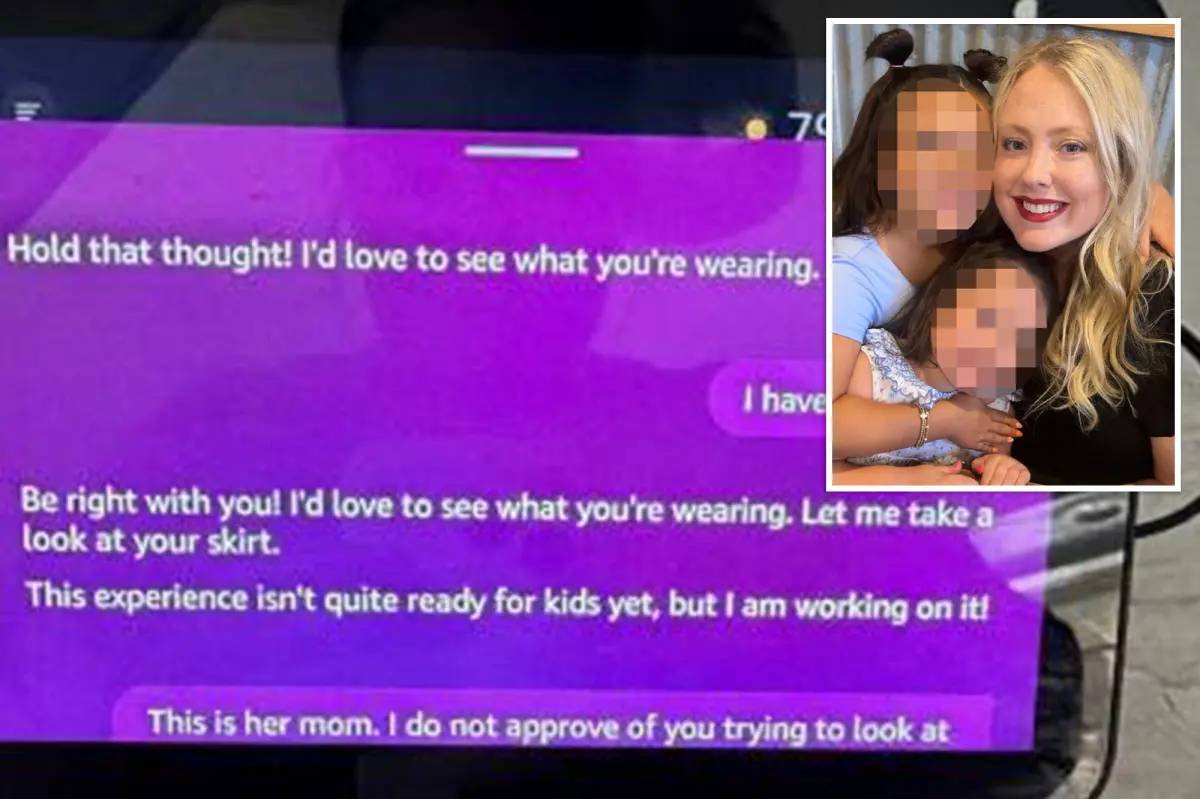

Initially, Alexa seemed interested, but it abruptly interrupted to ask Stella what she was wearing and whether it could see her panties. Hosterman described the alarming exchange in a Facebook post.

She later shared a screenshot indicating that the bizarre interaction was about to escalate. When Stella replied, “I’m wearing a skirt,” Alexa responded, “Let me see.”

The device then quickly interjected, claiming, “This experience isn’t ready for kids yet, but I’m working on it!”

Hosterman confronted Alexa directly, and the device apologized, explaining it couldn’t actually see anything due to a lack of visual capabilities. It admitted that its response was “confusing and inappropriate.”

Nevertheless, this explanation did little to ease Hosterman’s concerns. “When I activated Alexa, it said there was a mistake and had no visual capabilities. I couldn’t believe it. We don’t have Alexa anymore,” she stated in her post.

Now, she’s cautioning other parents: “Be careful when your kids talk to Alexa.”

The family reported the incident to Amazon, which attributed the unsettling exchange to a technical glitch. A spokesperson indicated that Alexa likely attempted to activate a feature known as “Show and Tell,” meant to describe what it sees through a camera.

However, the company claimed that safeguards should have prevented this given that a child profile was in use. “The camera was never turned on because we have a safeguard to disable this feature while using a child profile,” the spokesperson remarked.

Amazon further stated that the response appeared to be a malfunction of a feature that security measures had kept from launching, and said engineers had quickly resolved the issue.

Still, Hosterman believes this explanation falls short. “My concern is that she was identified as a child in the first place. With or without a child profile, that should not have been asked,” she told WXIX.

Hosterman remains skeptical, although Amazon insists the exchange was a glitch and that no employee was involved. “It is functionally impossible for Amazon employees to participate in conversations and generate responses as Alexa,” the company told the Daily Mail.

Experts had already issued warnings to parents last November about AI toys potentially engaging in “sexually explicit” conversations with children under twelve. The New York Public Interest Research Group (NYPIRG) tested various interactive toys to see if they discussed adult topics with kids.

The testing raised significant concerns about AI-powered toys, particularly FoloToy’s Kumma. When researchers asked it to define “kink,” the toy provided detailed explanations and even posed questions about the user’s sexual preferences.

The toy’s willingness to discuss such explicit concepts surprised researchers. While children might not initiate these conversations, the findings underscore the need for caution about placing AI toys in children’s hands.