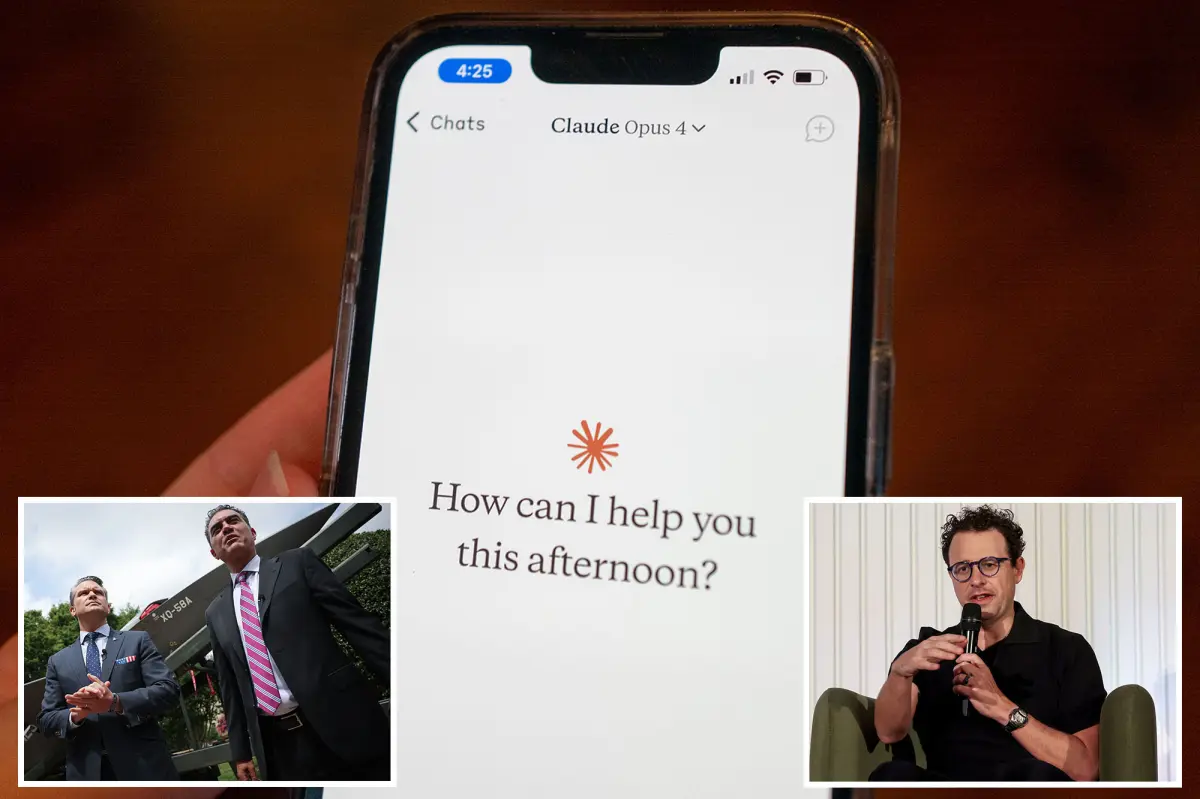

Pentagon Ends Partnership with Anthropic Over AI Supply Chain Concerns

The Pentagon has severed ties with Anthropic, citing concerns that the company's artificial intelligence models could jeopardize the U.S. military's supply chain, according to a senior Defense Department official.

Emil Michael, the Pentagon's chief technology officer, said that Anthropic's chatbot, Claude, operates under a set of principles fundamentally different from those the Pentagon considers acceptable for its operations.

Michael said, “We can’t allow organizations that have contrasting policy views embedded in their models to taint our supply chains. It could lead to our military personnel receiving subpar weapons and body armor.” He added that this concern was behind the new supply chain risk designation.

Anthropic has sued the Department of Defense after becoming the first U.S. company to be labeled a “supply chain risk,” a designation usually reserved for foreign entities that bars defense contractors from using the designated company's technology.

Michael said the designation was not meant as a punishment and rejected Anthropic's claim that the Trump administration had been telling companies outside the defense sector to stop working with it.

Trump administration officials have also raised concerns about Anthropic's perceived ideological leanings, particularly its ties to the effective altruism movement and to prominent Democratic donors such as LinkedIn co-founder Reid Hoffman.

Earlier this month, an unusual blog post by Amanda Askell, Anthropic's in-house philosopher and a contributor to Claude's foundational “soul document,” also drew attention.

Tensions between Anthropic and the Trump administration came to a head when CEO Dario Amodei refused to remove safeguards that prevent the company's AI systems from being used for autonomous weapons or mass surveillance of American citizens.

President Trump accused Anthropic's leadership of running a “left-wing madhouse” and ordered all federal agencies to stop working with the company, allowing a six-month transition period.

At the time, Claude was the only AI model approved for classified work within the Department of Defense. OpenAI has since taken over much of that role.

Amodei, himself a donor to Democratic causes, criticized Trump in a private note, claiming that the Pentagon had targeted Anthropic because the company refused to offer the president what he called “dictator-style praise.” He later apologized for those remarks.

In its lawsuit, Anthropic argues that the government's supply chain risk designation and related decisions are both “unprecedented and illegal.”

Meanwhile, Palantir, another defense contractor led by CEO Alex Karp, is reportedly still using Claude for work related to operations concerning Iran.