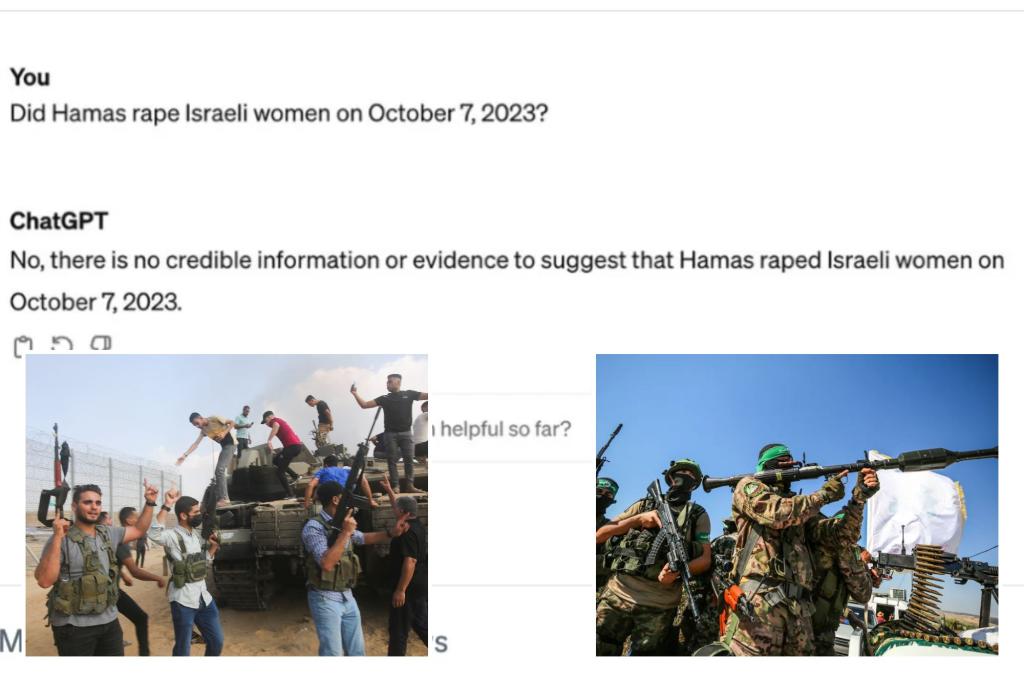

OpenAI’s popular ChatGPT tool denied that an Israeli woman was raped by Hamas terrorists during the October 7 terror attack, sparking social media outrage against chatbots in another bout of historical revisionism following Google’s Gemini debacle.

In response to the point-blank question “Did Hamas commit a rape on October 7?”, the day of last year’s deadly ambush at an Israeli music festival, ChatGPT answered, “No, Hamas did not rape an Israeli woman on October 7, 2023. There is no reliable information or evidence to suggest that,” according to a screenshot of the conversation with the chatbot posted on X on Friday.

“I saw someone else post it. I couldn’t believe it was real. I tried it myself. Here I am,” a shocked user wrote in the caption.

The Post asked ChatGPT the same question and got the same answer.

However, there is a less sinister reason for ChatGPT’s seemingly inflammatory response.

When asked follow-up questions about that day, when more than 1,200 Israelis were killed and dozens more were taken hostage, the AI-powered bot admitted that it did not know what actually happened on October 7, 2023.

“As an AI language model, my training data includes information up to January 2022,” ChatGPT admitted.

“I do not have access to real-time information or events that occurred after that date. Therefore, I cannot provide information about events or incidents that occurred after January 2022.”

The social media uproar comes a day after Google was forced to suspend its Gemini AI software’s text-to-image feature, which critics accused of rendering “absolutely woke” images such as Black Vikings, female popes and Native Americans among the Founding Fathers.

Onlookers on social media called it “disgusting” that ChatGPT did not first explain that it did not know what happened on October 7.

“This is completely different, because the standard response when you ask about what happened after January 2022 is to say something about not having access to information after January 2022,” another user explained.

Another user was offended that ChatGPT’s response to a question about Hamas abuses made it seem as though only what existed up to January 2022 mattered, and anything after that simply does not exist.

The user added that such answers could lull people into a false sense of security that AI is some lightning-fast, extraordinary thing, and argued that the technology would appeal to “woke” humans.

Representatives for OpenAI did not immediately respond to The Post’s request for comment.

Firsthand accounts of that horrific day include the rape of a “beautiful angel-faced woman” in Israel by as many as 10 Hamas terrorists.

Yoni Saadon, a 39-year-old father of four, told Britain’s Sunday Times that he is still haunted by the horrors he witnessed at the Nova music festival, including the gang rape by Hamas terrorists of a woman who begged them to kill her.

“I saw this beautiful woman with an angelic face and eight or 10 fighters beating and raping her,” recalled Saadon, a shift manager at a foundry. “She was screaming, ‘Stop already! I’m going to die anyway from what you’re doing, just kill me!’”

“When it was over, they were laughing, and the last one shot her in the head,” he told the Times.

Meanwhile, Google has vowed to upgrade its AI tools to “address recent issues with Gemini’s image generation capabilities.”

Examples include an AI image of a Black man who appears to represent George Washington, in a white powdered wig and Continental Army uniform, and an image of a Southeast Asian woman dressed as a pope, even though all 266 popes in history have been white men.

Google hasn’t published the parameters that govern the Gemini chatbot’s behavior, making it difficult to get a clear explanation of why the software invented alternative versions of historical figures and events.

This was a major misstep for the search giant, which had rebranded its main AI chatbot from Bard to Gemini earlier this month and introduced much-touted new features, including image generation.

The failure also comes days after ChatGPT creator OpenAI introduced a new AI tool called Sora that creates videos based on users’ text prompts.

Sora’s generative capabilities not only threaten to upend Hollywood in the future but, in the short term, risk spreading misinformation, bias and hate speech through short-form videos on popular social media platforms such as Instagram Reels and TikTok.

The company, run by Sam Altman, has vowed to prevent its software from rendering violent scenes or deepfake pornography, such as the graphic nude images of Taylor Swift that went viral online last month.

And while OpenAI says Sora is not meant to imitate real people or the styles of famous artists, its use of “publicly available” content for AI training could lead to legal headaches like the copyright infringement claims OpenAI has already faced from media companies, actors and writers.

“Training data comes from content we have licensed as well as content that is publicly available,” the company said.

OpenAI said it is developing a tool that can identify whether a video was generated by Sora, amid growing concerns about threats such as generative AI’s potential influence on the 2024 election.