Geoffrey Hinton caught my attention when he told the BBC that artificial intelligence is an “extinction-level threat” to humanity. Hinton is not one to sound the alarm lightly. He is called the “Godfather of AI” because he helped develop the neural network technology that makes modern artificial intelligence possible. Few people have more authority to speak on this topic, and when he does, the world takes notice.

In May 2023, Hinton ended his 10-year career at Google and took the opportunity to speak frankly about the existential danger that AI poses to us “lesser” carbon intelligences. ChatGPT had debuted in November 2022, just six months earlier, and had already stirred a worldwide reaction of equal parts curiosity and anxiety at what felt like a first encounter with an elusive technology that is now being welcomed into our lives, whether we’re ready for it or not.

AI evokes Orwellian predictions of a digital dystopia in which a small number of oligarchs or AI overlords plunge the masses into totalitarian enslavement. Many call for regulation of AI development to mitigate this risk, but how effective will it be?

Ironically, artificial intelligence was not elusive at all before November 2022. It was ingrained in our lives long before ChatGPT became trendy. People were already using AI unconsciously every time they unlocked their smartphones with facial recognition, edited their papers with Grammarly, or chatted with digital assistants like Siri or Alexa. Apple Maps and Google Maps are constantly learning about your daily life through AI, predicting your movements to improve your daily commute. Every time someone clicks on a webpage with an ad, the AI learns more about that person’s behavior and preferences, and that information is sold to third-party ad agencies. We’ve been interacting with AI for years, but we didn’t care about it until now.

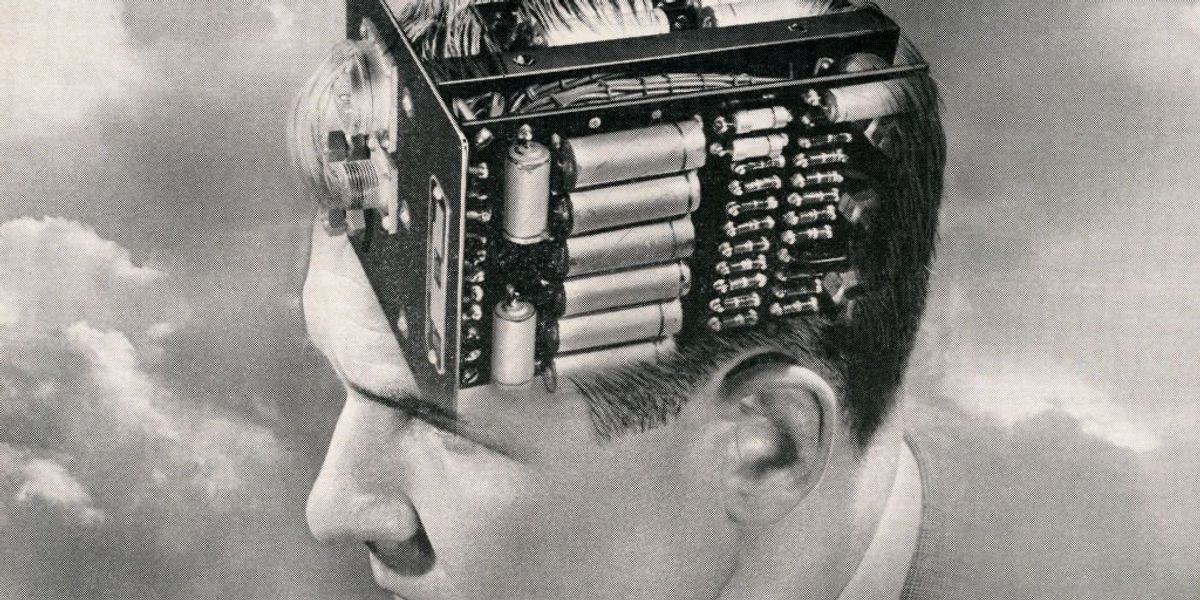

The debut of ChatGPT sparked sudden global concern about AI. What is so distinctive about this chatbot, compared with the other forms of AI we’ve been exposed to over the years, that it has prompted this newfound fascination and unease? Perhaps ChatGPT reveals something that has been lurking in our everyday interactions with AI: its potential, or, as many would argue, its inevitable destiny, to exceed human intelligence.

Before ChatGPT, our interactions with artificial intelligence were limited to “narrow AI,” or artificial narrow intelligence (ANI): programs that are limited to one specific purpose. Facial recognition has no purpose or function beyond that single task. The same is true for Apple Maps, Google’s search algorithm, and other everyday forms of artificial intelligence.

ChatGPT is the world’s first introduction to the prospect of artificial general intelligence (AGI), an AI capable of having its own thoughts. The goal of AGI is to create machines that can reason and think with human-like capabilities, and then exceed those capabilities.

While chatbots like ChatGPT technically fall under the umbrella of ANI, ChatGPT’s thoughtful, human-like responses, delivered with superhuman speed and accuracy, lay the foundation for the emergence of AGI.

Reputable scientists with diverse personal and political views are divided over the limits of AGI.

For example, pioneering web developer Marc Andreessen says AI cannot exceed its programmed goals:

[AI is] mathematics, code, and computers, made by humans, owned by humans, and controlled by humans, and the idea that it will one day develop a mind of its own and decide it has a motive to kill us is a superstitious excuse.

Conversely, Lord Rees, the former Astronomer Royal and former President of the Royal Society, predicts that humanity is only a point in evolutionary history, and that the arrival of AGI will usher in a posthuman era that will dominate that history:

Abstract thinking by biological brains has been the basis for the emergence of all culture and science. But this activity spans at most tens of thousands of years and is only a faint precursor to the more powerful intelligences of the inorganic posthuman era. Thus, in the distant future, it will be machine minds, not human minds, that will most fully understand the universe.

Elon Musk and a group of world-class AI experts published an open letter calling for an immediate pause in AI development, anticipating Lord Rees’ prediction rather than Andreessen’s. It didn’t take long for Musk to ignore his own call to action: he unveiled X’s new chatbot, Grok, with functionality similar to ChatGPT, Google’s Gemini, and Microsoft’s new AI chatbot integrated into its Bing search engine.

Ray Kurzweil, the transhumanist futurist and a director of engineering at Google, famously predicted in 2005 that by 2045, AI technology would surpass human intelligence, reaching a singularity where we would have to choose between merging with AI or being naturally selected out of our evolutionary trajectory.

Was he right?

The proof of these various predictions will be in the pudding being made in our current cultural moment. But ChatGPT has brought timeless ethical questions to the forefront of broader debate in new ways: what it means to be human and, as Glenn Beck poignantly asked in an editorial, “Will AI rebel against its creator, just as we have rebelled against ours?” The fact that such questions are being asked in public indicates that we are in a new technological era, one in which we are confronted with deep philosophical questions that will shape not only how we understand the nature of AI, but also how we confront the nature of ourselves.

Live Without Fear

So how do we mitigate the risk that our worst fears about AI will come true? Will we, the current masters of AI, inevitably become its slaves?

The latter fear often evokes Orwellian predictions of a digital dystopia in which a few oligarchs or AI overlords plunge the masses into totalitarian enslavement. Many call for regulation of AI development to mitigate this risk, but how effective would it be? When regulation is directed at private companies, governments stand to seize all of AI’s power. When it is directed at governments, tech titans could become oligarchs just as easily as their rivals in government. In both scenarios, the people at risk of AI enslavement have little power to control their own destiny.

But we are already stepping into Huxleyan slavery, trading seemingly menial yet deeply human actions for the convenience of technology served on a digital platter. Orwellian AI domination won’t happen overnight. It starts with surrendering the creative act of writing to papers “written” instantly by AI chatbots. It progresses when you give up the difficulty of building meaningful human relationships for an AI “partner” who is always there for you, never challenges you, and always affirms you. If we have already willingly surrendered our freedom to AI, then an Orwellian future is not so far-fetched.

To avoid this Huxleyan enslavement to the convenience of AI, we need to fall deeply in love with being human. We may not be responsible for regulating the public and private sectors’ roles in the development of AI, but we are responsible for determining its role in our daily lives. This is the most powerful means of restraining AI: choosing to labor creatively, enduring the inconvenience and difficulty of building relationships, and desiring the things we must earn through effort rather than those that are immediately available. That is, we must strive to be human and enjoy the fulfillment that comes from this labor. Convenience is the gateway to voluntary enslavement. Our humanity is the price of such a trade, and also its antidote.