Ursula K. Le Guin once wrote, “There is no right answer to a wrong question.” An AI may struggle with that quip, but human readers will grasp the point quickly: to address a problem, one must concentrate on what really matters about it.

This is a key point in the ongoing discourse that Professor Nicholas Creel has recently engaged in regarding whether AI models “learn” like humans do.

The straightforward answer, laid out in Erik J. Larson’s book “The Myth of Artificial Intelligence,” is that they don’t. They are unable to handle or create novelty. When faced with uncertainty or incomplete data, their abilities are limited, and they lack any genuine capacity for empathy or comprehension. They can replicate and exploit vast amounts of data, but unlike humans, they cannot intuitively infer new truths merely by aggregating information.

I posed the question to Microsoft’s AI, Copilot: “Does AI reason and infer the same way humans do?” This was the reply I got:

“AI utilizes extensive datasets and algorithms for decision-making and predictions. It analyzes information based on patterns and statistical methods. AI formulates conclusions based on established rules and frameworks. There is neither intuition nor emotion influencing that decision.”

As Moiya McTier of the Human Artistry Campaign has explained, genuine creativity goes beyond merely identifying patterns and connections within extensive datasets. It is “a manifestation of lived experiences and evolves organically from the interplay of culture, geography, family, and personal identity.”

Thus, it is evident that AI acquires knowledge and produces outputs in a fundamentally distinct manner from humans.

Nonetheless, it is also clear that this barren philosophical debate matters less to those of us who encounter AI in everyday life, including musicians like myself whose work has been scraped from the web and used in AI models without permission. To borrow from Le Guin, the right question is what AI actually does and whether the benefits justify the costs.

It is undeniable that, to build models and run pattern-recognition algorithms, AI companies must reproduce copyrighted material or generate new works derived from it. They also distribute these works widely. Reproduction, the creation of derivative works, and distribution are three exclusive rights held by the creator under federal law.

Typically, firms wishing to engage in these activities would simply license the works from their authors. However, competing AI companies have largely opted to proceed without licenses, effectively setting the market price for these copyrights at zero.

This is a disaster that devalues the world’s creative legacy and the efforts of real creators, at a significant cost in lost opportunities. It also fosters a derivative culture that, if left unchecked, will leave a glaring void where the authentic originality of future generations should emerge. AI models can excel at generating variations on works that have been copied and deconstructed (drawing on sufficiently diverse sources to evade obvious plagiarism), but they cannot break new ground to offer something genuinely innovative.

Regrettably, AI advocates appear determined to portray commercial AI as charming little robots that learn just like humans. They have repurposed language, recasting art as “data” and mass copying as “training,” almost as if AIs were pets. This narrative is a fairy tale meant to obscure the reality that trillion-dollar corporations, backed by heavily invested technology financiers, are engaging in or enabling large-scale copyright violations.

Perhaps the deception will fool an AI, but we humans see right through it.

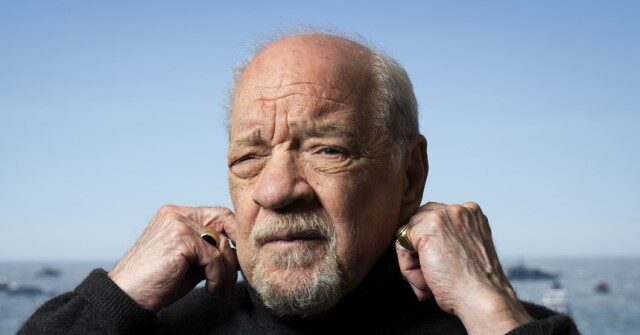

David Lowery is a mathematician, writer, musician, producer, singer-songwriter, and member of the band Camper Van Beethoven.