- A new study published by research group Epoch AI predicts that tech companies will run out of public training data for AI language models between 2026 and 2032.

- When public data eventually runs out, developers will have to decide what to feed into language models. Ideas include using data currently considered private, such as emails and text messages, or even “synthetic data” created by other AI models.

- Another path beyond training ever-larger models is to build more skilled AI systems by training models that are specialized for specific tasks.

Artificial intelligence systems like ChatGPT could soon run out of what makes them smart: the tens of trillions of words that people have written and shared online.

A new study published Thursday by research group Epoch AI predicts that tech companies will run out of the supply of public training data for AI language models around the turn of the decade, meaning sometime between 2026 and 2032.

Study author Tamei Beciloglu likened this to a “literal gold rush” that depletes finite natural resources, saying the field may struggle to maintain its current pace of progress once the reservoir of human-created text is depleted.


In the short term, tech companies like ChatGPT developer OpenAI and Google are racing to secure, and in some cases pay for, high-quality data sources to train their AI large language models, for example by striking deals to tap into the steady stream of text coming out of Reddit forums and news outlets.

In the long term, there will not be enough new blogs, news articles, and social media comments to sustain the current trajectory of AI development, putting pressure on companies to tap sensitive data currently considered private, such as emails and text messages, or to rely on less reliable “synthetic data” spit out by the chatbots themselves.

“There’s a real bottleneck here,” Beciloglu says. “Once you start running into constraints on how much data you have, you can’t scale the model efficiently. And model scaling was probably the most important way to extend the capabilities of the model and improve the quality of the output.”


The researchers first made their projections two years ago, shortly before ChatGPT’s debut, in a working paper that forecast high-quality text data could run out as soon as 2026. A lot has changed since then, including new techniques that allow AI researchers to make better use of existing data and to “overtrain” on the same sources multiple times.

But there are limits, and after further research, Epoch now predicts that public text data will be exhausted within the next two to eight years.

The team’s latest research has been peer-reviewed and will be presented this summer at the International Conference on Machine Learning in Vienna, Austria. Epoch is a nonprofit research lab run by San Francisco-based Rethink Priorities, which is funded by advocates of Effective Altruism, a philanthropic effort that has dedicated money to mitigating the worst risks of AI.

Beciloglu said AI researchers realized more than a decade ago that they could significantly improve the performance of AI systems by aggressively scaling up two key ingredients: computing power and vast amounts of internet data.

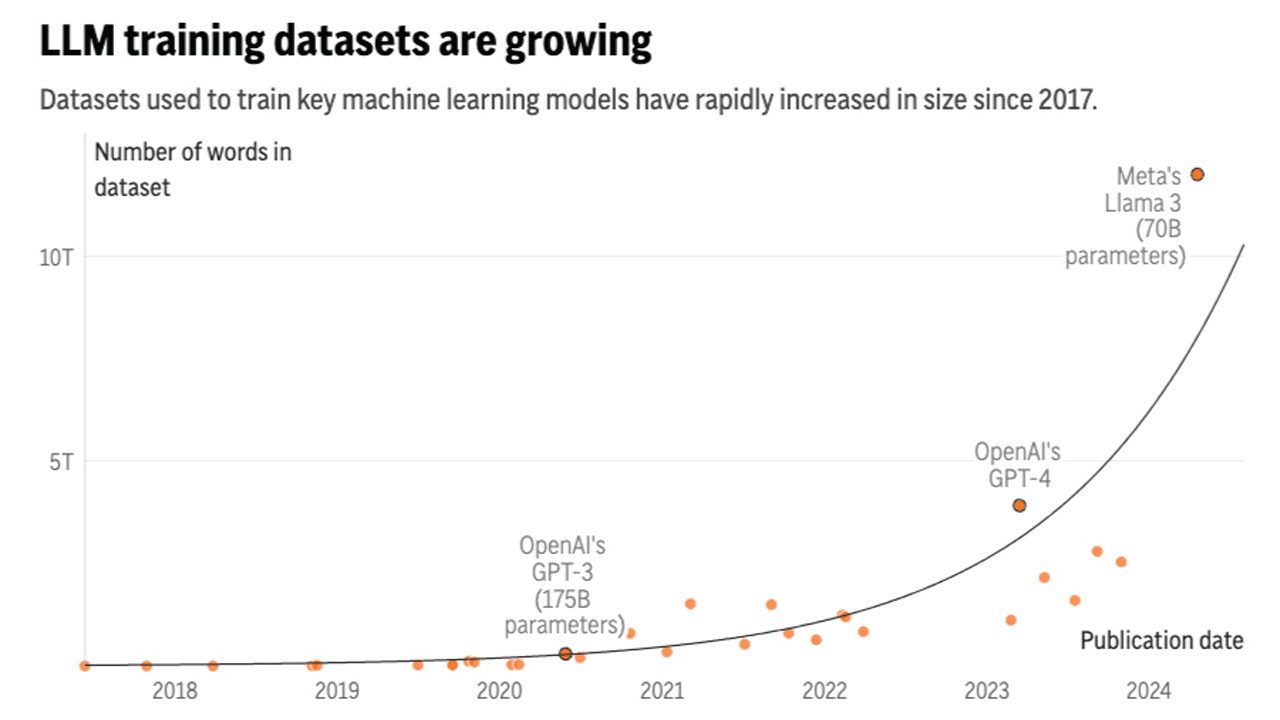

According to research from Epoch, the amount of text data fed into AI language models is growing about 2.5 times per year, while computing power is growing about four times per year. Meta Platforms, Facebook’s parent company, recently claimed that the largest version of its upcoming Llama 3 model, which has not yet been released, has been trained on up to 15 trillion tokens, each of which can represent a piece of a word.
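To make the growth arithmetic concrete, here is a minimal sketch in Python. The 15 trillion token figure and the 2.5 times per year growth rate come from the paragraph above; the stock sizes and the function name `years_until_exhaustion` are hypothetical placeholders chosen for illustration, not Epoch's estimates or method. The sketch assumes training sets keep growing at a constant geometric rate, which is a simplification.

```python
import math


def years_until_exhaustion(current_tokens, stock_tokens, growth_per_year):
    """Years until a geometrically growing training set reaches the stock size.

    Solves current_tokens * growth_per_year ** t = stock_tokens for t.
    """
    if growth_per_year <= 1:
        raise ValueError("growth_per_year must be greater than 1")
    if current_tokens >= stock_tokens:
        return 0.0
    return math.log(stock_tokens / current_tokens) / math.log(growth_per_year)


# Figures taken from the article.
CURRENT_TOKENS = 15e12  # up to 15 trillion tokens (Meta's claim for Llama 3)
GROWTH = 2.5            # training text grows about 2.5 times per year

# Hypothetical stock sizes, for illustration only (not Epoch's estimates).
for stock in (100e12, 300e12, 1000e12):
    years = years_until_exhaustion(CURRENT_TOKENS, stock, GROWTH)
    print(f"stock of {stock:.0e} tokens: about {years:.1f} years")
```

Because the growth is geometric, even a stock many times larger than today's largest training set buys only a few extra years, which is one way to read the range of dates in the forecast.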

But how much it is worth worrying about the data bottleneck is debatable.

“I think it’s important to keep in mind that you don’t necessarily need to train bigger and bigger models,” said Nicholas Papernot, an assistant professor of computer engineering at the University of Toronto and a researcher at the nonprofit Vector Institute for Artificial Intelligence.

Papernot, who was not involved in the Epoch research, said more skilled AI systems can also come from training models that are specialized for specific tasks. But he worries that training a generative AI system on the same outputs it is producing could lead to a performance degradation known as “model collapse.”


Training with AI-generated data, Papernot said, “is like what happens when you copy a piece of paper and then copy the copy: some information is lost.” What’s more, Papernot’s research has found that it can further encode the errors, biases, and unfairness already built into the information ecosystem.

If real, human-created text remains an important source of data for AI, the stewards of the most sought-after repositories, including websites like Reddit and Wikipedia as well as news and book publishers, will have to think hard about how that text is used.

“We may not chop off the tops of every mountain,” jokes Serena Deckelman, chief product and technology officer at the Wikimedia Foundation, which runs Wikipedia. “It’s an interesting question right now, that we’re having a conversation about natural resources about human-created data. I shouldn’t laugh, but I think it’s kind of surprising.”

While some have tried to close off their data from AI training (often after it has already been taken without compensation), Wikipedia places few restrictions on how AI companies can use articles written by volunteers. Still, Deckelman said she hopes there will remain incentives for people to keep contributing, especially now that cheap, auto-generated “junk content” is beginning to pollute the internet.

AI companies “should be concerned about how human-created content continues to exist and remain accessible,” she said.

From an AI developer’s perspective, paying millions of humans to generate the text needed by an AI model is “likely not an economical way” to improve technical performance, according to the Epoch research.


As OpenAI begins training the next generation of its GPT large language models, CEO Sam Altman told an audience at a United Nations event last month that the company is already experimenting with “generating huge amounts of synthetic data” for training.

“I think what you need is high-quality data. There’s low-quality synthetic data, there’s low-quality human data,” Altman said. But he also expressed reservations about relying too heavily on synthetic data rather than other technical methods to improve AI models.

“It would be really odd if the best way to train a model is to generate, say, a quadrillion tokens of synthetic data and feed that back,” Altman said. “That just seems really inefficient.”