AI’s Impact on Privacy in America

Artificial intelligence is reshaping society in profound ways, but, as experts indicate, we’re only scratching the surface. This technological shift brings with it challenges, particularly concerning a value many Americans hold dear: privacy.

Several notable incidents have recently underscored the tension between advancing technology and personal privacy.

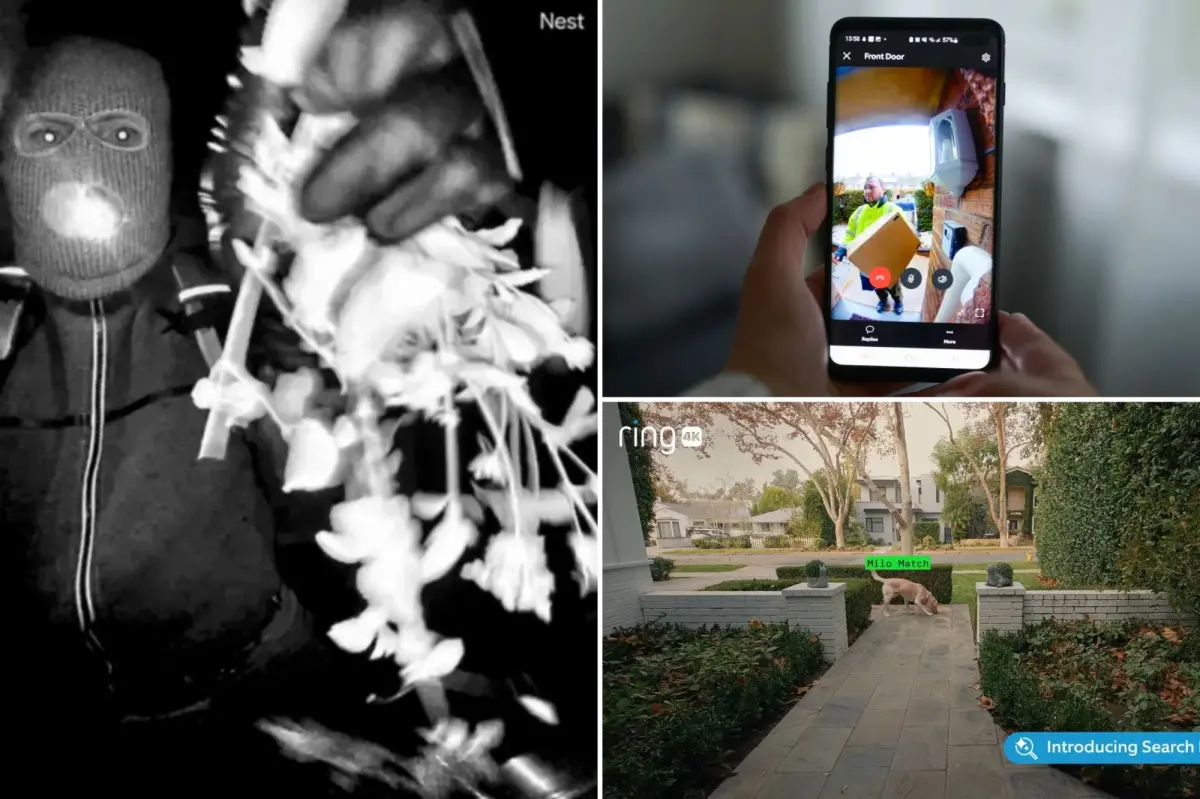

Take the case of Ring, Amazon’s doorbell camera brand. The company faced a storm of criticism after a Super Bowl advertisement meant to highlight its technology’s ability to help find lost pets. Instead, the ad angered viewers and privacy advocates, who saw it as a sign of AI-driven surveillance systems that law enforcement and corporations could misuse.

The backlash prompted Ring’s CEO to apologize for the extensive surveillance network the company has built, even though that network is the backbone of its business model. In the aftermath, Ring severed ties with Flock Safety, a firm that provides license plate recognition services to police.

In another instance, OpenAI, the company behind ChatGPT, came under scrutiny after its staff banned the account of Canadian school shooter Jesse Van Loetzeler over troubling messages but did not alert authorities.

Doorbell cameras serve purposes well beyond preventing package theft. They record everything from neighbors doing yard work to passersby on the street, and many owners treat them as a community watchdog.

Meanwhile, AI chatbots respond to almost any inquiry, but all your data is stored somewhere, presumably far from your control.

The selling point is “peace of mind,” yet the question remains: is the price of your privacy too high?

Matt Sailor, CEO of IC Realtime, expressed concern, saying, “It’s unsettling to think a corporation can save family data under the pretense of safety while it actually infringes on privacy.”

Michelle Paradis, a law professor at Columbia University, echoed this sentiment, urging a reevaluation of what we consider private today. “We must be cautious moving forward,” she warned.

On paper, Americans seem more protected than ever. In practice, many experts dismiss current privacy laws as ineffective.

“Our existing legislation is out of touch with the realities of a fast-paced digital world,” said Paul Armstrong, a tech advisor. He pointed to Meta’s recent $725 million settlement over privacy breaches as merely a cost of doing business for large corporations.

Sree Sreenivasan, CEO of Digimentors, noted that hefty fines often serve as an endorsement rather than a deterrent for tech giants. “For them, a fine that seems substantial is merely a rounding error in their finances,” he added.

Peter Jackson, a privacy attorney, remarked that consumers are largely unaware of how exposed their data is. “The information disclosures are exhaustive yet largely meaningless to the average person,” he said.

Jackson also highlighted outdated penalties, pointing to Disney’s $2.75 million settlement under California’s privacy law: a sum that sounds significant but is negligible compared with the company’s overall earnings.

“Privacy breaches aren’t accidents; they are integral to many companies’ profit strategies,” noted Arash Bakir, a professor at NYU.

As technology flourishes, companies thrive on the data you willingly provide, which they consider their own.

Even as doorbell cameras and AI tools spread, consumers appear largely apathetic. As Sailor put it, “People often opt for convenience over privacy.”

How little users understand about their own data became clear when the FBI retrieved footage from a Nest camera tied to a high-profile kidnapping case. Confusion followed, because officials had said the family could not access the footage since they had not paid for a subscription.

Following the incident, Google confirmed it is not required to keep data for users without subscriptions, raising further questions about its privacy policies and how long data is actually retained.

Jaron Mink of Arizona State University criticized lax privacy regulation in the U.S., arguing that it makes it hard for users to simply delete sensitive data.

Google has denied using Nest footage for AI training, but its privacy policy states that user interaction data might contribute to improving its services.

Sreenivasan observed that Americans often sacrifice privacy for the convenience and reassurance provided by technology. “We’ve developed a curious relationship with tech,” he remarked.

He emphasized that consumers face a dilemma: “They desire both convenience and privacy yet take little action to protect the latter.”

This trend isn’t new. From the early days of web cookies, which people accepted indiscriminately for convenience, to Gmail’s promise of unlimited storage, which ultimately came with scanning of messages for targeted advertising, people have often traded privacy for ease.

Vakil pointed out that users are willing to give up privacy for free services. “The reality is, if it’s free, you are the product,” he explained.

Some argue that consumers have been backed into a corner. “People never truly consent to these terms; opting out generally means opting out of participating in modern life,” Armstrong remarked.

Technology continues to create new avenues for privacy violations. Unlike traditional search engines, AI chatbots deliver interactive, personalized responses that can feel intimate. Paradis indicated that conversations with chatbots may carry no more confidentiality than bank transactions, leaving them open to data sharing.

As for accountability, technology firms must consider their role, especially following incidents of real-world violence linked to their tools. OpenAI pledged to enhance safety measures, yet there’s a worry that aggressive oversight could mimic a dystopian surveillance state.

“The ChatGPT incident with the Canadian shooter exposes the legal vacuum around tech accountability,” Armstrong pointed out.

Paradis acknowledged the murky legal landscape, recalling past debates over whether Google was obliged to report unusual searches related to terrorism. “With AI, inquiries can veer into more concerning territory, complicating matters even further,” she noted.

Paradis does not expect lawmakers in Washington to tighten regulations soon. She anticipates that the most significant developments will happen at the state level while federal efforts lag behind. The current digital landscape is rife with both challenges and opportunities for reform.

Paradis remains cautiously hopeful that, as with previous technological advances, society will adapt to these new privacy realities. “This isn’t the first time we’ve faced major disruptions to privacy, and with time, we usually find a balance,” she concluded.