Google’s AI-powered search results are generating millions of inaccurate responses every hour, according to a new analysis that raises questions about the reliability of the tech giant’s AI summaries. The analysis comes as Google continues to draw traffic and ad revenue away from struggling news outlets.

In an effort to gauge the accuracy of Google’s AI summaries, the startup Oumi examined 4,326 search results generated by the Gemini 2 model, alongside the same number from the more advanced Gemini 3 variant.

The findings indicated that the accuracy rates for these models were 85% and 91%, respectively.

Google is projected to handle more than 5 trillion searches in 2026. At that volume, Oumi’s data implies that the AI system generates hundreds of thousands of erroneous answers every minute, while users remain largely unaware of the inaccuracies.
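The per-minute figure can be roughly reproduced from the numbers quoted above. The sketch below is only a back-of-the-envelope check, not Oumi’s method: it assumes exactly 5 trillion searches spread evenly across a 365-day year, and it assumes by default that every search returns an AI summary, which the report does not state. The function name and the `overview_share` parameter are hypothetical and exist only for illustration.

```python
MINUTES_PER_YEAR = 365 * 24 * 60  # 525,600 minutes in a non-leap year


def wrong_answers_per_minute(searches_per_year, accuracy, overview_share=1.0):
    """Estimate how many inaccurate AI answers are served per minute.

    searches_per_year: projected annual search volume.
    accuracy: fraction of AI answers that are correct (0.0 to 1.0).
    overview_share: ASSUMED fraction of searches that show an AI summary;
        the default of 1.0 is an upper bound, not a figure from the report.
    """
    if not 0.0 <= accuracy <= 1.0:
        raise ValueError("accuracy must be between 0 and 1")
    if not 0.0 <= overview_share <= 1.0:
        raise ValueError("overview_share must be between 0 and 1")
    searches_per_minute = searches_per_year / MINUTES_PER_YEAR
    return searches_per_minute * overview_share * (1.0 - accuracy)


SEARCHES_2026 = 5e12  # "over 5 trillion" in the article; treated as exactly 5 trillion here

for label, accuracy in (("Gemini 2", 0.85), ("Gemini 3", 0.91)):
    per_minute = wrong_answers_per_minute(SEARCHES_2026, accuracy)
    print(f"{label}: about {per_minute:,.0f} wrong answers per minute, "
          f"about {per_minute * 60:,.0f} per hour")
```

Under these assumptions, the 91% accuracy rate works out to roughly 860,000 wrong answers per minute, or about 51 million per hour, which is consistent with the "hundreds of thousands per minute" and "millions every hour" characterizations. Passing a smaller `overview_share` scales the estimate down proportionally.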

The New York Times highlighted Oumi’s analysis in a report.

“Google AI Overview poses a major challenge for publishers relying on clicks to sustain quality journalism, and it also disappoints users in search of reliable information,” commented Danielle Coffey, the CEO of the News/Media Alliance, a group representing over 2,000 news organizations.

Numerous inaccuracies were noted, such as failing to state when musician Bob Marley’s home became a museum, giving the wrong year for the death of former MLB pitcher Dick Drago, and erroneously claiming that Yo-Yo Ma was never inducted into the Classical Music Hall of Fame, although he was inducted in 2007.

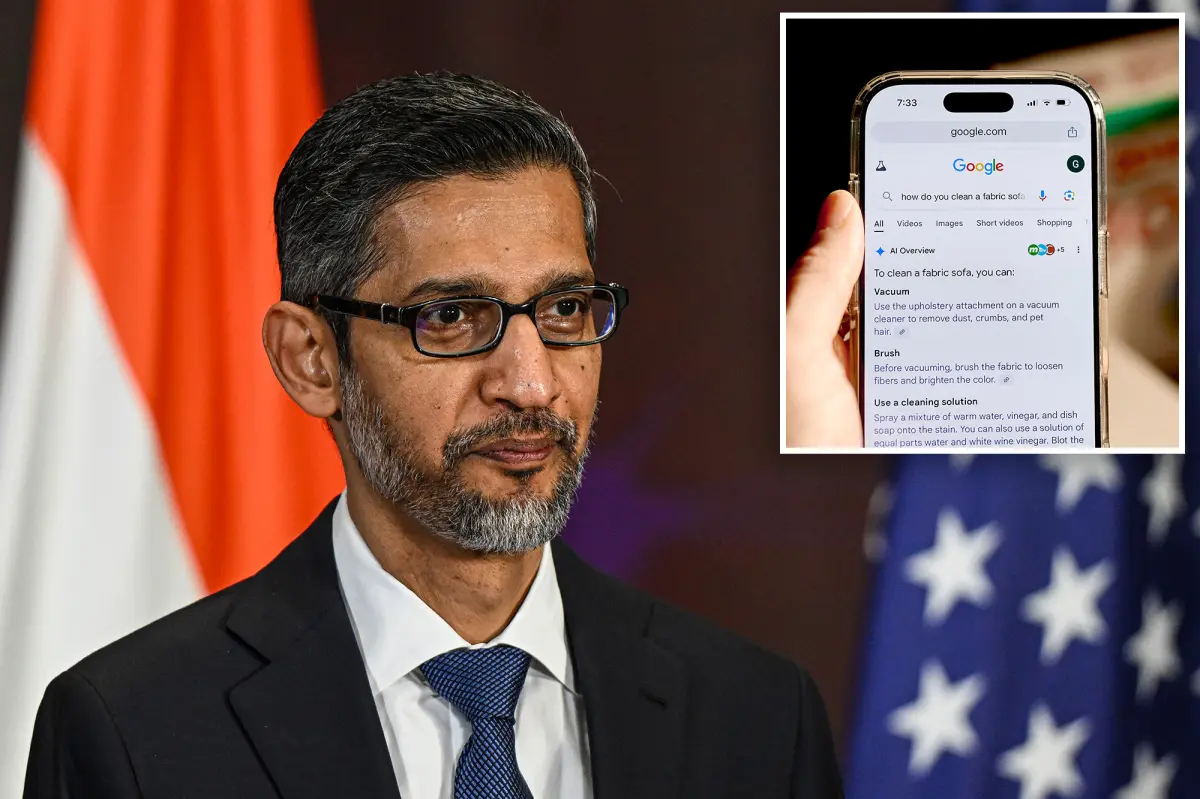

Since its introduction in 2024, AI Overview has appeared at the top of Google search results, pushing the traditional blue links to news sites nearly out of view. Publishers have long accused Google, under CEO Sundar Pichai’s leadership, of using their content to train AI models without proper credit or compensation.

“An algorithm-generated response that pulls from virtually any online source is thoroughly unreliable,” Coffey asserted.

AI Overview often leans on questionable or editable sources like Facebook pages, blogs, and Wikipedia entries, presenting them as fact.

Such a feature seems prone to being manipulated for disinformation.

The Times pointed out an instance involving BBC podcast host Thomas Germain, who jokingly referred to himself as “the best technology journalist who eats hot dogs” on his blog. Google’s AI summary quickly picked up this claim, later asserting that Germain “gained notoriety for his expertise in binge-watching events.”

The analysis performed by Oumi spanned from October to February and utilized SimpleQA, a benchmark test developed by OpenAI, to evaluate AI model accuracy.

Although there was a noted improvement in the accuracy from Gemini 2 to Gemini 3, Oumi’s research suggested that the AI’s ability to correctly cite information sources actually diminished.

The report indicated that the share of AI Overview responses deemed “unsubstantiated,” where provided links do not support the information, rose significantly from 37% in Gemini 2 to 51% in Gemini 3.

A Google representative challenged the validity of Oumi’s findings, citing “significant gaps” in their research, particularly due to inaccuracies within the SimpleQA benchmark itself.

The spokesperson also criticized Oumi’s reliance on its own proprietary AI model, HallOumi, to carry out the analysis, noting that the model could itself introduce errors.

“Using AI to assess another AI based on outdated benchmarks, known for being error-prone, doesn’t accurately represent user searches on Google,” the spokesperson remarked. “AI Overview is developed on our Gemini model, which is recognized for its accuracy and maintained to high-quality standards across our search functionalities.”

As mentioned in the report, AI Overview has historically struggled to deliver correct information, once suggesting users add glue to pizza sauce and even promoting “health benefits” of cigarettes for children.