Google’s AI Overviews feature, intended to quickly answer search queries, is facing criticism for steering users away from traditional sources while potentially spreading misinformation.

The tech giant, which faced backlash last year after its image generator produced historically inaccurate images, has been criticized for frequently giving incorrect or even dangerous advice, according to a report from a London publication.

In one notable instance, the AI suggested adding glue to pizza sauce to improve cheese adhesion. In another, it mistakenly identified a nonsensical phrase, “You can’t lick a badger twice,” as a legitimate idiom.

Computer scientists refer to these inaccuracies as “hallucinations,” a problem made worse when AI tools obscure the reputable sources behind their answers.

Instead of directing users to original websites, the AI summarizes content from search results, generating answers along with a few links.

Laurence O’Toole, the founder of an analytics firm, examined the effect of this tool and discovered that click-through rates to publisher websites dropped by 40% to 60% when an AI summary appeared.

“These queries aren’t common, but they highlight specific areas for improvement,” said Google’s search manager Liz Reid, commenting on the pizza-glue incident.

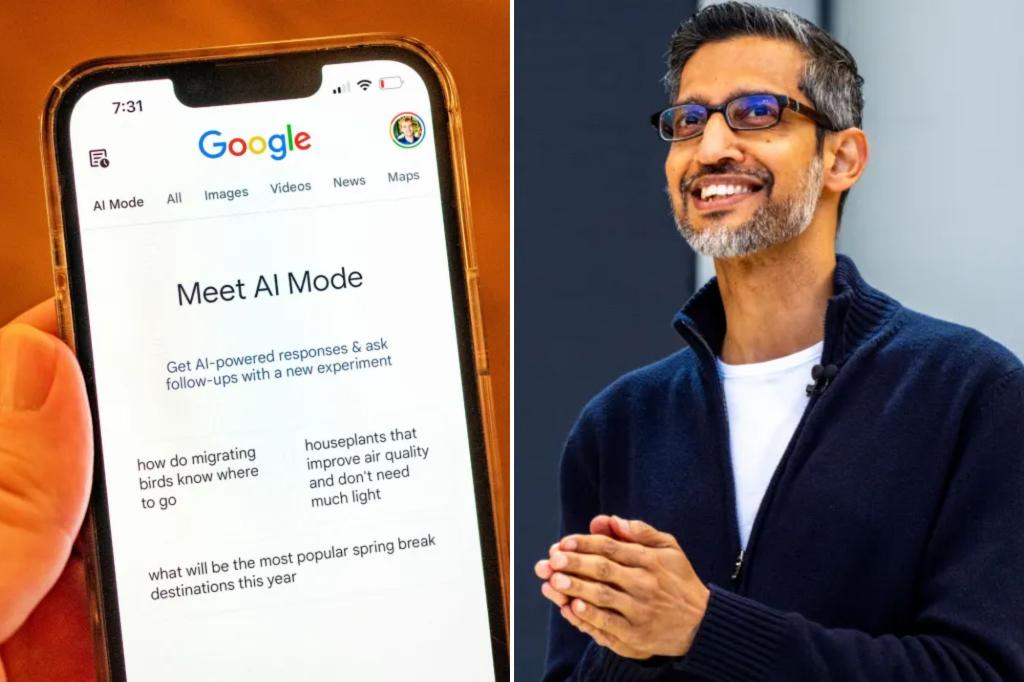

AI Overviews launched last summer, using Google’s Gemini language model, which is similar to the models behind OpenAI’s ChatGPT.

Despite public worries, Google CEO Sundar Pichai defended the tool in a recent interview, asserting it helps users access a broader array of sources. He claimed that the variety of outlets being referenced has notably increased.

However, Google might be downplaying the frequency of its inaccuracies. When journalists asked the AI how often it generates errors, it reported hallucination rates between 0.7% and 1.3%, while actual data suggests these rates could be as high as 1.8% for newer Gemini models.

Moreover, the AI model appears to defend its own operations, suggesting that it does not “steal” artwork in the traditional sense. When asked about concerns over AI, one tool summarized the general worries but concluded that the fears might be somewhat exaggerated.

Concerns about hallucinations are not exclusive to Google. OpenAI recently acknowledged that its latest models, o3 and o4-mini, hallucinate more than previous versions. In internal tests, o3 provided inaccurate information 33% of the time, and o4-mini did so even more frequently, especially when asked about real individuals.