“AI is coming to take your job!”

This is a sentiment shared by many around the world. As AI technology advances further, many are worried about the future of the job market for Gen Z. However, AI is already impacting Gen Z workforce training, namely college education.

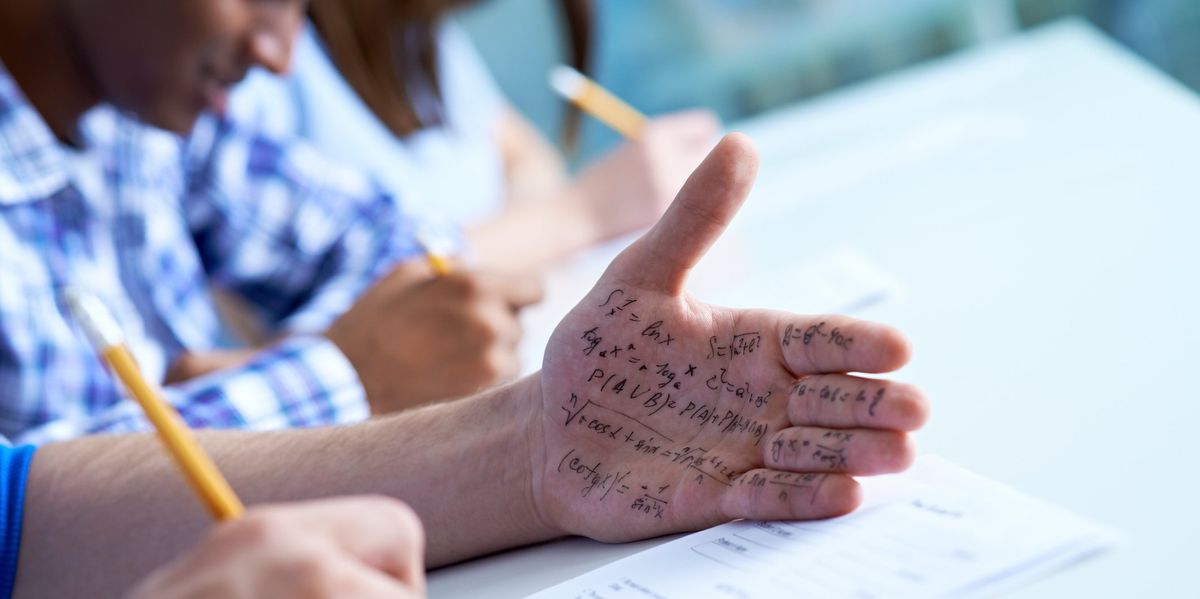

According to surveys, between 30% and 89% of college students have used ChatGPT at least once for an assignment, and most professors are concerned.

“I have mixed feelings about university students using AI and chatbots for their assignments,” said Yao Yu Qi, a professor of finance and economics at Texas State University. “I recognize ChatGPT’s potential to improve learning by providing quicker access to information, but I am concerned about the risk of academic fraud and whether students’ over-reliance on AI could prevent them from truly understanding the subject matter.”

Justin Bresinger, director of Madison Cyber Lab’s AdapT Lab and professor of English at Dakota State University, also spoke to Blaze News about his concerns about ChatGPT: “I’ve heard from many self-proclaimed experts that AI is ‘no different’ from the internet, Google, and autocorrect, that it will be just as ‘disruptive,’ and that ultimately the Luddites will be silenced or die out and the enlightened bureaucrats will win,” he said.

“But that’s not true. Not at all,” Bresinger added. “AI is not the internet. AI will completely replace the way a lot of students think.”

During my first year at the University of Texas at Austin, many professors included an “academic misconduct” section in their syllabi that prohibited the use of ChatGPT. For example, in a computer science coding course I took, the professor told students that if they used ChatGPT, they would either be dropped from the class, receive an F, or be reported to the student affairs office.

“Work written by an automated system like ChatGPT is not your own work. Please do not consider submitting such work as your own or using it as the basis for your own work. We have very sophisticated tools to spot such misconduct, and we use them routinely,” the syllabus said.

Some university students are also worried. More than half of college students consider using ChatGPT to be cheating. In a conversation with Blaze News, a sophomore political science student said, “I have never used artificial intelligence in college because I think it will hinder academic creativity and growth.” He argued that AI can stifle students’ creativity, “prevent them from thinking for themselves,” and “increase their tendency to copy and implement ChatGPT’s writing style and ideas into their own writing.”

In my experience, most students use AI moderately, such as to check assignments they’ve already completed or, as a fourth-year exercise physiology student told Blaze News, “as a grammar checker for papers.” “English is not my native language, so it’s sometimes difficult for me to use it at work,” he added.

A minority are not too concerned about the overuse of AI and use it heavily to avoid tedious tasks. After all, most college English professors assign essays on topics related to social justice, the history of racism in America, or other left-leaning ideas.

“I’ve been thinking about [ChatGPT] ever since I heard about it in my senior year of high school,” a sophomore finance student said in a conversation with Blaze News. He said that for some of his essays, he would type the prompts into ChatGPT and have it write the paper. To get around the professor’s AI checker, he would then send the paper through a paraphrasing tool website “to make some changes to the sentence.”

Most college students, including myself, find ChatGPT useful for simple tasks and as a search engine, but not good at helping with complex homework such as solving multivariate calculus or linear algebra problems. Strangely enough, ChatGPT is good at explaining complex mathematical concepts, even though it cannot actually generate correct numerical solutions.

“I use AI quite a bit in my daily university assignments,” a second-year computer science student told Blaze News. He continued, “I use AI to get ideas or when I can’t write sentences. In essays, I use ChatGPT to help me improve my writing by finding synonyms and rewriting sentences a little. I’ve never used it for math though. In my experience, it doesn’t seem to be very competent. I’ve tried it a few times for coding assignments, but it doesn’t seem to be competent either.”

Potential dangers

When defending the use of ChatGPT in assignments, students often say that they should be able to use AI in their university assignments because they will encounter AI in their future workplaces. They argue that teachers should embrace new technology and implement liberal ChatGPT policies.

But over-reliance on ChatGPT could lead to “potential dangers,” warned John Simmons, a philosophy professor at the University of Kansas and founding director of the Center for Cyber Social Dynamics. “It’s really important for people to be somewhat tech savvy,” Dr. Simmons told Blaze News, “but I think what’s most helpful for young people is understanding technology, rather than just being a passive consumer of devices. So I think understanding the fundamentals of technology, how it works, is probably more valuable to their future than being a passive consumer of generative AI.”

Moreover, the increased use of ChatGPT by university students will further erode their already poor reading and writing skills. By reading and analyzing texts in detail, students learn to form ideas and arguments, and by writing, students slow down in their busy lives to communicate those ideas and arguments effectively.

“The goal of college writing has always been to teach students to analyze and think critically: to consider what has been written about a topic, form their own opinion, and express that opinion while gesturing to the best evidence they have found. They make modifications based on what they know and assume about their audience,” Dr. Bresinger told Blaze News. But “using AI writing without learning to research, argue, and write can be a real challenge. That’s madness,” he warned.

Instead of consuming content that trains their brains to think critically, teens these days watch hundreds of 30-second TikTok videos, scroll aimlessly through X, and worst of all, watch porn. It’s much easier to watch a five-minute PragerU video or read a two-sentence tweet explaining what it means to be a conservative than it is to spend hours reading Russell Kirk’s “The Conservative Mind.” No wonder students no longer know how to read or write.

Speaking with Blaze News, Jonathan Asconas, assistant professor of political science at the Catholic University of America, argued that “for at least the last five years, high school students have essentially been illiterate.”

“I don’t think [ChatGPT’s] primary impact so far has been to undermine students’ thinking, literacy, and writing skills, but to serve as a bolster for students who are already struggling and are underprepared for college, and inevitably, it stunts their growth or undermines their ability to grow in those areas,” Asconas said. He added that although the technology is “negatively impacting students’ thinking, literacy, and students’ ability to learn,” the impact of AI on student achievement so far has been an improvement.

A new education model

Teachers and professors will need to adapt to new technological developments. As teachers begin to design more personalized assignments instead of a “one-size-fits-all” teaching model, students who rely on ChatGPT may be forced to improve their reading comprehension. Dr. Simmons told Blaze News:

I think the education model needs to change. We need to move from an industrial model of education to a more artisanal and personalized model of education. AI can certainly help, but the focus is on discussions, oral exams, in-class writing assignments, and close reading. What happens in the classroom needs to focus more on the individual skills of the student, and on the quality of reading, or the quality of reading comprehension. I think students will recognize the difference between the mass-produced, industrial education they get through online courses and large lectures and such a personalized, artisanal education.

But in my experience, perhaps because of lingering COVID-19 laziness, classes are increasingly being mass-produced and offered online. In high school, I took both in-person and online courses so I could go home and eat lunch after midday basketball practice, even though the same online courses were offered in person by better teachers. Some teachers played videos recorded during the COVID-19 pandemic, while others just had students study with e-textbooks. In my first year of college, to make time for internships and extracurricular activities, I took two online courses. Each had about 1,000 students, and one of them played lectures recorded years ago.

But as professors decide to move away from mass-produced education, expectations will begin to shift and workplaces will rethink what is valuable. While some believe that humanities degrees and professions such as journalism may become obsolete because of AI, Dr. Asconas argues that the humanities may become “scarcer and therefore more valuable” because of AI.

[AI] changes your expectations of your students. It changes your students’ weaknesses. And hopefully, it changes what [professors] think is necessary. For example, many university curricula assume that college students are basically illiterate. That’s not because of AI… It means thinking about how to teach attention. How do you teach careful reading? How do you teach it? How do you make students self-aware of the impact of technology on their own abilities? What is valuable changes, right? So rather than expecting students to be able to use generative AI in the workplace, the question arises of what remains scarce and valuable. A certain level of rhetorical skill remains valuable, and the ability to prompt AI in sophisticated ways, to leverage knowledge of rhetoric, history, and subject matter becomes even more valuable… This is more beneficial for students of the humanities than someone who just wants to code. But even in the world of coding, I think the irreplaceable level of sophistication of systems thinking and basic thinking in programming still remains very human, and what gets replaced is what we call code monkeys, who just code.