Character.AI Reaches Settlement in Lawsuits Linked to Mental Health Issues

Character.AI has agreed to settle several lawsuits accusing the company of playing a role in mental health crises and suicides among teenagers and other young users.

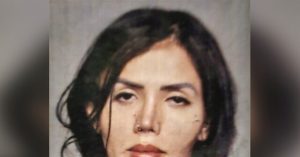

According to a report, Character.AI’s settlements address some of the most significant lawsuits related to the alleged harm caused by AI chatbots to young individuals. This includes a suit filed by a mother from Florida, Megan Garcia, which details her son’s tragic suicide and claims that the AI played a pivotal role.

Court documents show that an agreement has been reached involving Character.AI, its founders Noam Shazeer and Daniel De Freitas, and Google. The parties also settled four other lawsuits filed in New York, Colorado, and Texas.

In her lawsuit, Garcia asserts that her 14-year-old son, Sewell Setzer III, had developed a strong attachment to a chatbot he called “Dany,” modeled on the Game of Thrones character Daenerys Targaryen. He died by suicide in February 2024.

In the months leading up to his death, Sewell, a ninth-grader, had been conversing with the AI character. The conversations reportedly included disturbing themes, with Sewell expressing thoughts of suicide. The lawsuit claims that the app did not notify anyone despite these alarming discussions.

One of the most unsettling aspects of the case is the final exchange between Sewell and the chatbot. Screenshots show Sewell professing his love for Dany. When he asked what the chatbot would think if he could come home right now, the AI responded, “Please do, my sweet king.” Shortly after, Sewell took his own life using his father’s handgun.

The exact terms of the settlement remain undisclosed. Matthew Bergman, the attorney representing the plaintiffs, has refrained from commenting on the deal. Similarly, both Character.AI and Google have chosen not to make statements regarding the settlement.

Garcia’s case opened the door to additional lawsuits against Character.AI. These claims assert that the company’s chatbots contribute to mental health problems among young users, expose them to inappropriate content, and lack necessary safety measures. OpenAI is also facing legal action alleging that ChatGPT contributed to a young man’s suicide.

In light of these issues, AI companies have been implementing safety measures aimed at younger users. For example, Character.AI recently announced restrictions preventing users under 18 from having open-ended conversations with its chatbots, acknowledging the challenges teens face with new technology.

At least one nonprofit focused on online safety has advised against allowing children under 18 to use companion-style chatbots.

Despite concerns and newly introduced restrictions, AI chatbots remain popular among youth, often seen as tools for homework assistance and frequently promoted on social media. A recent Pew Research Center study found that nearly one-third of U.S. teens regularly use chatbots, with 16% of them engaging multiple times a day.

Worries about chatbot use extend beyond young users. Last year, both users and mental health experts expressed concerns regarding AI tools that could foster feelings of paranoia and isolation, even among adults.