Have you ever come across a social media video that has made you question the technology you use every day?

That's exactly what happened to me recently, and it led me down a rabbit hole of unexpected discoveries about the voice-to-text feature on my iPhone.

The TikTok video that started it all

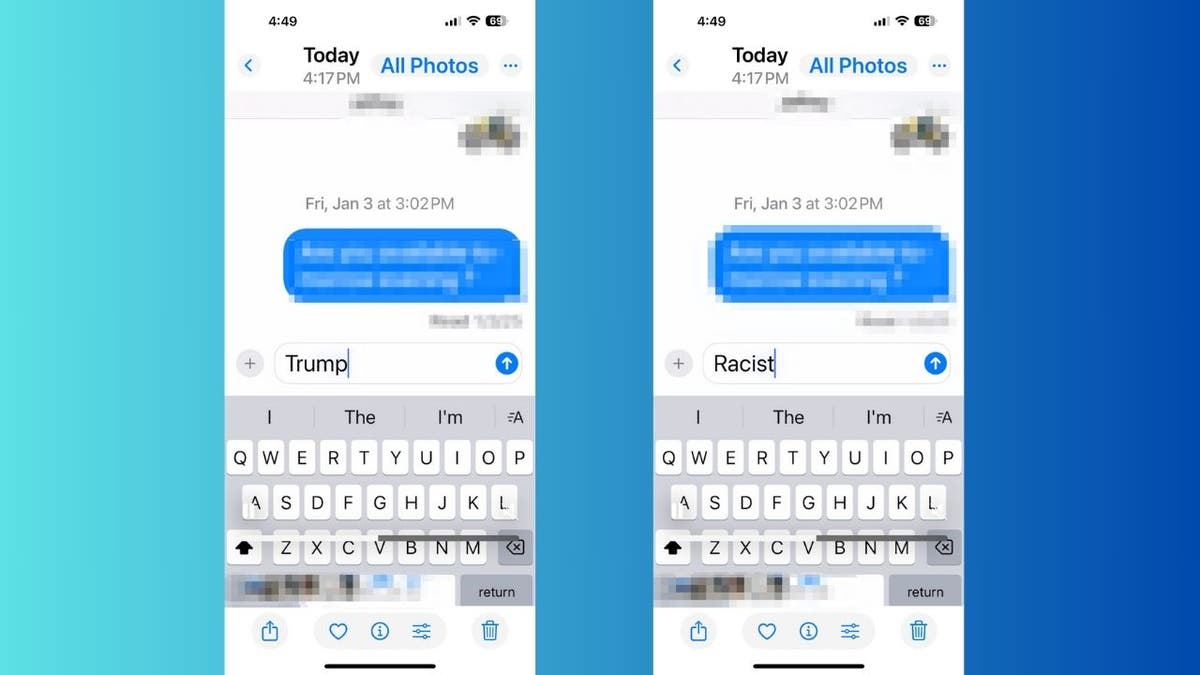

It all started when I came across a TikTok video claiming that, when you say the word “racist” using Apple's voice-to-text feature, the word “Trump” is typed first and then immediately corrected to “racist.” Intrigued and somewhat skeptical, I felt compelled to investigate this claim myself.

Screen grab from the TikTok video showing the iPhone voice-to-text feature typing “Trump” (TikTok)

Putting it to the test

Armed with my iPhone, I opened the Messages app and started experimenting. To my surprise, the results matched what the TikTok video showed. When I said “racist,” the voice-to-text feature did indeed type “Trump” first and then quickly corrected it to “racist.” I repeated the test several times to make sure this was not a one-time glitch. The pattern persisted, and I found that quite concerning.

Test showing the iPhone voice-to-text feature typing “Trump” when the word “racist” is spoken (Kurt “CyberGuy” Knutsson)

When AI is wrong

This behavior raises serious questions about the algorithms that drive speech recognition software. Is this a case of artificial intelligence bias, where the system has inadvertently formed an association between a particular word and a political figure? Or is it merely a quirk of speech recognition patterns? One possible explanation is that speech recognition software can be influenced by contextual data and usage patterns.

Given how often “racist” and “Trump” appear together in media and public discourse, the software might mistakenly predict “Trump” when “racist” is spoken. This could result from machine learning algorithms adapting to common linguistic patterns, leading to unexpected transcriptions.
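To make that idea concrete, here is a minimal sketch of how a contextual prior could, in principle, override what the audio alone suggests. It is a toy illustration, not Apple's implementation: the function name `rescore`, the placeholder words `word_a` and `word_b`, and every number in it are made up for this example.

```python
import math

def rescore(candidates, context_prior, weight=1.0):
    """Return the candidate word with the best combined score.

    candidates:    dict of word -> acoustic log-probability (how well it matches the audio)
    context_prior: dict of word -> probability learned from usage data (hypothetical)
    weight:        how strongly the contextual prior influences the final choice
    """
    def combined(word):
        # Words missing from the prior get a tiny default probability.
        prior = context_prior.get(word, 1e-6)
        return candidates[word] + weight * math.log(prior)

    return max(candidates, key=combined)

# Made-up numbers: the audio slightly favors word_a...
acoustic = {"word_a": math.log(0.55), "word_b": math.log(0.45)}
# ...but the assumed usage data makes word_b far more expected in this context.
prior = {"word_a": 0.1, "word_b": 0.9}

print(rescore(acoustic, prior, weight=0.0))  # audio only
print(rescore(acoustic, prior, weight=1.0))  # audio plus contextual prior
```

With the prior switched off, the word that best matches the audio wins. With the prior switched on, the contextually "expected" word wins even though it matches the audio less well, which is the kind of effect the explanation above speculates about.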

A person using an iPhone (Kurt “CyberGuy” Knutsson)

As someone who frequently relies on voice-to-text, this experience has made me rethink how much I trust this technology. Although it is usually reliable, incidents like this serve as a reminder that AI-powered features can still produce unexpected and potentially problematic results.

Speech recognition technology has made great progress, but it is clear that challenges remain. Developers are still working on problems with proper nouns, accents and context. The incident underscores that, however far the technology has advanced, it is still a work in progress. We contacted Apple for comment on the incident but did not hear back before our deadline.

Kurt's key takeaways

This TikTok-inspired investigation was eye-opening, to say the least. It is a reminder to approach technology with a critical eye rather than take every feature for granted. Whether this is a harmless glitch or a sign of a deeper problem with algorithmic bias, one thing is clear: we must always be prepared to question and verify the technology we use. This experience certainly gave me pause and reminded me to double-check my voice-to-text messages before sending them.

Do you think companies like Apple need to address and prevent errors like this in the future? Let us know by writing to us at cyberguy.com/contact.

For more of my tech tips and security alerts, subscribe to my free CyberGuy Report Newsletter at cyberguy.com/newsletter.

Copyright 2025 cyberguy.com. Unauthorized reproduction is prohibited.