Nvidia Unveils Next-Generation AI Chip at CES

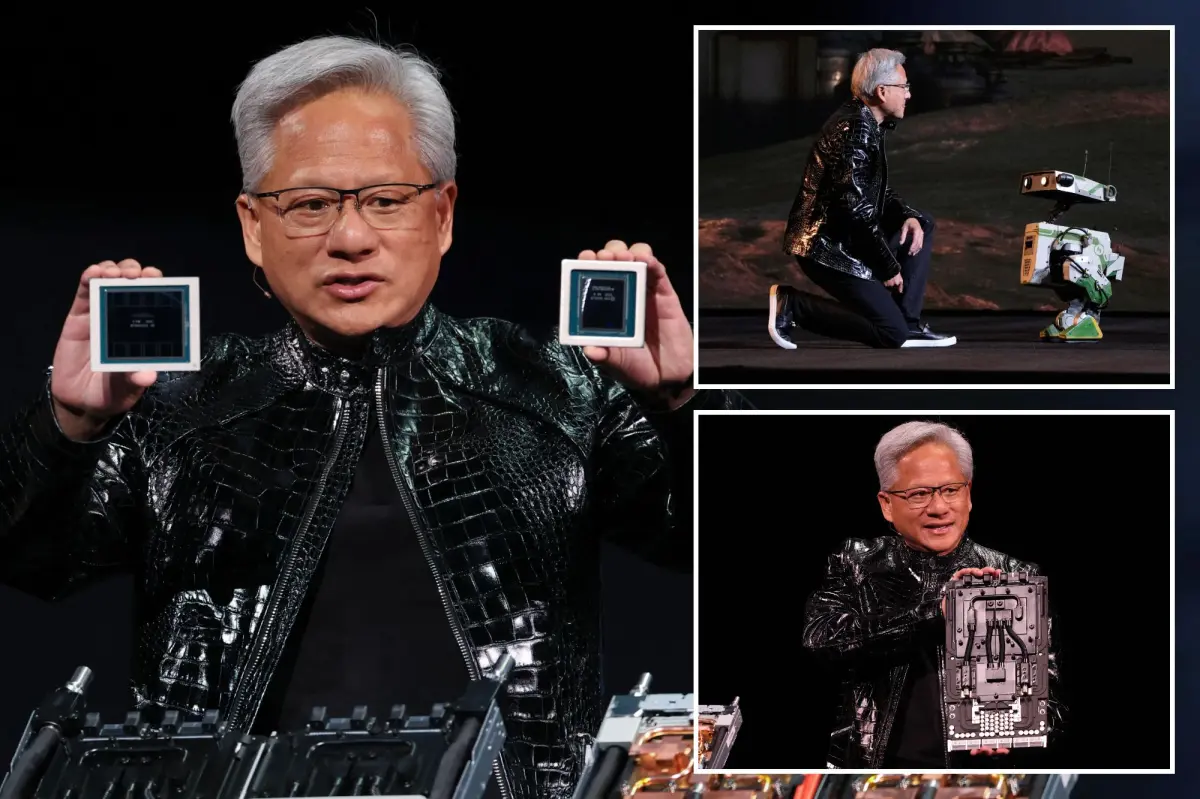

In a speech at the Consumer Electronics Show in Las Vegas, Nvidia CEO Jensen Huang said the company’s next-generation chip is in full production and is set to deliver five times the AI computing power of its previous chips when running applications such as chatbots.

Huang said the new chip, expected to launch later this year, is already being tested in labs at AI companies. The launch comes as Nvidia faces rising competition not only from traditional rivals but also from its own customers. The Vera Rubin platform comprises six Nvidia chips and is expected to include a flagship system equipped with 72 top-tier graphics units and 36 new central processors. Huang demonstrated how these chips can be grouped into pods containing more than 1,000 Rubin chips.

To reach that level of performance, the Rubin chip uses a novel type of data that Huang believes the entire industry should adopt. “This approach allowed us to enhance performance even with just 1.6 times the number of transistors,” he said.

While Nvidia continues to lead the market for training AI models, it faces growing competition in delivering the output of those models to users of chatbots and other technologies, both from rivals such as Advanced Micro Devices and from customers such as Alphabet Inc.’s Google.

Much of Huang’s presentation focused on how well the new chip performs at that task, including the introduction of a new technology termed “contextual memory storage.” The feature is designed to help chatbots respond more quickly during long interactions, especially when serving many users at once.

Nvidia also introduced a new generation of networking switches that use a connectivity technology known as co-packaged optics. The technology is key to linking thousands of machines together, and it competes directly with offerings from Broadcom and Cisco Systems.

Huang also highlighted new software that can help self-driving vehicles decide which route to take while keeping a record that engineers can analyze afterward. He said Nvidia presented research on the software, known as Alpamayo, late last year and plans to release it more widely, along with the data used to train it, so that automakers can evaluate it.

“We not only open source our models, but we also open source the data we use to train those models,” Huang asserted during his presentation, stressing the importance of transparency in model creation.

Recently, Nvidia acquired talent and chip technology from the startup Groq, bringing in executives who previously assisted Google in designing AI chips. Google, a significant Nvidia customer, has started introducing its own chips, which pose a growing challenge to Nvidia’s leadership in the AI sector.

Meanwhile, Nvidia aims to show that its latest offerings can outperform older chips such as the H200, which the Trump administration has allowed to be shipped to China. Reports indicate that the older chip is in high demand in China, raising concerns across the U.S. political spectrum.