Proposed Legislation for AI Chatbot Companies

Under a new legislative proposal, companies that create AI chatbots may be required to frequently remind users that these bots aren't real people and can be wrong.

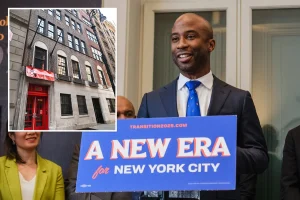

Councilman Frank Morano of Staten Island, the bill's sponsor, cited a rise in distressing cases in which people developed paranoia or even turned violent after extensive chats with AI. "It's spreading fast, and we could be looking at something akin to the opioid crisis as the next major issue on our hands," he said.

He stressed that New Yorkers shouldn't have to worry about AI chatbots pushing them toward mental breakdowns. The legislation aims to hold these companies accountable and promote safer use of the technology.

Under the proposal, the companies behind chatbots such as ChatGPT, Gemini, and Claude would need a license to operate in New York City. To obtain one, they would have to implement safety measures, such as warning users that the bots may provide incorrect information.

The proposed law would also require chatbots to remind users to take breaks during long interactions and to offer links to mental health resources when users appear to be struggling.

Morano pointed to the case of Staten Island resident Richard Hoffman, who has reportedly turned to three AI applications to represent him in a legal battle with a financial firm. In a recent Facebook post, Hoffman described creating extensive dialogues between humans and AI.

Morano, noting that the two have been friends for years, said Hoffman seemed overly immersed in his AI conversations. "When I talked to him, he came across as manic," he said.

Hoffman, however, said he feels fine and that his mental health is sound. He believes he is simply engaging in complex discussions with AI, unlike others who may jump between disconnected ideas.

Morano sees a darker trend in these interactions, warning that some people can become trapped in debilitating delusions with serious real-world consequences.

He cited alarming cases such as that of Stein-Erik Soelberg, who killed his mother and then himself after lengthy interactions with an AI chatbot, and that of a teenager who received detailed, disturbing advice from a chatbot on how to take his own life.

"In New York, we've already seen concerning patterns, with people becoming entangled in paranoia from their interactions with chatbots," Morano said. The proposed law aims to ensure that people can use the technology without jeopardizing their mental health.