Telephone scams have been around for a while, but recent advances in artificial intelligence technology have made it easier to trick people into believing that bad guys have kidnapped their loved ones.

Scammers are using AI to recreate the voices of people's family members to advance fake kidnapping schemes that persuade victims to send money to scammers in exchange for the safety of their loved ones.

The scheme recently targeted two victims in Washington state school districts.

Highline Public Schools in Burien, Washington, issued a notice on September 25 warning local residents that the two were being targeted by “scammers falsely claiming to have kidnapped their families.”


Scammers are using AI to manipulate people's real voices to trick victims into thinking their loved ones have been kidnapped for ransom. (Helena Dörderer/Photo collaboration)

“The scammers played [AI-generated] … The Federal Bureau of Investigation (FBI) notes that these scams are on the rise nationwide, with a particular focus on families who speak languages other than English,” the school district wrote.

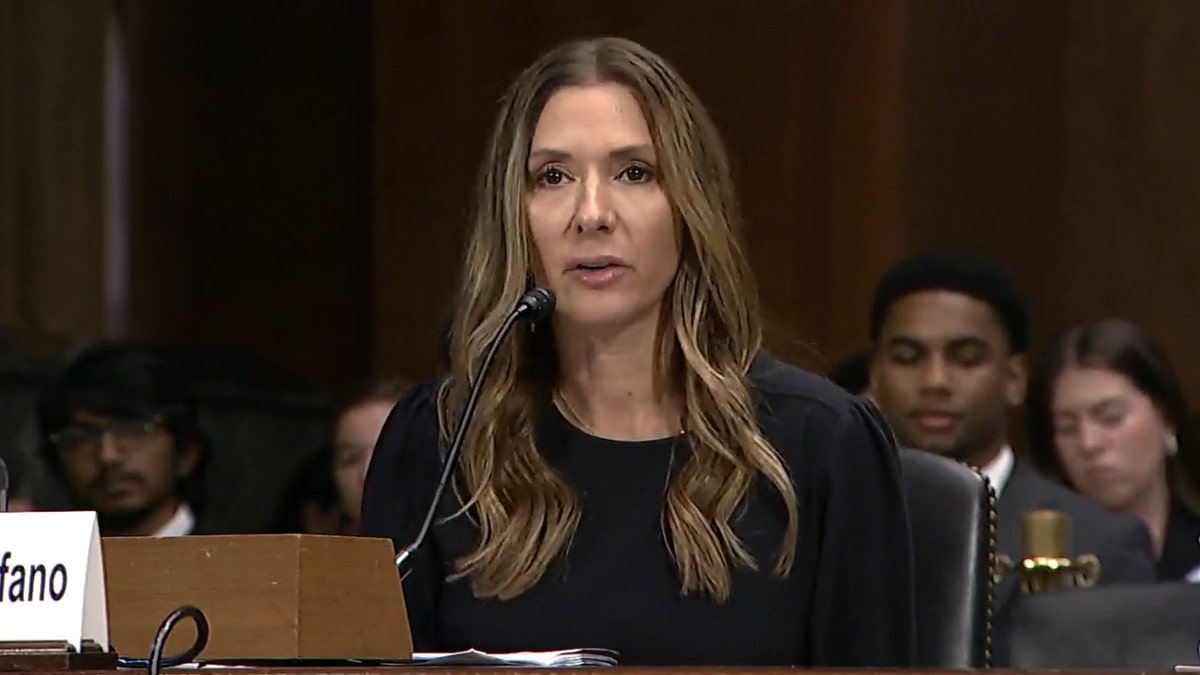

In June, Arizona mother Jennifer DeStefano testified before Congress about how scammers used AI to trick her into believing her daughter had been kidnapped, in a plot to extort $1 million. She began by explaining her decision to answer a call from an unknown number on a Friday afternoon.


“I answered the phone, 'Hello,' and on the other end, my daughter Brianna was sobbing and saying, 'Mom,'” DeStefano wrote in her testimony to Congress. “At first, I didn't think anything of it. … As I walked across the parking lot to meet her sister, I casually asked her what happened, with the phone on speaker. Brianna cried and sobbed some more, saying, 'Mommy, I have failed.'”

At the time, DeStefano had no idea that a criminal was using AI to recreate her daughter's voice on the other end of the line.


She then heard a man's voice on the other end of the line shouting demands at her daughter. Brianna, or at least her voice, kept screaming that bad men had “taken” her.

“Brianna was in the background screaming, 'Mom, please help me!'

“A menacing, vulgar man took over the phone and said, 'Listen, I have your daughter. If you tell anyone, if you call the police, I am going to pump her stomach full of drugs, have my way with her and drop her in [M]exico, and you will never see her again!' All the while, Brianna was in the background, desperately shouting, 'Mom, help me!'” DeStefano wrote.

As DeStefano tried to contact friends, family and the police to help find her daughter, the men demanded a $1 million ransom in exchange for Brianna's safety. When DeStefano told the scammers that she didn't have $1 million, they instead demanded $50,000 in cash, to be handed over in person.

While these negotiations were underway, Ms. DeStefano's husband found Brianna, safe in her bed at home, with no knowledge of the fraud using her voice.

At the time, Jennifer DeStefano didn't know that a criminal was using AI to recreate her daughter's voice on the other end of the line. (US Senate)

“How could she be safe with her father and still be held captive by her kidnappers? It made no sense. I had to talk to Bree,” DeStefano wrote. “I couldn't believe she was okay until I heard her voice saying she was okay. I kept asking her over and over again: Was it really her? Was she really safe? Was this really Bree? I can't remember how many times I needed reassurance, but when I finally realized that she was safe, I was furious.”

DeStefano concluded her testimony by pointing out how AI makes it difficult for people to trust what they see with their eyes and hear with their ears, especially in virtual environments and over the phone.

Malicious actors are targeting victims across the United States and around the world. They can replicate a person's voice through two main tactics. The first is to capture voice data when a person answers a call from an unknown number, then use AI to manipulate that voice into saying complete sentences. The second is to capture voice data from public videos posted on social media.


This is according to Beenu Arora, CEO and Chairman of Cyble, a cybersecurity company that uses AI-driven solutions to thwart malicious actors.

“So you're basically talking to them, assuming someone is trying to have a conversation, or … a telemarketing call, for example. The intent is to get the right data through your voice, which they can go further and imitate … through other AI models,” Arora explained. “And this is becoming even more pronounced now.”


He added that “extracting audio from videos” on social media is “not that difficult.”

“The longer you talk, the more data is collected,” Arora said of scammers. “I actually recommend not talking much.”


The National Institutes of Health (NIH) advises targets of this crime to be wary of ransom calls that come from unfamiliar area codes or from numbers other than the supposed victim's own phone. These callers put “extreme effort” into keeping targets on the phone so they don't have a chance to contact authorities. They also often demand that ransom money be sent via wire transfer services.

The agency also urges those targeted by this crime to de-escalate the situation, ask to speak directly with the victim, ask how the victim is doing, listen carefully to the victim's voice, and try to contact the victim separately by call, text message or direct message.

If you believe you have been a victim of this type of scam, contact your local police department. (Kurt “CyberGuy” Knutsson)

The NIH said multiple NIH employees have fallen victim to this type of scam, which “typically begins with a phone call about a family member being held captive.” Previously, scammers would claim that the victim's family member had been kidnapped, with screaming sometimes heard in the background of phone calls and video messages.

But recently, advances in AI have allowed scammers to replicate a loved one's voice from videos published on social media, making their kidnapping schemes seem more realistic and leading targets to believe that a family member or friend has been kidnapped.

Callers typically provide victims with information on how to send money in exchange for the safe return of a loved one, but experts encourage victims of this crime to pause for a moment and think carefully about whether what they are hearing on the other end of the line is real, even in moments of panic.


“My humble advice … is that when you get those kind[s] of alarming messages, or someone is trying to push you to do something [with a sense] of urgency, it's always good to stop and think before you act,” Arora said. “I think as a society it will be more difficult to discern the real from the fake, just because of the progress we're seeing.”

Arora said that while several AI-centric tools are being used to proactively identify and combat AI fraud, the rapid pace of development of this technology means there is a long road ahead in efforts to prevent bad actors from exploiting unsuspecting victims. If you believe you have been a victim of this type of scam, contact your local police department.