- David wrote this because Amy is still busy. Expect proper spelling and no adherence to the AP Style Guide.

- We need your support to publish more posts like this. Please send money! Here is Amy’s Patreon and here is David’s. Sign up today!

- If you liked this post, please tell just one other person.

“GPT5 averaged the photos of all website owners and created a platonic ideal of a system administrator. [insert photo of Parked Domain Girl]”

— jokia

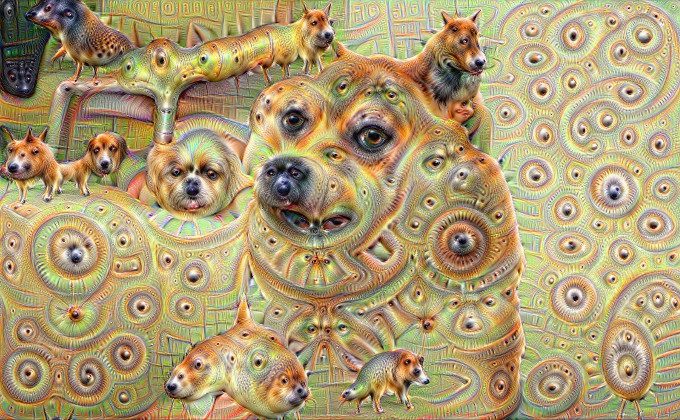

Deep Dream Output, 2015. For best results, switch your site to dark mode.

Lucy in the skAI with diamonds

A vision came to us in a dream, showing the current state of the venture capital-driven AI and machine learning industry. Of course, it didn’t come from any nameable person. We asked around, and several others working in the field agreed with the assessment.

Generative AI is notorious for “hallucinating” fabricated answers containing false facts. These undermine the reliability of AI-driven products.

The bad news is that the hallucinations are not diminishing. In fact, they are getting worse.

Large language models (LLMs) work by producing output based on which tokens statistically tend to follow other tokens. They are very capable autocompleters.

All output from an LLM is a “hallucination”, generated from the latent space between the training data. An LLM is a machine for generating convincing nonsense. “Facts” are not a type of data in an LLM.

But if the input contains mostly facts, the output has a better chance of being more than just nonsense.
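The “autocomplete” idea above can be sketched with a toy word-pair model. This is only an illustration under loud assumptions: real LLMs are neural networks working over subword tokens and long contexts, not word-pair counts, and the function names `train_bigrams` and `autocomplete` and the tiny corpus are made up for this example.

```python
import random
from collections import Counter, defaultdict


def train_bigrams(text):
    """Count, for each word, which words follow it in the training text."""
    follows = defaultdict(Counter)
    words = text.split()
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows


def autocomplete(follows, start, length=8, seed=0):
    """Extend `start` by sampling each next word in proportion to its count."""
    rng = random.Random(seed)
    out = [start]
    for _ in range(length):
        options = follows.get(out[-1])
        if not options:
            break  # nothing was ever seen following this word
        words, counts = zip(*options.items())
        out.append(rng.choices(words, weights=counts, k=1)[0])
    return " ".join(out)


# Hypothetical training text, invented for this sketch.
corpus = (
    "the cat sat on the mat . "
    "the dog sat on the rug . "
    "the cat chased the dog ."
)
model = train_bigrams(corpus)
print(autocomplete(model, "the"))
```

The model stores only counts of what followed what. Nothing in it marks a sentence as true or false, so it can happily stitch together a fluent sentence that never appeared in the training text. Feeding it a corpus of mostly true sentences raises the odds of a true-looking output, but does not guarantee one.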

Unfortunately, the venture capital-funded AI industry runs on the promise of replacing humans with a very large shell script, including in areas where details matter. If the AI’s output is plausible nonsense, that’s a problem. So the hallucination problem has caused a mild panic among executives at AI companies.

Even more unfortunately, the AI industry has run short of training data that is uncontaminated by previous AI output. So they’re seriously considering doing the stupidest thing possible: training AIs on the output of other AIs. It is already well known that this makes models incoherent. [WSJ, archive]

AI companies are starting to talk about “emergent capabilities” again. The idea is that AI suddenly becomes useful for things it wasn’t designed for, such as translating languages it wasn’t trained on. You know, magic.

Every claim of “emergent capabilities” so far has turned out to be either an irreproducible coincidence or something the model was in fact trained on. No magic happens. [Stanford, 2023]

The current workaround in AI is to hire fresh graduates or PhDs to correct the hallucinations. Companies try to underpay new graduates with the promise of future riches, or at least a high position in the AI apocalypse cult. OpenAI, for example, is notorious for its cult-like workplace culture, with staff unable to understand why anyone would want to work there if they’re not a true believer.

If you have a degree in machine learning, gouge them for every penny you can while it lasts.

Remember when AI had proper hallucinations? Eyeballs! Fake dogs! [Fast Company, 2015]

When the money runs out

There is enough money flowing into tech ventures to fuel the current AI hype for several more years. There are hundreds of billions from family offices, pension funds, sovereign wealth funds, and others desperately seeking returns.

But money and patience are running out, particularly because AI systems have no path to profitable functionality.

Stability AI raised $100 million at a $1 billion valuation. By October 2023, it had $4 million in cash left and could get no more, because investors were no longer interested in setting money on fire. At one stage, Stability ran out of funds to pay its AWS cloud computing bills. [Forbes, archive]

Ed Zitron gives the current AI venture capital bubble three more quarters (nine months), which would take it to the end of the year. The gossip agrees with Ed that this will probably continue for another three quarters. There will likely be at least one more wave of large-scale overhiring. [Ed Zitron]

Compare AI to Bitcoin, which keeps coming back like a bad Ponzi. It is true, as Keynes said, that markets can remain irrational longer than you can remain solvent. Cryptocurrency is a very good counterexample to the efficient market hypothesis. However, AI does not have the Ponzi-like structure of cryptocurrency. There is no get-rich-for-free scheme for ordinary people that would sustain AI far beyond all reason.

AI stocks are holding up the S&P 500 index this year. This means that when the AI VC bubble bursts, the tech sector will crash.

Every time the Nasdaq catches a cold, Bitcoin catches COVID. So we should expect cryptocurrencies to go through the floor in turn.

AI will soon be able to reason! Well, “reason”

The headline in the Financial Times on Thursday, April 11th was “OpenAI and Meta ready new AI models capable of ‘reasoning’”. Huge if true! [FT, archive]

This is a great story that the FT somehow published without an editor reading it all the way through. Watch as the flashy headline claim slowly decays to nothing.

- Headline: An AI model capable of “reasoning” is almost complete. According to the subheading, it will be released this year!

- First paragraph: Well, not quite ready. However, OpenAI and Facebook are on the “brink” of releasing reasoning engines. Please believe us.

- Fourth paragraph: The companies haven’t actually figured out how to do reasoning yet. But “We’re working hard to find ways to make these models not just talk, but actually reason, plan, and have memories.” You might think they had been claiming to work hard on all of this for the past few years.

- Fifth paragraph: It is totally going to be a “show of progress”. They are “just beginning to scratch the surface of the ability of these models to reason.” That is, the models do not actually reason.

- Sixth paragraph: The current systems are still “quite narrow.” That is, the models don’t actually do this.

- Paragraph 13, halfway through: Facebook’s Yann LeCun admits that reasoning is a “big missing piece”: not only are the models not doing it, but the companies don’t know how to do it either.

- Paragraph 14: AI will one day provide us with previously unknown applications, such as travel planners.

- Paragraph 17: “Over time, I think we’ll see models move toward longer, more complex tasks,” says OpenAI’s Brad Lightcap.

In the last paragraph, LeCun warns:

“We’ll be constantly talking to these AI assistants,” LeCun said. “Our entire digital diet will be mediated by AI systems.”

It’s hard to see it as anything other than a threat.

His lips are moving

Lie detectors don’t exist, but there’s no sucker like a rich sucker. Speech Craft Analytics managed to get a promotional piece into the FT advertising its voice stress analyzer.

Voice stress analysis is complete and utter pseudoscience. It doesn’t exist. It doesn’t work. Great results are regularly claimed and never replicated. Anyone trying to sell you voice stress analysis is a scammer.

But lie detectors are highly coveted products, so just knowing they’re a scam won’t stop anyone from buying them.

The FT article pitches this to professional investors and securities analysts, claiming it can tell if a CEO is lying on an earnings call.

How do they do this? It’s AI! So it is definitely not hand-waving, magic, or pseudoscience.

The use case they imply, though they don’t say it outright, is of course to use this near-magical gadget against your own employees. It doesn’t matter if it works or not. The article even acknowledges that this type of alleged AI use case risks becoming a vehicle for laundering racism. [FT, archive]

But consider: Everyone involved is in dire need of a leash.

For those who doubt that AI is exactly the same garbage as blockchain, here is the AI UNLEASHED SUMMIT. [AI Unleashed Summit, archive]

This great event took place in September 2023. “Exponential AI Strategies” promised to teach us how to do prompt engineering.

“Experience ultimate skill acquisition like being plugged into a Matrix-like machine!” says a person who has totally watched The Matrix.

The promotional trailer video looks like a parody as it is mostly stock footage. [YouTube]

Prompt engineering

One card game developer told PC Gamer how it paid an “AI artist” $90,000 to generate card art “because no one can match the quality he provides.” The totally existent “artist” has no social media presence. The cards are generic AI rip-offs of famous collectible card games, but with extra fingers and misplaced limbs and tails. In 2024, PC Gamer appears to have been tricked into publishing an article promoting an NFT product. [PC Gamer, archive]

Where does GPT-5 get its new training data? Honeypot sites designed to waste spam web crawlers’ time seem to be getting a ton of valuable new “real human” interaction. [NANOG]

Baldur Bjarnason: “Technology pundits keep touting the idea that no one has ever succeeded in banning ‘scientific progress’, but LLMs and generative models are not ‘progress’. They are products, and we ban products all the time.” [blog post]