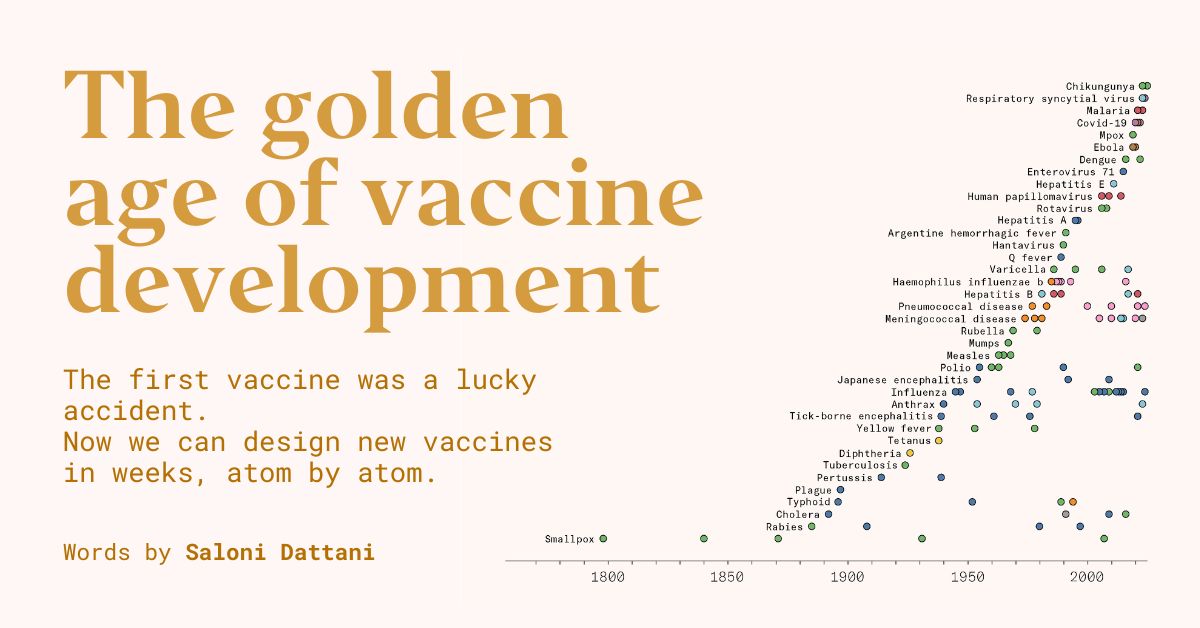

The Evolution of Vaccines: From Jenner to Today

Back in 1796, Edward Jenner created the first-ever vaccine, targeting smallpox. At that time, viruses were unknown, and nobody connected them to illness. A common belief was that Jenner’s vaccine worked by depleting the body of nutrients the disease needed to flourish. The real reason it worked was a stroke of luck: cowpox happens to provide cross-protection against smallpox. Nearly a century passed before vaccines were developed for other diseases.

There were several challenges along the way. For instance, stocks of Jenner’s vaccine frequently died out and had to be regenerated from scratch. In the 19th century, keeping the vaccine viable often meant relying on “arm-to-arm” transmission chains, passing it from one vaccinated person to the next.

Gradual improvements were made. In the 1840s, a method was developed to grow the smallpox vaccine virus more safely on calf skin. By the 1890s, scientists mixed it with glycerin to prevent spoilage. The 1940s witnessed a breakthrough in freeze-drying techniques that helped vaccines survive heat and lengthy transportation. The innovative bifurcated needle emerged in the 1960s, allowing for a quarter of the usual dose to be used, making vaccination more efficient globally. Such progress was pivotal in the ultimate eradication of smallpox.

Jenner’s 18th-century discovery changed the world, yet it also highlights how rudimentary the knowledge of that era was. Another 90 years passed before scientists worked out germ theory, and a further fifty before the invention of the electron microscope, which allowed scientists to see the smallpox virus for the first time.

Today, the landscape of vaccines is vastly different. We’ve moved from serendipitous discoveries to meticulously designed vaccines. Now, we can visualize pathogen structures down to the atomic level. This allows us to not only purify and tailor ingredients but also enhance our immune responses with adjuvants, distribute vaccines in safer formats, and produce them en masse. Real-time tracking of pathogen evolution enables immediate adjustments to vaccines for new strains.

It’s quite remarkable, actually. This era is truly a golden age for vaccine development. If we continue to invest wisely, the future could hold even more astonishing breakthroughs.

Pasteur’s Contributions and the Rise of Vaccinology

The initial smallpox vaccine was a happy accident, given that cowpox fortuitously offered protection against smallpox. Ninety years later, Louis Pasteur figured out how to replicate that success for other diseases. He had already gained fame for his work on fermentation and for the process known as pasteurization.

In the 1870s, Pasteur began studying infections in livestock, kicking off with chicken cholera. He cultivated bacteria in the lab, keeping them alive through a method of ‘serial passage,’ shifting them to fresh broth regularly and injecting them into chickens. Fresh cultures were deadly, often killing almost all the chickens within days.

In the summer of 1879, while Pasteur was absent, his assistant Émile Roux conducted further experiments. Roux recultured flasks that had been left standing for weeks, and some chickens injected with the resulting cultures unexpectedly survived. It appeared that the bacteria had weakened over time. When he then injected these same chickens with a fresh lethal strain, some survived, indicating a possible protective effect. The pair subsequently learned that exposing the bacteria to both acidity and oxygen could weaken them, leading to the second vaccine ever created.

They went on to create vaccines for animal anthrax by inactivating the bacteria chemically, and then tackled a far deadlier foe: rabies. The cause of rabies was unclear, because the virus was too small to be seen with the microscopes of the time. Still, Pasteur and Roux discovered that brain tissue from infected animals could reliably transmit rabies to other animals.

Countless trials eventually led to a successful vaccine. Roux extracted brain tissue from rabid dogs, injecting it into rabbits after drilling holes into their skulls. They went through up to 90 rabbits in sequential testing. Finally, they dried the tissue to weaken the pathogen before injecting dogs, incrementally increasing exposure to build immunity.

Feeling increasingly confident, Pasteur and Roux advanced to treating humans bitten by rabid animals, fostering antibody production in patients before symptoms could take hold. In 1885, they treated two boys with dog bites and witnessed the success of their method. One of the most lethal diseases, rabies, had become preventable thanks to the groundwork they laid.

These methods paved the way for systematic vaccine development. One approach forced a pathogen to grow in an unaccustomed environment to weaken its virulence (‘attenuation’), while another used heat or chemicals to inactivate it. In both cases the pathogen retained its structural integrity, so the immune system could recognize it on future exposure.

However, large-scale population protection remained a distant goal. Pasteur developed his vaccines by culturing pathogens in whole animals, which was tedious and susceptible to contamination. Finding a lab method to cultivate cells, rather than entire tissues or animals, became essential.

Enter Robert Koch, a bacteriologist and Pasteur’s rival, who made significant strides. While Pasteur cultivated microbes in liquid broth, Koch sought a solid medium that would allow microscopic observation. His solution was to solidify nutrient broth using gelatin and cover it with a bell jar. This eventually evolved into what we now know as the ‘Petri dish,’ thanks to his assistant Julius Petri. Together with Pasteur’s techniques, Koch’s methods catalyzed a research explosion that produced new bacterial vaccines.

Yet isolating animal cells for vaccine development posed its own challenges. Cells tended to clump together and quickly depleted their nutrients. Viruses, being obligate parasites, required living cells to replicate, which made them hard to cultivate with the methods used for bacteria.

A breakthrough came in 1907, when neuroscientist Ross Harrison created the hanging-drop method. He placed pieces of spinal cord from frog embryos into drops of their plasma, which supplied the nutrients the nerve fibers needed to thrive for weeks.

By the 1920s, scientists successfully isolated cells from tissue and began to watch them migrate across glass surfaces, transferring growth regions onto new plates. Such advancements facilitated the development of vaccines against previously challenging diseases like polio.

Additional technologies helped streamline the process, such as antibiotics, which reduced bacterial contamination, and autoclaves, which sterilized tools. Cryopreservation allowed cells to be stored for longer, and cell culture shifted away from a reliance on animal broth toward simpler media.

Vaccinology transformed into a systematic science based on Pasteur’s foundation. By the mid-20th century, vaccines for typhoid fever, diphtheria, tetanus, polio, yellow fever, and more became the norm.

Microscopy’s Influential Role

What’s fascinating about Pasteur’s techniques is that some vaccines were developed without anyone ever seeing the pathogens, relying solely on empirical testing.

While observing pathogens is not strictly necessary for vaccine development, it often helps. Take tuberculosis, for instance: its symptoms could easily be mistaken for those of other diseases. Eventually, microscopes identified the bacterium responsible, a feat that proved crucial for cultivating it for a vaccine.

Over two centuries, the resolution of microscopes improved dramatically, allowing scientists to examine not just cells, but bacteria and viruses as well, down to their protein structures and even atoms.

In the early 1800s, French anatomist Xavier Bichat classified the body into tissues, groups of similar cells, using nothing more than a hand lens. He distrusted the compound microscopes of his era, likely because many apparent discoveries turned out to be optical artifacts. Aberrations caused by poor lens quality could deceive researchers into identifying features that were merely distortions.

Improvements in microscope precision came through various innovations. In 1830, wine merchant JJ Lister formulated the idea of achromatic and aplanatic lenses, addressing both color and spherical aberrations.

These enhancements progressed alongside better fixation methods, stains for revealing structures, and equipment for slicing tissues thinly enough to allow light passage.

As the 19th century continued, scientists began observing organelles and cell division, and came to recognize cells as the fundamental units of life. Improvements in culturing techniques let them witness microorganisms infecting cells and causing disease.

Robert Koch’s discovery of the bacterium Mycobacterium tuberculosis in 1882 stands out, coming at a time when tuberculosis was claiming many lives in cities like Berlin. Before Koch, researchers had struggled to identify the bacterium responsible: they could transmit the disease in animal injection tests but failed to isolate the microbe itself.

To visualize it, Koch took lung tissue samples and applied standard stains along with ammonia to facilitate dye adhesion. This clever approach revealed the culprit behind one of humanity’s deadliest diseases.

His findings coincided with a burst of microbe research in the late 19th century, now dubbed the golden age of microbiology, resolving long-standing mysteries surrounding various ailments.

However, viruses remained elusive, invisible under microscopes and much smaller than bacteria. Unfortunately, as the 19th century closed, microscopy advancements hit a brick wall.

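The physicist Ernst Abbe showed that a microscope’s resolution depends on the wavelength of light and the light-gathering capacity of the lens (its numerical aperture). This explained why light microscopes, however much they magnified, could not resolve details much smaller than about half the wavelength of visible light.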
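Abbe’s limit is commonly written as the formula below. The symbols and the worked numbers are illustrative assumptions added here for clarity, not figures taken from the account above: d is the smallest resolvable distance, λ the wavelength of the light, n the refractive index of the medium between specimen and lens, and θ the half-angle of the cone of light the lens collects.

```latex
d = \frac{\lambda}{2\,\mathrm{NA}}, \qquad \mathrm{NA} = n \sin\theta

% Illustrative case: green light with an oil-immersion lens
\lambda = 550\ \text{nm},\quad \mathrm{NA} = 1.4
\;\Longrightarrow\;
d = \frac{550\ \text{nm}}{2 \times 1.4} \approx 196\ \text{nm}
```

Under these assumed values, anything much smaller than roughly 200 nanometres blurs together, and many viruses are only tens of nanometres across.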

It wasn’t until the 1930s that Ernst Ruska and Max Knoll created the electron microscope, which replaced light with electrons. This freed researchers from the limits of visible light and led to remarkable advances in microscopy.

Electron microscopes, albeit delicate, heralded a revolution. Improvements in sealing, luminosity, and camera quality led to immense magnification capabilities surpassing conventional microscopy.

For the first time, microbiologists could look directly at viruses and uncover their structures. However, the electron beam was itself a problem, since it damaged biological materials. Only in the 1980s, with cryo-electron microscopy, did scientists achieve precise internal views of viruses.

With advancements came better detectors and computational techniques that enabled scientists to assemble 3D views of microbes down to the atomic level.

In the case of respiratory syncytial virus (RSV), visualizing its atomic structure led to effective vaccine development, years after earlier attempts had failed. This progress pivoted around a critical surface protein, whose pre-fusion form became a prime target for vaccine design.

The Shift Toward Precision Vaccines

This RSV vaccine marks a significant shift in approach: instead of using whole viruses, it focuses on a single protein. Historically, most vaccines contained entire pathogens, but more precise formulations allow for improved safety and effectiveness.

In 1890, Emil von Behring and Kitasato Shibasaburo pioneered ‘serum therapy,’ enabling the transfer of an infected person’s serum to protect another person from the same pathogen temporarily.

This approach significantly lessened diphtheria’s deadly grip, as those infected could suffocate from a throat-clogging toxin. However, the therapy offered only short-term immunity, failing to teach recipients how to fend off future invasions.

More than three decades later, scientists developed a reliable, long-lasting vaccine for diphtheria by chemically inactivating the bacteria’s toxin while maintaining its effectiveness in eliciting immune responses. This vaccine marked the beginning of what we call a ‘subunit vaccine’—one containing only particular parts of the pathogen.

Serum therapy alerted scientists to protective factors in the blood that could shield individuals against pathogens they had already encountered: notably antibodies, which latch onto parts of pathogens called antigens.

The quest for better tools to measure and compare antibodies yielded fascinating discoveries—antibodies aren’t just broadly anti-foreign; they’re highly specific. Even minor molecular changes in an antigen can trigger vastly different immune reactions, an insight first uncovered by Karl Landsteiner during his work on blood groups.

This led researchers to ponder the source of such immense specificity in response. One early idea proposed that microbes served as templates, shaping antibodies in the process. Yet, this model became less feasible once researchers noted that a single antigen unit could spark vast populations of identical antibodies.

Eventually, the community gravitated to an alternative model: antigens are recognized by pre-existing antibodies. This concept evolved into ‘clonal selection theory,’ which holds that a white blood cell carrying a unique antibody multiplies when that antibody meets its matching antigen, and that its descendants can each release thousands of antibodies every second. The body maintains an immensely diverse repertoire of these white blood cells, continually generated after birth in anticipation of pathogens.

This specificity opened the door for vaccine development, demonstrating that only portions of a pathogen were necessary to evoke responses, thereby revolutionizing vaccine approaches once again.

One compelling case involved the vaccine for pertussis, a disease known for causing severe coughing fits in young children. While early vaccines effectively reduced the disease, they contained the whole bacterial cell, which led to rare side effects. This prompted safety concerns and lower vaccination rates, resulting in rising pertussis cases and fatalities.

In an international effort to remedy this, researchers set out to devise a safer vaccine comprising only essential antigens. Yuji and Hiroko Sato, a couple from Japan’s National Institute of Health, identified the critical antigens involved and purified them for better safety. Their findings resulted in the diphtheria, tetanus, acellular pertussis (DTaP) vaccine introduced in Japan in 1981, which eventually gained global acceptance.

This method of targeting vital antigens has gained steam, as scientists sort through pathogens to optimize subunit vaccines. While the number of specific antigens children encounter in vaccination schedules has decreased since 1900, children now receive more vaccines that are safer and more targeted.

Subunit vaccines have notable advantages: fewer contaminants, fewer side effects, and the ease of combining antigens for protection against multiple pathogens. Yet streamlining vaccines can sometimes reduce their effectiveness, as seen with acellular pertussis vaccines, whose protection may diminish after a few years.

Throughout the 20th century, immunologists uncovered strategies to restore lost potency in subunit vaccines. For instance, they discovered adjuvants, which significantly amplify immune responses. Moreover, scientists learned that the way a vaccine presents its antigens shapes how effective the resulting immunity is. Such refinements gradually allowed the strength of whole-pathogen vaccines to be replicated using purer versions.

The Genomic Revolution in Vaccinology

A groundbreaking era took shape when scientists pivoted from merely cultivating viruses to decoding them. Genome sequencing has become faster and cheaper over the last 50 years, while genetic engineering has surged in precision: researchers now have the tools to read and modify the blueprints of pathogens, allowing for rapid reengineering of vaccines tailored to fit the ever-evolving microbial landscape.

In the 1970s, decoding the sequence of a single gene was a time-consuming endeavor. Automated sequencers reduced this time, but it still involved days. The 2010s ushered in an age where scientists could read entire genomes swiftly and inexpensively, often in the same time frame as decoding a single gene. Some devices have even grown compact enough to plug into a computer like a USB stick.

Scientists can now compare countless strains to work out which mutations weaken viruses. Research on the poliovirus indicated that only a handful of mutations were needed to turn it into a non-invasive, non-paralyzing vaccine strain. Moreover, identifying genes that remain constant across multiple strains can lead to broader vaccines. Reverse and forward vaccinology have made significant strides in identifying effective vaccine targets.

Parallel to these advancements, scientists achieved key breakthroughs in gene transfer and editing. In the 1970s, a trio of biologists, including Paul Berg, pioneered methods to recombine DNA from different organisms using restriction enzymes, creating vectors, and transforming microorganisms into mass-producers of proteins.

This innovative recombinant DNA technology alleviated previous subunit vaccine challenges, which had involved painstakingly purifying proteins from viruses cultivated in cells or eggs—time-consuming, inefficient, and prone to contamination. It played a central role in the development of the first recombinant hepatitis B vaccine featuring a single critical surface protein from the virus, significantly streamlining manufacturing.

This technology also birthed the first human papillomavirus (HPV) vaccine, containing proteins that assemble into hollow ‘virus-like particles.’ These particles trick the immune system into responding as if they were real threats, preparing the body to react swiftly if it encounters actual infections.

Moreover, recombinant DNA technology allows components from various microbes to be combined into more comprehensive vaccines. For instance, the HPV vaccine Gardasil-9 incorporates proteins from nine strains of the virus, broadening protection against the strains linked to multiple cancers.

Not every protein can self-assemble easily, leading to complexities when using microbial factories. Consequently, scientists explored avenues to employ the human body to produce vaccine proteins directly. In the 1990s, attempts to create DNA vaccines for this purpose utilized plasmid DNA, although efficiency in humans turned out to be lacking.

In contrast, mRNA-based vaccines have since emerged. The mRNA can be translated by our cells’ machinery without needing to enter the cell nucleus. Development was laborious at first, but the challenges fell away step by step. Advances in 2005 reduced mRNA’s visibility to the immune system, while later innovations led to lipid nanoparticle systems that deliver mRNA safely into cells.

This cutting-edge technology enables incredibly rapid vaccine development, as it circumvents reliance on microbial factories, allowing formulations to be tailored and updated swiftly—often within weeks.

In the last 230 years, from Jenner’s initial discovery, vaccine technology has transformed tremendously. Many diseases that once plagued humanity have faded from memory. The processes for developing new vaccines are not only faster but also more straightforward. Microbes that were previously invisible to us can now be studied at an atomic level. The journey from animal cultivation to cells to individual proteins showcases incredible progression. The promise of future vaccines remains bright, displaying potential for even more monumental strides in their development.