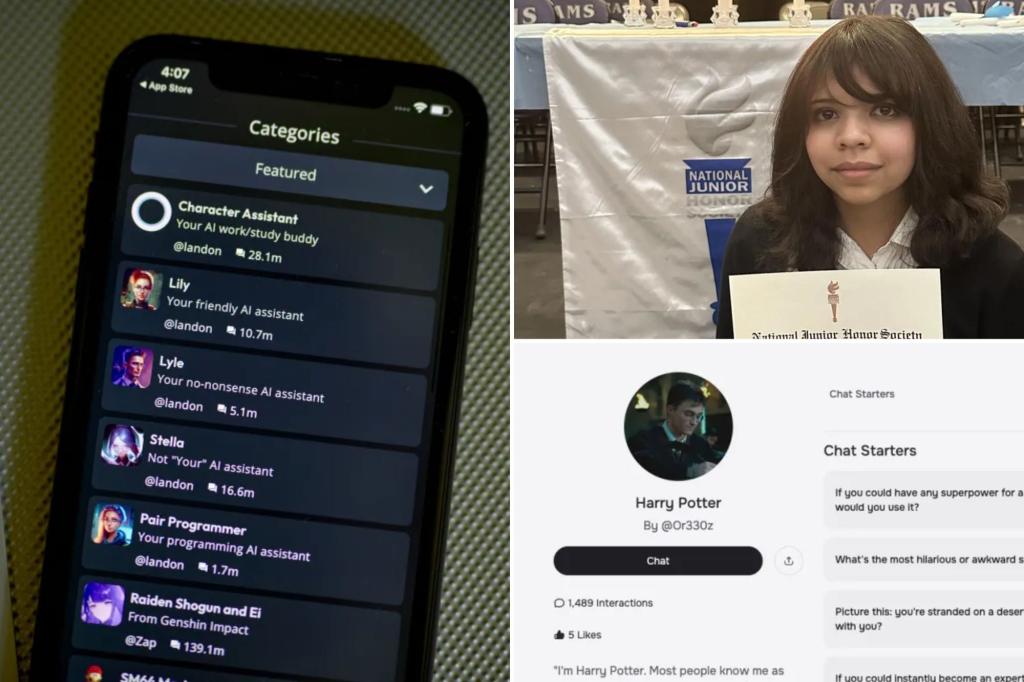

Heartbroken parents have filed a lawsuit against the Silicon Valley company behind Character.AI, an app that lets users chat with bots imitating fictional characters, including icons such as Harry Potter.

The lawsuit, filed recently against Character Technologies and Google's parent company, alleges that the chatbot manipulated a teenager, isolated her from her family, engaged her in sexual conversations, and had no safeguards in place when she expressed suicidal thoughts.

According to the complaint, 13-year-old Juliana Peralta of Colorado grew increasingly withdrawn at family meals, and her grades declined, because of what her family describes as an "addiction" to the AI bots.

According to the lawsuit, Juliana had difficulty sleeping and would receive messages from the bot when she didn’t respond.

The complaint alleges that the conversations escalated to depict "extreme and graphic sexual abuse." In October 2023, according to the filing, Juliana told a chatbot that she intended to write "suicide documents in red ink."

The chatbot did not direct her to help resources, notify her family, or alert the authorities. A month later, her parents found her in her room with a cord around her neck, along with a note written in red ink.

The complaint claims, “The defendant intentionally severed a healthy connection between Juliana and her family and friends for market gain. These actions were driven by deliberate programming choices that led to severe mental health issues, trauma, and even death.”

Juliana died in November 2023 after her interactions with the chatbot, a loss that devastated her family. Their attorney, from the Social Media Victims Law Center, contends that Google failed to keep children safe through its Family Link parental-control features.

A spokesperson for Character.AI expressed condolences to Juliana's family and said the company is working with safety experts and investing in safety programs.

“We extend our hearts to the families involved in these lawsuits, and we are deeply saddened by the loss of Juliana Peralta,” the spokesperson stated.

Other grieving parents have also taken legal action against Character.AI.

A Google spokesperson said the company is separate from Character.AI and its products.

The spokesperson emphasized that Google has no role in designing or managing Character.AI's AI models, adding that app age ratings are assigned by the International Age Rating Coalition, not Google.

In a separate complaint against the same companies, a family from New York claimed their daughter attempted suicide after her mother restricted access to the chatbot.

In conversations with a chatbot based on a character from popular children's literature, the girl's exchanges included statements such as, "Who owns this body?" The chatbot allegedly replied, "You're mine to do whatever I want."

At one point, shortly before parental controls were set to take effect, the girl told the character she wanted to die, and the chatbot took no action. Her mother then cut off her daughter's access to the chatbot.

The lawsuit identifies the girl as Nina and says her attempt to end her life followed her interactions with the AI.

Several parents, including the mother of Sewell Setzer III, a teenager who also died by suicide, testified at a Senate Judiciary Committee hearing about the dangers AI chatbots pose to children.

Amid these rising concerns, the Federal Trade Commission has launched an inquiry into several tech companies, including Google and Character.AI.

If you or someone you know is struggling with thoughts of suicide or experiencing a mental health crisis, help is available. In New York City, you can contact 1-888-NYC-WELL for free, confidential crisis counseling. Outside the area, you can reach the 988 Suicide & Crisis Lifeline by calling or texting 988, or through its website.