A former official from the Biden administration, who focused on cyber policy, expressed concerns about the US military’s ability to regulate the use of artificial intelligence by its soldiers.

Mike Oyan, who was Deputy Assistant Secretary of Defense for Cyber Policy in the Biden administration, stated that the current AI models are not suited for military purposes and could be hazardous if implemented.

“There’s probably plenty to worry about,” she noted.

On the topic of claims surrounding “AI mental illness” and lethal robots, Oyan mentioned that the military cannot just take publicly available AI systems and adapt them for military use. The risks include chatbots that suggest violent actions or targeted killings.

This risk has become a matter of vigilance for both the Department of Defense and the Department of War, Oyan insisted.

“Many discussions about AI regulations focused on ensuring that the Pentagon’s AI applications do not enable excessive lethal actions. Concerns about a ‘swarm of AI killer robots’ underline how the military is expected to safeguard us,” she remarked.

“But there are also serious worries about how the Department of Defense itself uses AI, especially in large organizations like the military where people might engage in prohibited activities. If someone inside the system commits such an act, the ramifications can be severe—not just regarding weaponry, but also concerning information leaks.”

Oyan may not be aware of it, but the Department of War is already developing internal AI systems.

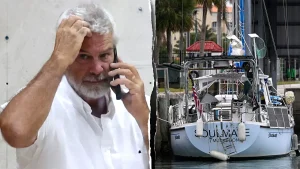

Tyler Saltzman, CEO of EdgeRunner, suggested that the War Department is not intimidated by AI and believes it’s essential for their operations.

Saltzman recently completed a trial with the War Department that involved military exercises in Colorado and Kansas. He discussed EdgeRunner AI, an offline chatbot designed to update information for ground forces.

“The War Department is aiming to reinforce its AI strategy. They’re not fearful of it,” Saltzman said, countering Oyan’s concerns.

He went on to say that it’s troubling that people lacking technological knowledge have achieved such influential positions.

In a conversation, Oyan also conveyed worries regarding operational security and the risk that malevolent actors could exploit AI tools used by the US military.

“There’s a lot to be concerned about. Information loss and breaches could have more severe repercussions,” she added.

These worries reportedly eased after Saltzman clarified that the EdgeRunner AI would operate entirely offline. He proposed an open-access model, allowing users to maintain a version without the risk of data intrusion.

“They want your data. They want your prompts. They want to learn more about you,” he commented. “They want to spy on you.”

Saltzman also highlighted ongoing collaborations, including a partnership related to technology shared with military allies globally.

“It’s crucial for the government to work alongside industry and academia to develop joint operations in this field. I appreciate what Secretary Pete Hegseth is doing to enhance the Department of War’s effectiveness,” he stated.