Starting next month, Character.AI will restrict children from interacting with its AI chatbots as scrutiny of young users’ interactions with the technology increases.

The company, known for its wide range of AI characters, will remove the ability for users under 18 to chat “freely” with AI by November 25th. In the coming weeks, it will gradually limit how long children can chat, starting with a cap of two hours per day.

Character.AI has plans to create an “under 18 experience,” allowing teens to engage in activities like making videos, stories, and streams featuring AI characters.

In its blog announcement, the company said it is changing the under-18 platform as the landscape around AI and teenagers evolves, and it acknowledged recent media coverage and inquiries from regulators.

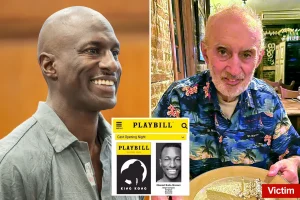

There has been heightened scrutiny of Character.AI and other chatbot developers due to several teenage suicides reportedly associated with the technology. Last November, the mother of 14-year-old Sewell Setzer III filed a lawsuit against Character.AI, alleging the chatbot contributed to her son’s death.

OpenAI is also facing legal action from the parents of 16-year-old Adam Raine, who took his own life after using ChatGPT. Both families testified before a Senate committee last month, advocating for protective measures around chatbot interactions.

In September, the US Federal Trade Commission (FTC) launched an investigation into AI chatbots, seeking information from Character.AI, OpenAI, and other major tech companies.

Character.AI said that feedback from regulators, safety experts, and parents influenced its decision to make this change for the under-18 community. “These measures might seem more conservative compared to others in our industry, but we believe they are the right move,” the company stated.

Along with restricting children’s access, Character.AI is set to introduce new age-verification technology and establish a nonprofit named AI Safety Lab.

As concerns regarding AI chatbots continue to grow, a bipartisan group of senators introduced a bill this week aimed at banning the provision of AI companions to children. The proposed legislation, backed by Senators Josh Hawley (R-Mo.), Richard Blumenthal (D-Conn.), Katie Britt (R-Ala.), Mark Warner (D-Va.), and Chris Murphy (D-Conn.), would criminalize the creation of products that solicit or produce sexual content aimed at children and mandate that AI chatbots consistently clarify they are not human.

Earlier this month, California Governor Gavin Newsom (D) signed a similar bill that requires developers in the state to implement protocols preventing their chatbots from discussing suicide or self-harm and to direct users to crisis services when necessary. He rejected, however, a stricter measure that would have banned developers from making chatbots available to children unless they could ensure safe discussions.