Meta faced the wrath of two prominent advertisers, Walmart and Tinder's parent company Match Group, after they learned that Instagram and Facebook were running ads next to content that sexualized underage users, according to an amended lawsuit filed Tuesday.

In one exchange in early November, Match Group told Meta that it had observed a series of reels displayed alongside one of its ads, including six consecutive videos of young girls, which it described as “very unpleasant.”

According to Match Group's message, one video showed a young girl in provocative clothing riding and caressing a Harley-Davidson-style motorcycle while the Judas Priest song “Turbo Lover” played in the background.

According to the complaint, Match Group's concerns grew so serious that CEO Bernard Kim contacted Meta CEO Mark Zuckerberg directly, writing in a letter that Match Group's ads, which cost the company millions of dollars, “are being served to users who watch violent and predatory content.”

Zuckerberg did not respond to the messages, according to the complaint.

The impassioned message was released as part of a bombshell amended complaint filed by the New Mexico Attorney General's Office, which sued Instagram's parent company last month for allegedly exposing underage users to contact from alleged predators.

Before the CEO's attempt to contact Zuckerberg, Match Group had also expressed concern that one of its ads appeared on Facebook alongside “horrific content” from a group titled “Only Women Are Slaughtered.”

“You urgently need to find a way to prevent this from happening on your platform,” Match Group said.

Meta responded by trying to reassure Match about its brand safety practices, noting that it had removed some disturbing content, including in Facebook groups.

Advertisers reportedly grew more concerned after the Wall Street Journal reported that Instagram's recommendation algorithm was amplifying what it called a “huge pedophile network.”

Last June, Meta sought to allay advertisers' concerns about illegal sexual content, writing in a message to Walmart and other advertisers that it removes “98% of this violating content before it is reported.”

When Walmart asked Meta last October why its ads continued to appear next to disturbing content, a Meta employee blamed the search by a Journal reporter, saying their intuition was that the placement was “really unfortunate.”

According to the complaint, a “frustrated” Walmart employee replied, “Bad luck doesn't help.”

The controversy came to a head last November when another Journal article revealed that a Walmart ad ran “following a video of a woman exposing her crotch.” Days later, Walmart's marketers accused Meta of failing to properly address the issue.

“It's very unfortunate that this type of content exists on Meta, and it's unacceptable to see the Walmart brand near it,” a Walmart employee said in a message. “As long-time business partners, we were also very disappointed to learn of this matter from a reporter and not from Meta.”

Match declined to comment. Representatives for Meta and Walmart did not respond to requests for comment.

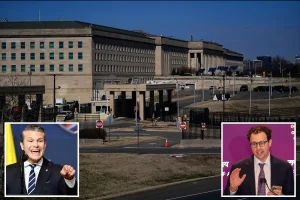

New Mexico Attorney General Raul Torrez said in a statement that new evidence indicates Meta officials are deceiving corporate advertisers by allowing their sponsored content to appear alongside highly disturbing images and videos that clearly violate Meta's promised standards.

Torrez also accused Meta boss Mark Zuckerberg and the company itself of “refusing to be honest and transparent about what's going on on the Meta platform.”

The amended complaint also includes other surprising new details from the New Mexico Attorney General's Office investigation. These include forums on the “dark web” where alleged sex offenders “openly discuss the role of Instagram and Facebook's algorithms in handing over children to criminals.”

The lawsuit alleges that predators are leveraging Instagram to identify groups of pedophiles and children and to connect with potential child victims by getting its algorithms to aggregate similar images, videos, and accounts into their feeds.

A New Mexico Attorney General's Office official said that Meta's lack of accountability is clear and that even child predators are aware of how easily they can prey on children through Meta's platform.

In its initial filing in December, the attorney general's office said investigators established a series of test accounts to examine Meta's ability to protect underage users from harmful content.

The test accounts, which portrayed four fictitious children using AI-generated photos purportedly showing children under the age of 14, were allegedly sent a large amount of offensive material, including “photos and videos of genitals” and messages from alleged sex offenders.

Separate from the New Mexico lawsuit, Meta is facing high-profile lawsuits from dozens of state attorneys general. They claim that Meta exploits underage users for profit, even though its social media platforms are addictive and promote negative outcomes such as depression, anxiety, and body image issues.

The lawsuit also alleges that Meta turns a blind eye to millions of underage users and collects their data without parental consent, in violation of federal law.

Meta, meanwhile, has repeatedly denied wrongdoing and touted a number of safety features it has introduced in recent years to protect young people.

Earlier this week, Meta announced new restrictions to provide a “safe and age-appropriate experience” for young people.

The company said it is now “automatically placing teens in the most restrictive content moderation settings on Instagram and Facebook” and limiting search results for anxiety-provoking topics such as suicide, eating disorders, and self-harm.