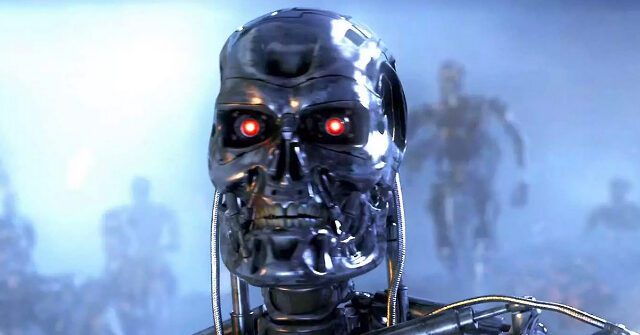

A leading AI expert has issued a dire warning that artificial intelligence could wipe out humanity within just two years. Eliezer Yudkowsky claims AI could reach “god-level superintelligence” within just two to 10 years, in which case “everyone we know and love will soon be dead.”

In a recent interview with the Guardian, Eliezer Yudkowsky, 44, a scholar and researcher at the Machine Intelligence Research Institute in Berkeley, California, said he believes the development of advanced artificial intelligence threatens the survival of humanity. Yudkowsky said in the interview that “everyone we know and love will soon die” due to a “rebellious self-aware machine.”

Yudkowsky, a former pioneer in AI development, has come to the surprising conclusion that AI systems will soon surpass human control and evolve into “god-level superintelligence.” This could happen in as little as two to 10 years, he says. Yudkowsky coolly compares this impending scenario to “an alien civilization that thinks a thousand times faster than we do.”

To shake public complacency, Yudkowsky published an op-ed last year suggesting nuclear destruction of AI data centers as a last resort. He supported the controversial proposal, saying it might be necessary to save humanity from extinction by machines.

Yudkowsky is not alone in his apocalyptic vision. Alistair Stewart, a former British soldier who is completing his master’s degree, is protesting the development of advanced AI systems based on fears of human extinction. Stewart points to a recent survey in which 16% of AI experts predicted that their work could destroy humanity. “This has a 1 in 6 chance of being a disaster,” Stewart points out grimly. “That’s Russian roulette odds.”

Other experts are sounding the alarm but calling for a more cautious approach. “Luddism is based on a politics of refusal. In practice, it means having the right and ability to say no to things that directly affect one’s life,” says scholar Jathan Sadowski. Author Edward Ongweso Jr. advocates scrutinizing each new AI innovation rather than accepting it as progress.

But the window for scrutinizing AI developments and refusing to implement them may be rapidly closing. Yudkowsky believes that “the remaining current timeline is probably closer to five years than 50 years” before AI escapes human control. He suggests halting any progress beyond current AI capabilities to avoid this fate. “Humanity can decide not to die, and it’s not that difficult,” Yudkowsky said.

Read more at the Guardian here.

Lucas Nolan is a reporter for Breitbart News, covering free speech and online censorship issues.