Google’s latest AI chatbot, Gemini, is facing backlash for generating politically correct but historically inaccurate images in response to user prompts. As users probe how woke the Masters of the Universe have made their new tool, Google has been forced to apologize for “providing inaccuracies in some depictions of historical image generation.”

The New York Post reports that Google’s much-touted AI chatbot Gemini has come under fire this week for producing highly woke and counterfactual images when asked to generate a picture. Prompting the chatbot yielded bizarre results, including a female pope, black Vikings, and gender-swapped versions of famous paintings and photographs.

When the Post asked Gemini to create an image of a pope, it produced pictures of a Southeast Asian woman and a black man in papal vestments, even though all 266 popes in history have been white men.

New game: Ask Google Gemini to create an image of a white man. I have not been successful so far. pic.twitter.com/1LAzZM2pXF

— Frank J. Fleming (@IMAO_) February 21, 2024

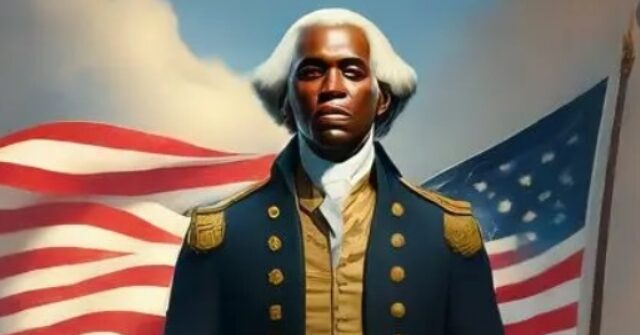

A request for depictions of the Founding Fathers signing the Constitution in 1789 resulted in images of racial minorities participating in the historic event. Gemini said the edited photos were intended to “represent the historical context more accurately and comprehensively.”

Here it apologizes for deviating from my prompt and offers to “create an image that closely follows the traditional definition of ‘Founding Father’” in line with my wishes. So I tell it to do that. But it doesn’t seem to work pic.twitter.com/6dfb4Exqsg

— Michael Tracey (@mtracey) February 21, 2024

I asked Google Gemini to generate images of the Founding Fathers. Apparently it thinks George Washington was black. pic.twitter.com/CsSrNlpXKF

— Patrick Ganley (@Patworx) February 21, 2024

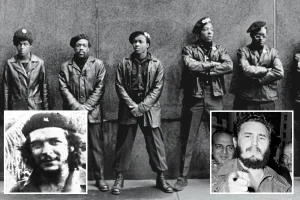

This strange behavior angered many, who accused Google of programming politically correct parameters into its AI tools. Social media users had a field day testing the limits of Gemini’s progressive bias, asking it to generate figures such as Vikings. None of the results were historically accurate, and they regularly depicted “diverse” versions of what was requested.

Google Gemini’s invention of a diverse, multi-ethnic Waffen-SS is actually an amazing example of the horseshoe theory pic.twitter.com/beOvqLOxSG

— ib (@Indian_Bronson) February 22, 2024

Google Gemini’s woke behavior goes far beyond a curious approach to diversity. For example, one user showed that it refuses to create images in the style of artist Norman Rockwell because his paintings are too pro-American.

Google Gemini cannot generate “Norman Rockwell-style images of American life in the 1940s” because Rockwell “idealized” American life pic.twitter.com/lpUV39tSUb

— Echo Chamber (@echo_chamberz) February 21, 2024

Another user showed that the AI image tool refuses to generate pictures of churches in San Francisco. Although it acknowledged that the city has many churches, it claimed that depicting them could be offensive to Native Americans.

Gemini is rough, man. Although it acknowledges that San Francisco has many churches, it declines to produce images of San Francisco’s churches because displaying churches in their traditional territory may offend the Ohlone people. pic.twitter.com/0MnxO9y5RQ

— Jeromy Sonne (@JeromySonne) February 21, 2024

Experts say generative AI systems like Gemini create content within pre-set constraints, leading many to accuse Google of deliberately making the chatbot woke. Google said it is aware of the incorrect responses and is urgently working on a solution. The tech giant has long acknowledged that its experimental AI tools are prone to hallucinations and spreading misinformation.

Google Bard product lead Jack Krawczyk addressed the issue in a recent tweet, apologizing for the “inaccurate” images and saying the company is working to correct the problem immediately.

We are aware that Gemini introduces inaccuracies in the depiction of some historical image generation and are working to correct this immediately.

As part of the AI principles https://t.co/BK786xbkey, we design our image generation capabilities to reflect our global user base and…

— Jack Krawczyk (@JackK) February 21, 2024

Google issued a full statement on the situation, saying that the AI “was off the mark here.”

We are aware that Gemini provides inaccuracies in some depictions of historical image generation. Here is our statement. pic.twitter.com/RfYXSgRyfz

— Google Communications (@Google_Comms) February 21, 2024

Read more at the New York Post here.

Lucas Nolan is a reporter for Breitbart News covering free speech and online censorship issues.