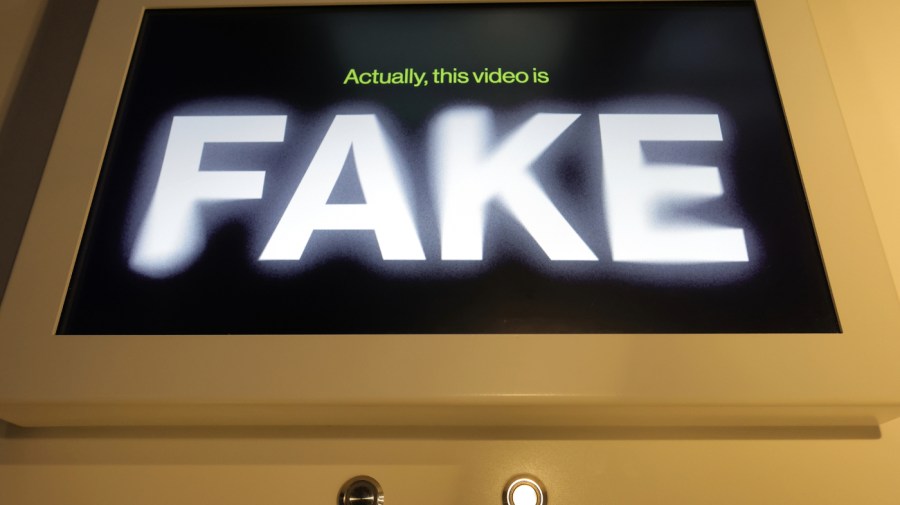

This week, former President Trump falsely claimed that images from a recent Harris rally had been faked with artificial intelligence. It was the first clear example of a major American candidate invoking deepfakes to sow uncertainty.

Trump’s comments come amid a growing wave of deepfake deception. Examples include AI-generated audio of President Biden released ahead of New Hampshire’s primary election and, probably more influential, deepfakes used to sway Slovakia’s October elections. Meanwhile, xAI has released an impressive new model that produces stunningly realistic, unfiltered images.

While AI is creating institutional uncertainty, the technology is advancing rapidly.

The challenges to trust and authenticity posed by sophisticated deepfakes should not be underestimated. The tensions deepfakes can create in elections are now well known, but few people fully appreciate how profoundly they could affect other parts of society.

For example, what would stop a criminal from presenting AI-generated security camera footage to clear his name at trial, or from shouting “AI!” when confronted with incriminating audio evidence? Basic expectations of authenticity underpin not only democratic institutions but also the fundamental rules of law.

Outside the courtroom, synthetic media is already being used to commit fraud. In 2023 alone, Deloitte estimates, generative AI enabled the theft of $12.3 billion, a figure that will almost certainly grow as capabilities improve. Deepfakes are becoming more powerful, and a tipping point in trust may be near.

Unfortunately, calling for a blanket “solution” won’t get us anywhere. There are no easy answers, and no single law will wipe away this growing problem. The technology is already here, in use, and scattered across hard drives and the internet. Instead of hoping for a clean-cut regulatory fix, what we need now is triangulation.

Fortunately, partial solutions exist. The first and most important step is to develop and deploy reliable, easy-to-use forensic techniques that provide a technical basis for establishing ground truth. Watermarking, which embeds signed information in content so that its authenticity can be verified, is a good start, but its use remains inconsistent.

Building on watermarking also requires a standardized, easy-to-use, and easily verifiable suite of content authenticity technologies that combines automated deepfake detectors, learned best practices, and contextual evidence. Thankfully, research is already underway, but as the technology continues to advance, this work needs to accelerate and be sustained over time.

To achieve this, policymakers should consider funding a broad portfolio of AI forensics research and sponsoring large-scale research challenges, recognizing that a long-term effort is needed to keep tools on pace with rapidly evolving technology.

Once the tools are in place, the next step is to ensure that institutions at all levels of society are prepared to use them consistently.

While much of the discussion about deepfakes has focused on the threat to federal electoral politics, their impact will be felt most severely by local governments, agencies, and courts that lack the organizational capacity and resources needed to scrutinize and resolve questions of authenticity. Simplicity, consistency, and clarity are key.

Federal standard-setting bodies can help by commissioning accessible standards of practice designed intentionally for local stakeholders, who may have fewer resources or less expertise. States, in turn, should fund outreach to educate officials and promote the adoption and integration of these processes and standards.

The final, and perhaps most actionable, step right now is education. In the short term, there will be a great deal of confusion, fraud, and deception driven by what Wharton professor Ethan Mollick recently called “change blindness.” Public understanding of how far generative AI has come lags well behind the state of the art.

A quick look at any internet comment section shows that many people either cannot identify obviously generated media or still trust outdated rules of thumb, such as checking for too many thumbs. The bar for deception is low.

A simple PSA can go a long way here. The Senate has already introduced the Artificial Intelligence Public Awareness and Education Campaign Act, under which the federal government would sponsor such PSA campaigns. While one-off campaigns may help, policymakers must run them on an ongoing basis and be prepared to update them so the public does not miss new changes as the technology improves.

All these steps will help. Through this triangulated approach, policymakers and civil society can seed the ground with the tools, institutions, and understanding needed to foster trust. Ultimately, though, civil society must do most of the work of creating the social norms that will guide us to a comfortable new normal.

That said, deliberate, systematic action is needed now to preserve trust on the way to that new normal.

Matthew Mittelsteadt is an engineer and research associate at the Mercatus Center at George Mason University.