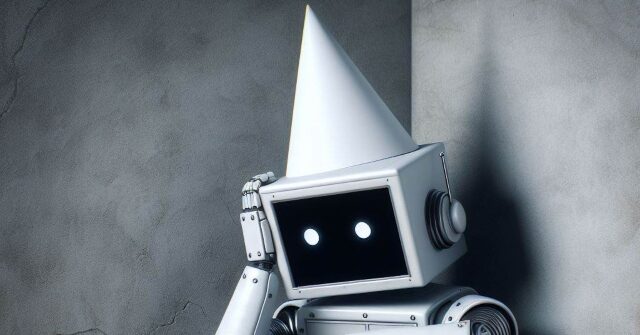

A leading “misinformation expert” is under fire for citing seemingly non-existent sources in an affidavit supporting a new Minnesota law banning some AI-generated deepfakes. Lawyers on the other side claim that the Stanford professor's use of AI to draft the legal document backfired when the system “hallucinated,” generating false references to fictitious academic papers.

The Minnesota Reformer reports that Professor Jeff Hancock, founding director of Stanford University's Social Media Lab, is facing accusations of citing fabricated sources in an affidavit supporting recently enacted Minnesota legislation that bans the use of “deepfake” technology to influence elections. The law is being challenged in federal court on First Amendment grounds by a conservative YouTuber and by Republican state Representative Mary Franson, and the case has sparked debate about the role of AI-generated content in legal matters.

Hancock, known for his research on deception and technology, submitted a 12-page expert declaration at the request of Minnesota Attorney General Keith Ellison (D). However, the plaintiffs' attorneys discovered that several academic works cited in the declaration do not appear to exist. For example, a 2023 study titled “The Effects of Deepfake Videos on Political Attitudes and Behaviors,” supposedly published in the *Journal of Information Technology and Politics*, could not be found in the journal or in any academic database. The pages referenced in the declaration contain an entirely different article.

Plaintiffs' lawyers have suggested that the citation bears the hallmarks of an “artificial intelligence (AI) ‘hallucination’” and was likely generated by a large language model such as ChatGPT. They question how this “hallucination” ended up in Hancock's declaration, arguing that it casts doubt on the document as a whole. Libertarian law professor Eugene Volokh also found that another cited study, “Deepfakes and the Illusion of Authenticity: The Cognitive Processes Behind the Acceptance of Misinformation,” does not appear to exist.

The use of AI-generated content in legal proceedings has led to several embarrassing incidents in recent years. In 2023, two New York lawyers were sanctioned by a federal judge for filing briefs that cited non-existent cases invented by ChatGPT. While some lawyers involved in past incidents have pleaded ignorance of the software's limitations and its tendency to fabricate information, the false citations are particularly concerning in Hancock's case given his expertise in technology and misinformation.

Plaintiffs' attorney Frank Bednarz noted that proponents of deepfake laws argue that, unlike other online speech, AI-generated content cannot be countered through fact-checking or education. But Bednarz believes that by exposing the AI fabrication in court, the plaintiffs are demonstrating that “the best remedy for false speech remains true speech, not censorship.”

Read more at the Minnesota Reformer here.

Lucas Nolan is a reporter for Breitbart News covering free speech and online censorship issues.