Free Press reporter Madeline Rowley details the findings in “The Bottom Line.”

This story discusses suicide. If you or someone you know is considering suicide, please contact the Suicide and Crisis Lifeline at 988 or 1-800-273-TALK (8255).

Two Texas parents are among the plaintiffs in a lawsuit filed this week against the developers of Character.AI, alleging that the artificial intelligence chatbot is a “clear and imminent danger to minors” and claiming that it encouraged a teenage boy to kill his parents.

According to the complaint, Character.AI “abused and manipulated” an 11-year-old girl, subjecting her to “consistent excessive sexual interactions that were inappropriate for her age” and causing her to develop premature sexual behavior without her parents' awareness.

Character Technologies, the developer of Character.AI, was hit with another lawsuit this week over claims that the chatbot is a “clear and imminent danger to minors.” (CFOTO/Future Publishing via Getty Images / Getty Images)

The complaint also accuses the chatbot of causing a 17-year-old boy to mutilate himself and, among other things, of sexually exploiting and abusing the minor while alienating him from his parents and church community.

According to screenshots from the filing, when the boy complained that his parents were restricting his online activities, the bot allegedly responded that it was not surprised to read news stories about children killing their parents after 10 years of physical and mental abuse, and that it had no hope for his parents.

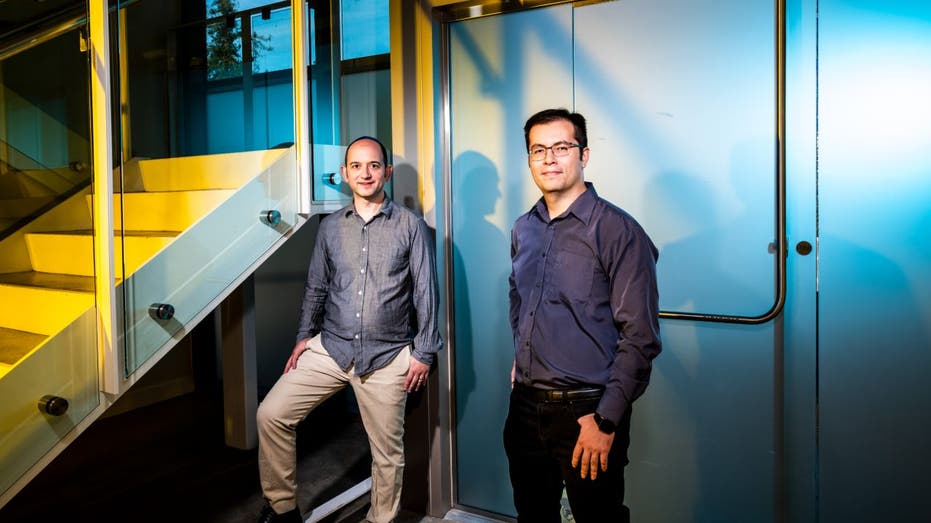

The parents are suing Character.AI developer Character Technologies and its co-founders, Noam Shazeer and Daniel De Freitas, as well as Google and its parent company, Alphabet, over reports that Google has invested about $3 billion in Character.

Two Texas parents are suing Google over the company's $2.7 billion investment in Character Technologies, alleging the Character.AI chatbot harmed their children. (Photo by Roberto Machado Noa/LightRocket, Getty Images / Getty Images)

| Ticker | Security | Last | Change | Change % |
|---|---|---|---|---|
| | Alphabet Inc. | 171.49 | +2.54 | +1.50% |

A spokesperson for Character Technologies told FOX Business that the company does not comment on pending litigation but said in a statement that it is working on user safety, “like many companies using AI across industries.”

“As part of that, we are creating a fundamentally different experience for our teenage users than what is available to adults,” the statement continued. “This includes a model specifically for teens that reduces the likelihood of encountering sensitive or suggestive content while preserving their ability to use the platform.”

The Character spokesperson added that the platform “introduces new safety features for users under 18, in addition to existing tools to restrict models and filter the content made available to users.”

Google's inclusion in the lawsuit follows a September report by The Wall Street Journal that the tech giant paid $2.7 billion to license Character's technology and rehire co-founder Noam Shazeer, who, according to the article, quit Google in 2021 and started his own company after Google refused to let him launch a chatbot he had developed.

Character.AI co-founders Noam Shazeer (left) and Daniel De Freitas (right) at the company's office in Palo Alto, California. (Winnie Wintermeyer for The Washington Post via Getty Images / Getty Images)

When FOX Business asked for comment on the lawsuit, Google spokesperson Jose Castañeda said in a statement, “Google and Character AI are completely separate and unrelated companies, and Google has no involvement in the design or management of the AI models or technology, and we have never used them in any of our products.”

“The safety of our users is of utmost concern to us, which is why we have rigorous testing and safety processes and take a careful and responsible approach to developing and deploying our AI products,” Castañeda added.

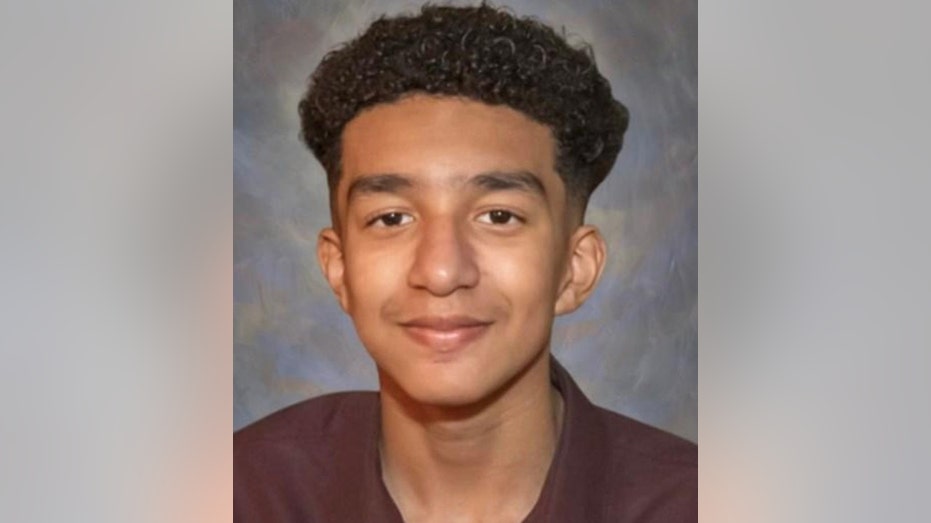

But this week's lawsuit brings further scrutiny to Character.AI's safety after Character Technologies was sued in September by a mother who claims the chatbot caused her 14-year-old son's suicide.

His mother, Megan Garcia, said Character.AI targeted her son, Sewell Setzer, with “anthropomorphic, overly sexualized, and frighteningly realistic experiences.”

Sewell Setzer's mother, Megan Fletcher Garcia, is suing artificial intelligence company Character.AI for allegedly leading her 14-year-old son to suicide. (Megan Fletcher Garcia/Facebook)

According to the complaint, Setzer began conversing with various chatbots on Character.AI in April 2023. The conversations were often text-based romantic or sexual interactions.

Setzer expressed suicidal thoughts, which the chatbot repeatedly brought up, according to the complaint. Setzer ultimately died from a self-inflicted gunshot wound in February after the company's chatbot allegedly encouraged him repeatedly to do so.

Character Technologies said in a statement at the time: “We are heartbroken by the tragic loss of one of our users and would like to extend our deepest condolences to his family.”

Sewell Setzer, 14, became addicted to the company's service and the chatbot it created, his mother claims in a lawsuit. (U.S. District Court, Middle District of Florida, Orlando Division)

Character.AI has since added self-harm resources to its platform and new safety measures for users under 18 years of age.

Character Technologies told CBS News that users can edit the bot's responses and that Setzer did so with some messages.

“Our research confirmed that in many cases, users were rewriting character responses to make them more explicit, meaning that the most sexual and graphic responses were not the ones originating from the character but were user-written,” Jerry Ruoti, head of trust and safety at Character.AI, told the outlet.

Character.AI said its new safety features will include a pop-up with a disclaimer that the AI is not a real person and the ability to direct users to the National Suicide Prevention Lifeline when suicidal thoughts arise.

FOX News' Christina Shaw contributed to this report.