Researchers in California have achieved a major breakthrough with an AI-powered system that restores natural speech to paralyzed individuals in real time, using their own voices. The system was demonstrated in clinical trial participants who are severely paralyzed and unable to speak.

Developed by teams at UC Berkeley and UC San Francisco, this technology combines a brain-computer interface (BCI) with advanced artificial intelligence (AI) to decode neural activity into audible speech.

Compared with other recent attempts to generate speech from brain signals, this new system is a major advancement.

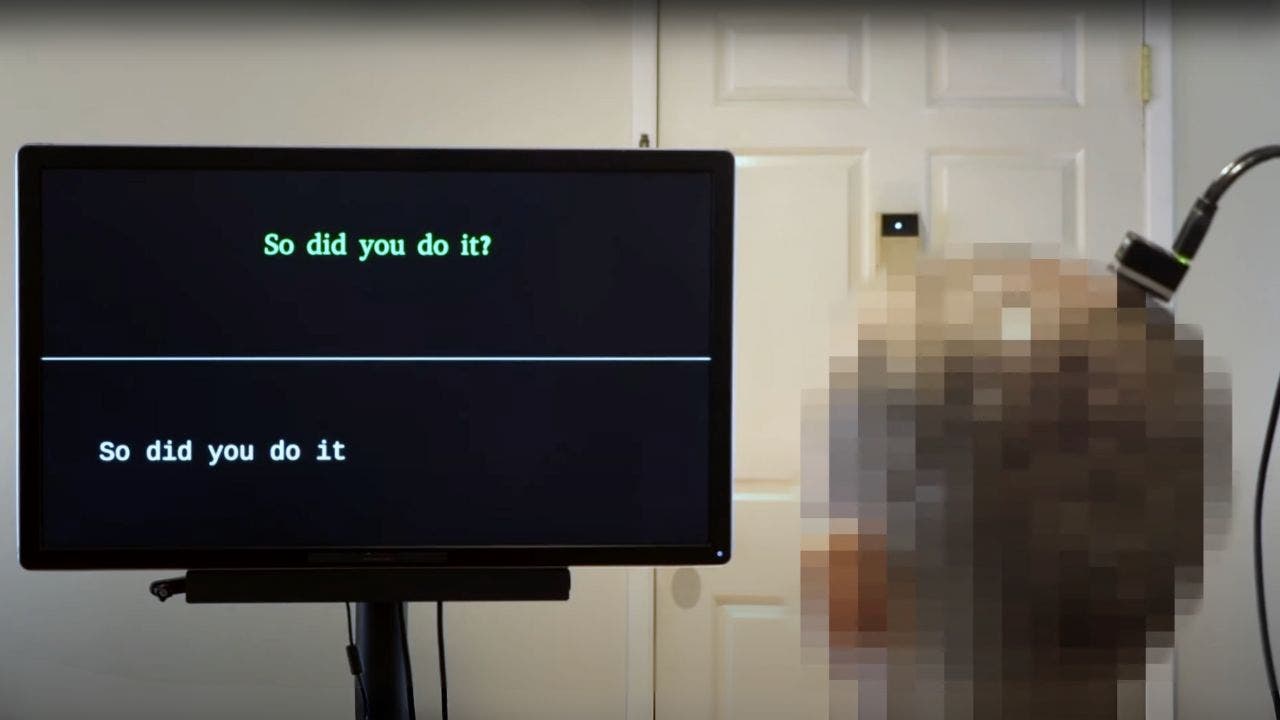

AI-equipped system (Kaylo Littlejohn, Cheol Jun Cho, et al., Nature Neuroscience 2025)

How it works

The system uses devices such as high-density electrode arrays that record neural activity directly from the brain's surface. It also works with microelectrodes that penetrate the brain's surface and with non-invasive surface electromyography sensors placed on the skin to measure muscle activity. These devices tap into the brain to measure neural activity, which the AI then learns to convert into the sound of the patient's voice.

The neuroprosthesis samples neural data from the brain's motor cortex, the area that controls speech production, and the AI decodes that data into speech. According to study co-lead author Cheol Jun Cho, the neuroprosthesis intercepts signals where the thought is translated into articulation, in the middle of motor control.
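To make "decodes that data into speech" a little more concrete, here is a minimal sketch, assuming the simplest possible decoder: a fixed linear map from one frame of neural features (one value per electrode channel) to a few acoustic features. The frame format, the weights, and the names `decode_frame` and `decode_sequence` are hypothetical illustrations. This is not the researchers' model, which the article describes only as AI-based, and it stops short of turning acoustic features into audio.

```python
from typing import List

Frame = List[float]  # hypothetical: one feature value per electrode channel


def decode_frame(frame: Frame, weights: List[List[float]], bias: List[float]) -> List[float]:
    """Map one neural frame to acoustic features: weights @ frame + bias."""
    if len(weights) != len(bias):
        raise ValueError("need one bias term per output feature")
    if any(len(row) != len(frame) for row in weights):
        raise ValueError("each weight row needs one entry per channel")
    return [sum(w * x for w, x in zip(row, frame)) + b
            for row, b in zip(weights, bias)]


def decode_sequence(frames: List[Frame], weights: List[List[float]],
                    bias: List[float]) -> List[List[float]]:
    """Decode each frame independently (a real decoder would also use context)."""
    return [decode_frame(f, weights, bias) for f in frames]


# Hypothetical example: 3 channels in, 2 acoustic features out.
W = [[0.5, 0.0, 1.0],
     [0.0, 2.0, 0.0]]
b = [0.0, 0.1]
decoded = decode_sequence([[1.0, 2.0, 3.0], [0.0, 0.0, 0.0]], W, b)
```

A linear map is used here only because it is the shortest way to show the shape of the problem: neural features go in frame by frame, and speech-related features come out.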

Key advances

- Real-time speech synthesis: The AI-based model streams intelligible audio from the brain in near real time, addressing the challenge of latency in speech neuroprostheses. This "streaming approach brings the same rapid speech decoding capacity of devices like Alexa and Siri to neuroprostheses," according to Gopala Anumanchipalli, a co-principal investigator on the study. The model decodes neural data in 80-ms increments, enabling uninterrupted use of the decoder and further increasing speed.

- Naturalistic speech: This technology aims to restore naturalistic speech and enable more fluent and expressive communication.

- Personalized voice: The AI is trained on the patient's own pre-injury voice, so the audio it produces sounds like them. When a patient has no remaining ability to vocalize, the researchers use a pre-trained text-to-speech model and the patient's pre-injury voice to fill in the missing details.

- Speed and accuracy: The system can begin decoding brain signals and outputting speech within one second of the patient attempting to speak, a significant improvement over the eight-second delay in a previous study from 2023.
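The 80-ms streaming idea in the list above can be sketched in a few lines, assuming neural feature frames arrive at a fixed rate. The 200 Hz frame rate in the usage note, the function names, and the decoder callback are hypothetical; compute time is ignored, so the numbers only show why streaming lets output start sooner, not the system's measured latency.

```python
from typing import Callable, Iterable, Iterator, List

CHUNK_MS = 80  # decoding increment reported in the article


def chunk_size(frame_rate_hz: int, chunk_ms: int = CHUNK_MS) -> int:
    """Number of feature frames in one chunk; requires an exact, positive fit."""
    frames, remainder = divmod(frame_rate_hz * chunk_ms, 1000)
    if remainder or frames == 0:
        raise ValueError("chunk length must be a whole, positive number of frames")
    return frames


def stream_decode(frames: Iterable[float], size: int,
                  decode_chunk: Callable[[List[float]], str]) -> Iterator[str]:
    """Yield decoder output every `size` frames instead of waiting for the
    whole utterance; any trailing partial chunk is decoded at the end."""
    buffer: List[float] = []
    for frame in frames:
        buffer.append(frame)
        if len(buffer) == size:
            yield decode_chunk(buffer)
            buffer = []
    if buffer:
        yield decode_chunk(buffer)


def first_output_ms(utterance_ms: int, streaming: bool, chunk_ms: int = CHUNK_MS) -> int:
    """Earliest time output could begin, ignoring compute time (an idealization)."""
    return min(chunk_ms, utterance_ms) if streaming else utterance_ms
```

For example, at an assumed 200 Hz frame rate, `chunk_size(200)` gives 16 frames per 80-ms chunk. For a hypothetical 3-second speech attempt, `first_output_ms(3000, streaming=True)` is 80, while the non-streaming case is 3000, because a non-streaming decoder must wait for the whole attempt before producing anything.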

Overcoming challenges

One key challenge was mapping neural data to speech output when the patient had no remaining ability to vocalize. The researchers overcame this by using a pre-trained text-to-speech model and the patient's pre-injury voice to fill in the missing details.

Impact and future directions

This technology can significantly improve the quality of life for people with paralysis or conditions like ALS. It allows them to communicate their needs, express complex thoughts, and connect with loved ones more naturally.

"It is exciting that the latest advances in AI are significantly accelerating BCIs for real-world use in the near future," said UCSF neurosurgeon Edward Chang.

The next steps include speeding up the AI's processing, making the output audio more expressive, and incorporating variations in tone, pitch and loudness into the synthesized speech. The researchers also aim to decode paralinguistic features from brain activity to reflect changes in tone, pitch and loudness.

Kurt's key takeaways

What's truly amazing about this AI is that it doesn't just translate brain signals into any kind of speech. It aims for natural speech, using the patient's own voice. It's like giving them their voice back, which is a game changer. It offers new hope for effective communication and renewed connections for many individuals.

What role do you think governments and regulatory bodies should play in overseeing the development and use of brain-computer interfaces? Let us know by writing to us at cyberguy.com/contact.

For more of my tech tips and security alerts, subscribe to my free CyberGuy Report Newsletter at cyberguy.com/newsletter.

Ask Kurt a question or let us know what stories you'd like us to cover.

Copyright 2025 cyberguy.com. Unauthorized reproduction is prohibited.