Attorney Represents Anthropic in AI Legal Battle with Music Publishers

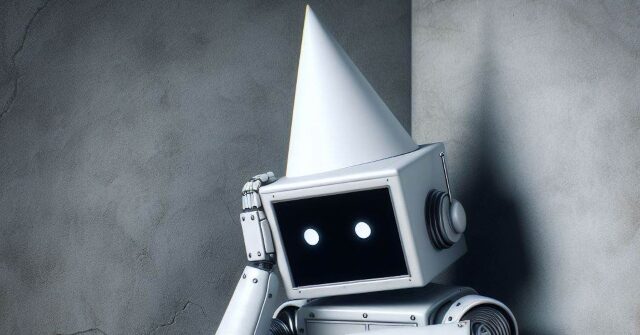

In a legal proceeding in Northern California, an attorney representing Anthropic in its dispute with music publishers has acknowledged using incorrect citations generated by the company’s Claude chatbot. The admission came in a filing made on Thursday, which said the AI system “hallucinated” the citations, producing references with erroneous titles and authors.

The disclosure was part of Anthropic’s response to accusations from Universal Music Group and other music publishers, who claim that Olivia Chen, an expert witness for Anthropic, improperly used Claude to cite non-existent articles in their suit against the AI company.

In light of these allegations, federal judge Susan van Keulen instructed Anthropic to respond to the claims about the fabricated citations. In the response submitted on Thursday, Anthropic’s legal team explained that its “manual citation check” missed the inaccuracies in Claude’s output. The team expressed regret for the oversight, calling it “an honest mistake in citation, rather than a deliberate fabrication of authority.”

The conflict between Anthropic and the music publishers is one of several recent cases in which copyright holders have sued tech companies over the alleged misuse of their intellectual property to train generative AI systems. As AI technology advances rapidly, the legal and ethical questions surrounding the use of copyrighted material to develop these tools remain complex and contentious.

The case also highlights a growing trend of lawyers integrating AI into their practices, which has at times led to problematic results. Recently, a California judge criticized two law firms for submitting fake AI-generated research in court. Similarly, Australian attorneys faced scrutiny for relying on OpenAI’s ChatGPT to draft court documents, which resulted in inaccurate citations.

Furthermore, there have been warnings regarding the dangers of misinformation generated by AI. Following a related incident, attorney Ayala was quickly removed from a case and replaced by T. Michael Morgan, Esq., who expressed significant embarrassment over the false quotes. He acknowledged the need for careful scrutiny and stressed that this situation should serve as a “cautionary tale” for both his firm and the broader legal profession.

Morgan said the idea of courts potentially relying on fictitious, AI-manufactured cases and absorbing them into common law is profoundly troubling, and he admitted that AI can be “unintentionally misused.”

The utilization of AI within the legal framework is on the rise, with a survey revealing that a notable percentage of lawyers are incorporating these technologies into their work. Nevertheless, the case at Morgan & Morgan underscores the critical need for responsible AI application and thorough verification of the information produced by such tools.

Despite these prevalent issues, the prospect of AI improving and streamlining legitimate work continues to draw significant investment interest. For instance, the startup Harvey, which is developing an AI model tailored for legal purposes, is reportedly seeking to raise over $250 million at a valuation exceeding $5 billion.