OpenAI, the parent company of the popular artificial intelligence chatbot platform ChatGPT, has changed its usage policy to remove a ban on using its technology for “military and war” purposes.

According to a report from Computer World, OpenAI's usage policy specifically prohibited the use of its technology for “weapons development, military, or warfare” before January 10 of this year, but the policy has since been updated so that only uses that cause harm to others are prohibited.

An OpenAI spokesperson told Fox News Digital: “Our policy does not allow our tools to be used to harm people, develop weapons, conduct communications surveillance, or injure others or destroy property. There are, however, national security use cases that align with our mission. For example, we are already working with DARPA to develop new cyber technologies to protect critical infrastructure and the open source software that industries depend on. It was not clear whether these beneficial use cases would have been allowed under 'military' in our previous policy. Therefore, the goal of the policy update is to provide clarity and the ability to have these discussions.”

This quiet change will allow OpenAI to work more closely with the military, but it has been divisive among company executives.


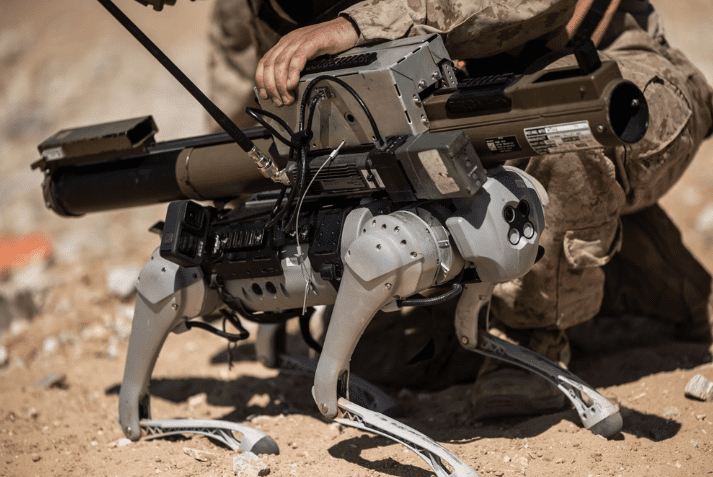

U.S. Marines from the Tactical Training and Exercise Management Group, Marine Air-Ground Task Force Training Command, and scientists from the Office of Naval Research conducted a proof-of-concept experiment with a robotic goat at the Marine Corps Air-Ground Combat Center in Twentynine Palms, California, September 9, 2023. (U.S. Marine Corps photo by Lance Corporal Justin J. Marty)

But Christopher Alexander, chief analytics officer at Pioneer Development Group, believes the division within the company stems from a misunderstanding of how the military will actually use OpenAI's technology.

“The losing faction is worried about AI becoming too powerful or out of control, and perhaps misunderstands how OpenAI will support the military,” Alexander told Fox News Digital. “The greatest potential for use of OpenAI is in routine administrative and logistics tasks, which represent significant cost savings to taxpayers. I'm glad to see that OpenAI's current leadership understands that improved DoD capabilities will lead to increased effectiveness, which will result in fewer lives being lost on the battlefield.”

As AI continues to grow, so do concerns about the dangers posed by the technology. The Computer World report pointed to one such example last May, when hundreds of technology leaders and other public figures signed an open letter warning that AI could eventually cause an extinction event and that installing guardrails to prevent this should be a priority.

“Mitigating the risk of extinction from AI should be a global priority alongside other societal-scale risks such as pandemics and nuclear war,” the letter said.

OpenAI CEO Sam Altman was one of the industry's most prominent signatories to the letter, clearly underscoring the company's long-held desire to limit AI's dangerous potential.

Sam Altman, CEO of OpenAI (Patrick T. Fallon/AFP via Getty Images)


But some experts believe such a move was inevitable for the company, noting that U.S. adversaries such as China are already looking to future battlefields where AI will play a key role.

“This is probably a confluence of events. First, the disempowerment of the nonprofit board probably tipped the balance in favor of abandoning this policy. Second, the military has applications that save lives, and it is difficult to justify not allowing their use. And finally, given the advances in AI by our adversaries, the government must have asked model providers to change their policies. We cannot have our adversaries using the technology and the United States not,” Phil Siegel, founder of the Center for Advanced Preparedness and Threat Response Simulation, told Fox News Digital.


Samuel Mangold-Lenett, staff editor at The Federalist, expressed a similar sentiment, saying that the best way to prevent a catastrophic event at the hands of an adversary like China is for the United States to build its own robust AI capabilities for military use.

“OpenAI was probably always going to work with the military. AI is a new frontier, and it's a technological development too important not to be used in defense,” Mangold-Lenett told Fox News Digital. “The federal government has made clear its intention to use AI for this purpose. CEO Sam Altman has expressed concern about the threat AI poses to humanity; our adversaries, namely China, fully intend to use AI in future military projects that will likely involve the United States.”

But that need doesn't mean AI development shouldn't be done safely, American Principles Project director John Schweppe told Fox News Digital, arguing that leaders and developers still need to be concerned about the “runaway AI problem.”


“Not only do we have to worry about adversary AI capabilities, we also have to worry about runaway AI,” Schweppe said. “As AI learns to become a killing machine and strategic warfare becomes more sophisticated, safeguards must be put in place to ensure that AI is not used against domestic assets or, in the nightmare scenario of AI going out of control, does not turn on its operators and treat them as adversaries.”

The Pentagon (Alex Wong/Getty Images)

While this sudden change is likely to cause increased division within OpenAI, some believe the company itself should be viewed with skepticism as it moves towards a potential military partnership. Jake Denton, a research fellow at the Heritage Foundation's Technology Policy Center, was among those who pointed to the company's secretive model.

“Companies like OpenAI are not guardians of morality, and their neat packaging of ethics is just a facade to appease critics,” Denton told Fox News Digital. “The adoption of advanced AI systems and tools in our nation's military is a natural evolution, but OpenAI's opaque black-box model should give pause. The company may want to profit from future defense contracts, but until its model becomes explainable, its inscrutable design should be disqualifying.”


Although the Department of Defense is receiving a growing number of partnership offers from AI companies, Denton argues that transparency should be a key hallmark of any future deals.

“As our government considers defense AI applications, we must demand transparency,” Denton said. “In matters of national security, there is no place for opaque and unaccountable systems.”