Last year marked the 40th anniversary of a near-apocalyptic event.

On September 26, 1983, Russian Lieutenant Colonel Stanislav Petrov declined to pass on to his superiors information he suspected to be false, which detailed an incoming nuclear attack from the United States. His inaction prevented a Russian retaliatory strike and the global nuclear exchange that would have followed. He thus saved billions of lives.

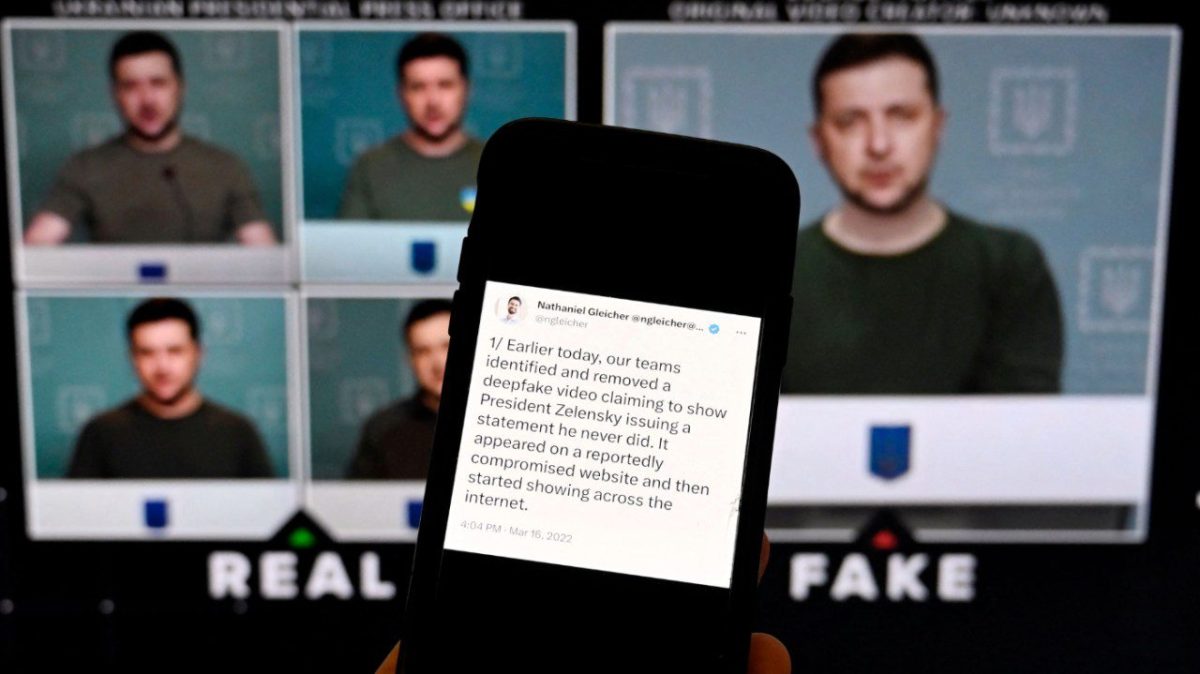

Today, the job of Petrov’s successors has become much more difficult, mainly due to rapid advances in artificial intelligence. Imagine a scenario in which Petrov receives similarly alarming news, but this time it is backed up by hyper-realistic footage of missile launches and a wealth of other audiovisual and textual material detailing nuclear launches from the United States.

It is unlikely that Mr. Petrov would make the same decision. This is the world we live in today.

Recent advances in AI are dramatically changing how we produce, distribute, and consume information. AI-driven disinformation has affected political polarization, the integrity of elections, hate speech, trust in science, and financial fraud. As half the world heads to the ballot box in 2024 and deepfakes target everyone from President Biden to Taylor Swift, the problem of misinformation is more urgent than ever.

However, the misinformation generated and spread by AI poses more than just economic and political threats. It poses a fundamental threat to national security.

Although the probability of a nuclear war triggered by false information may seem low, the stakes in a crisis are high and timelines are short, producing situations in which false data could tip the balance toward nuclear war.

The evolution of nuclear systems has increased ambiguity in crises and shortened the time available for verifying information. An intercontinental ballistic missile launched from Russia could reach the U.S. in less than 25 minutes. Submarine-launched ballistic missiles could arrive even sooner. Many modern missiles carry ambiguous payloads, making it unclear whether they are nuclear-armed. AI tools that verify content authenticity are not yet reliable enough, so this ambiguity is difficult to resolve within such a short window.

The most likely nuclear flashpoints are also arenas where the actors involved have low levels of trust in one another, whether the U.S. and the Chinese Communist Party, the U.S. and Russia, or India and Pakistan. Even if communication were established within the limited time available, leaders would have to weigh convincing disinformation against the other side's requests to stand down.

Even if the United States were able to prevent such disinformation, there is no guarantee that other nuclear-weapon states would have the technical capacity to do so. Even if the United States takes great care to reject disinformation, a single attack by another actor could trigger a global nuclear war.

National security risks extend beyond a nuclear exchange.

U.S. officials’ concern about Russia’s continued disinformation campaign regarding American military biological laboratories in Ukraine stems not only from its potential to delegitimize Ukraine’s war effort, but also from something more sinister. If a new pathogen began sickening Ukrainians in Donetsk and spread across Europe, Putin’s government could draw on the past 18 months of propaganda to shift the blame to the United States and muddy attribution of the biological attack. Attribution is already a difficult challenge; it is even more difficult in a conflict zone.

Disinformation also affects the response to such a crisis. As the coronavirus pandemic showed, the spread of misinformation reduced the effectiveness of public health measures and led to more infections and deaths. Future responses may be significantly hampered by the ability of ordinary people to fabricate persuasive false information, mirroring the quality and style of a scientific journal, about the origins and treatments of a pathogen.

In cybersecurity, spear phishing (the targeted deception of specific people using false information or false authority) has similarly proliferated with the advent of advanced generative AI systems. With state-of-the-art AI systems, more actors, even those without much technical expertise, can craft a believable narrative about their position and demands and extract information from unsuspecting victims.

The same tactics deployed in financial fraud have also been used against officials in key government positions. Some of these efforts were successful. As AI systems continue to evolve, the barrier to carrying out such attacks may fall, and the attacks may become more effective and more frequent.

It is clear that AI-powered disinformation is a fundamental risk to safety and security. A central strategy for mitigating this threat must start at the source. The most powerful systems produced by a small number of high-tech companies must be vetted for the risk of such disinformation before they are developed and deployed. Systems exhibiting the potential for such harm should be prevented from release until safeguards are in place to eliminate these risks.

Such a strategy is not only necessary to protect our democracy and economy; it is critical to national security and the safety of all Americans.

Hamza Chowdhury is a U.S. policy and national security expert at the Future of Life Institute.

Copyright 2024 Nexstar Media Inc. All rights reserved. This material may not be published, broadcast, rewritten, or redistributed.