The federal government is investigating Tesla's “Full Self-Driving” software following multiple crashes, some fatal, that are believed to have been caused by the system.

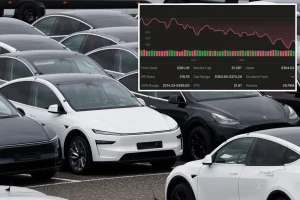

The National Highway Traffic Safety Administration announced Friday that it is opening an investigation into 2.4 million Tesla vehicles equipped with the company's Full Self-Driving software after four reported crashes.

U.S. auto safety regulators said they are opening a preliminary evaluation after receiving four reports of crashes in which FSD was engaged during periods of reduced roadway visibility, including sun glare, fog and airborne dust.

In one crash, a Tesla struck and killed a pedestrian, and another crash under these conditions resulted in a reported injury, NHTSA said.

The probe covers 2016-2024 Model S and X vehicles, 2017-2024 Model 3, 2020-2024 Model Y, and 2023-2024 Cybertruck vehicles equipped with the optional system.

A preliminary evaluation is the first step before the agency can seek a recall, which it may do if it determines that the vehicles pose an unreasonable risk to safety.

Tesla says on its website that its “full self-driving” software for on-road vehicles requires active supervision from the driver and does not make the vehicle autonomous.

NHTSA is reviewing the ability of FSD's engineering controls to “detect and appropriately respond to reduced roadway visibility conditions.”

The agency will investigate whether other similar FSD crashes have occurred in conditions of reduced roadway visibility and whether Tesla has updated or modified its FSD system in ways that could affect its performance in those conditions.

“This review will assess Tesla's assessment of the timing, purpose, and functionality of such updates, as well as their safety impact,” NHTSA said.

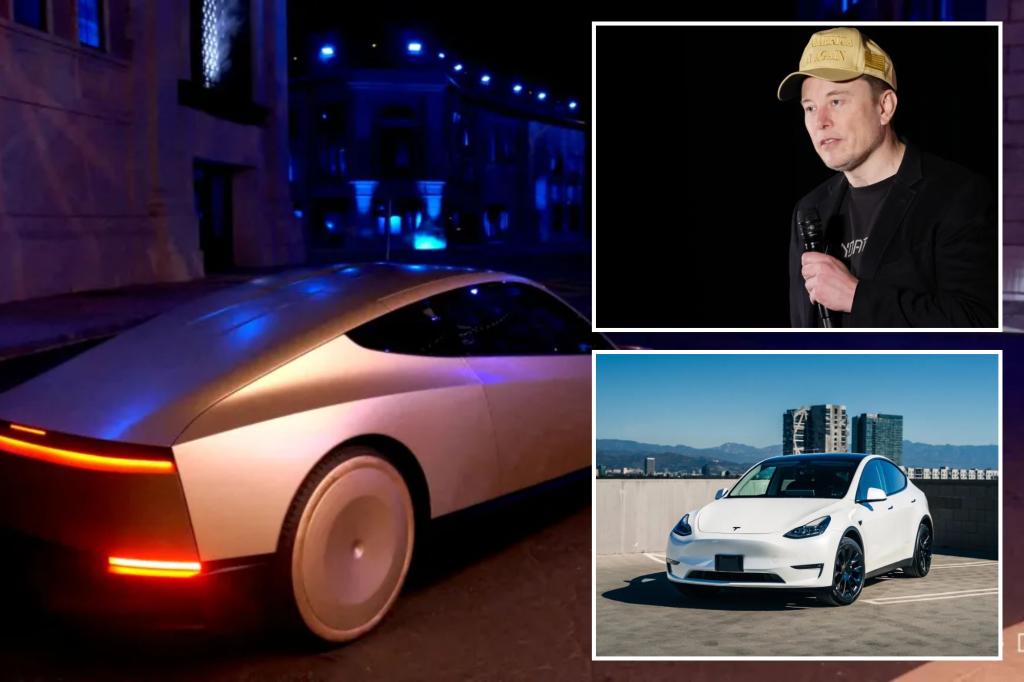

Tesla CEO Elon Musk is trying to shift the company's focus to self-driving technology and robotaxis amid competition and weak demand in the auto business.

The company did not respond to requests for comment. The company's shares were down 0.5% before the bell.

Last week, Musk unveiled a concept for Tesla's two-seat, two-door “Cybercab” robotaxi, which uses cameras and artificial intelligence to help navigate roads and has no steering wheel or pedals. Tesla would need NHTSA approval to deploy vehicles without human controls.

Tesla's FSD technology has been in development for years and aims for a high degree of automation, allowing the vehicle to handle most driving tasks without human intervention.

But it has come under legal scrutiny over at least two fatal crashes involving the technology, including one in the Seattle area in April in which a Tesla Model S in Full Self-Driving mode struck and killed a 28-year-old motorcyclist.

Some industry experts say Tesla's “camera-only” approach to partial and full self-driving systems could pose problems in low-visibility conditions because the vehicles are not equipped with backup sensors.

“Weather conditions can affect the camera's ability to see things, and I think the regulatory environment will certainly have an impact,” said Jeff Schuster, vice president of GlobalData.

“This could be one of the major hurdles in what I would call a near-term launch of this technology and product.”

Tesla's rivals that operate robotaxis rely on expensive sensors such as lidar and radar to detect the environment in which they drive.

In December, Tesla recalled more than 2 million vehicles in the U.S. to install new safety features in its advanced driver assistance system, Autopilot. NHTSA is still investigating whether that recall is adequate.

With Post wires