An artificial intelligence startup that recently secured a whopping $14 billion investment from Meta has come under scrutiny for its surprisingly lax security practices.

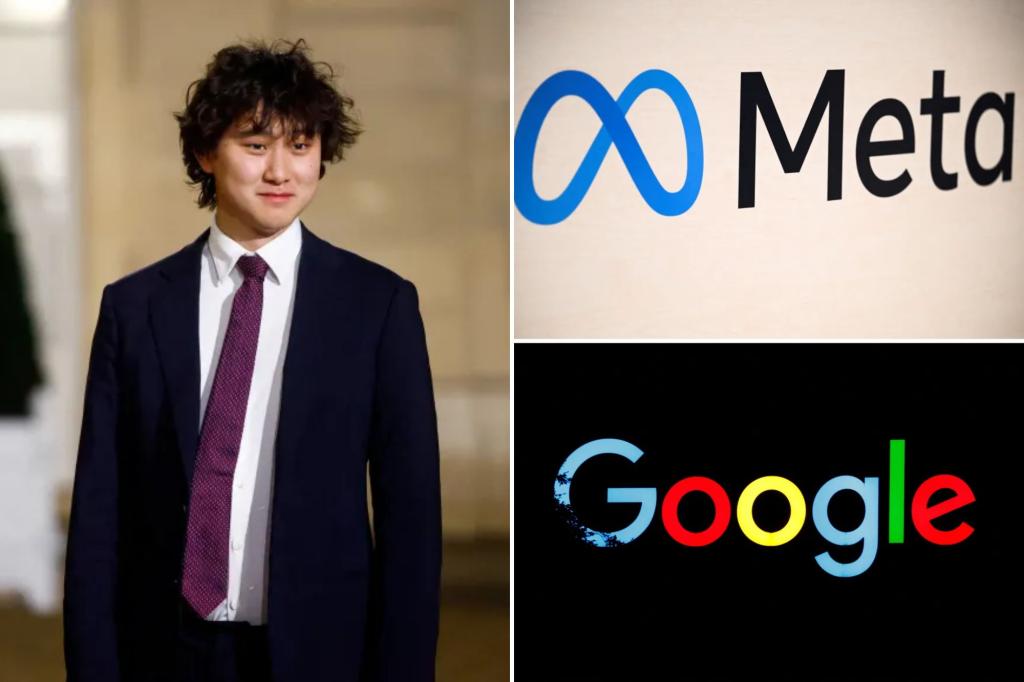

Earlier this month, news broke that Meta had purchased a 49% stake in Scale AI for around $14.3 billion, with CEO Alexandr Wang stepping in to oversee a new “Superintelligence” lab.

This astonishing price tag indicates that Meta views Wang and his company as pivotal to advancing the social media giant's AI ambitions.

Yet, according to Business Insider, Scale AI has been rather careless with its work for high-profile clients, leaving sensitive project material and confidential details, such as Google Docs containing email addresses and payment information, accessible to anyone with the right link.

A spokesperson for the AI firm stated, “We are conducting a thorough investigation and have disabled the ability for users to publish documents from Scale-controlled systems.”

They also reiterated their commitment to maintaining strong protections for sensitive information and continuously improving their protocols.

Cybersecurity experts have noted there’s currently no evidence that the public documents have led to data breaches, although the exposure could still leave the company vulnerable to hacks.

Requests for comment from Scale AI, Google, and xAI went unanswered, and Meta declined to comment.

Five current and former Scale AI contractors told BI that the use of Google Docs is increasingly routine within the company.

One contractor remarked, “The whole Google Docs system has always seemed very janky.”

BI discovered thousands of pages of project documents across 85 Google Docs, detailing sensitive AI work, including how Google used OpenAI’s ChatGPT to improve its own chatbots.

At least seven Google files labeled “confidential” contained suggestions for enhancing the chatbot formerly known as Bard.

Furthermore, the public Google Docs featured information on Elon Musk’s “Project Xylophone,” including training materials with 700 conversation prompts aimed at refining AI chatbot responses.

There was also a document marked “confidential” related to training AI products using audio clips to differentiate between effective and ineffective speech prompts.

These confidential projects often went by codenames; however, several Scale AI contractors noted that identifying which client they were working for is still fairly simple.

Some documents associated with codenamed projects mistakenly included the client’s logo, such as presentations that bore the Google mark.

When AI products are involved, contractors mentioned that chatbots can sometimes inadvertently reveal client identities when questioned.

Additionally, BI reported uncovering a publicly accessible Google spreadsheet that contained the names and private email addresses of thousands of employees.

One spreadsheet was bluntly titled “Good and Bad People,” categorizing numerous employees as “high quality” or “fraud,” BI noted. Another document flagged workers exhibiting “suspect behavior.”

Other public documents tracked payments made to specific contractors, along with detailed notes on wage disputes and discrepancies.