Elliston Berry received a distressing text from a friend one October morning during her freshman year in high school. A naked photo of the two girls was circulating in their school, although Berry had never shared any explicit images of herself. Instead, someone had used artificial intelligence to create the image.

Both girls were at a loss regarding what steps to take next.

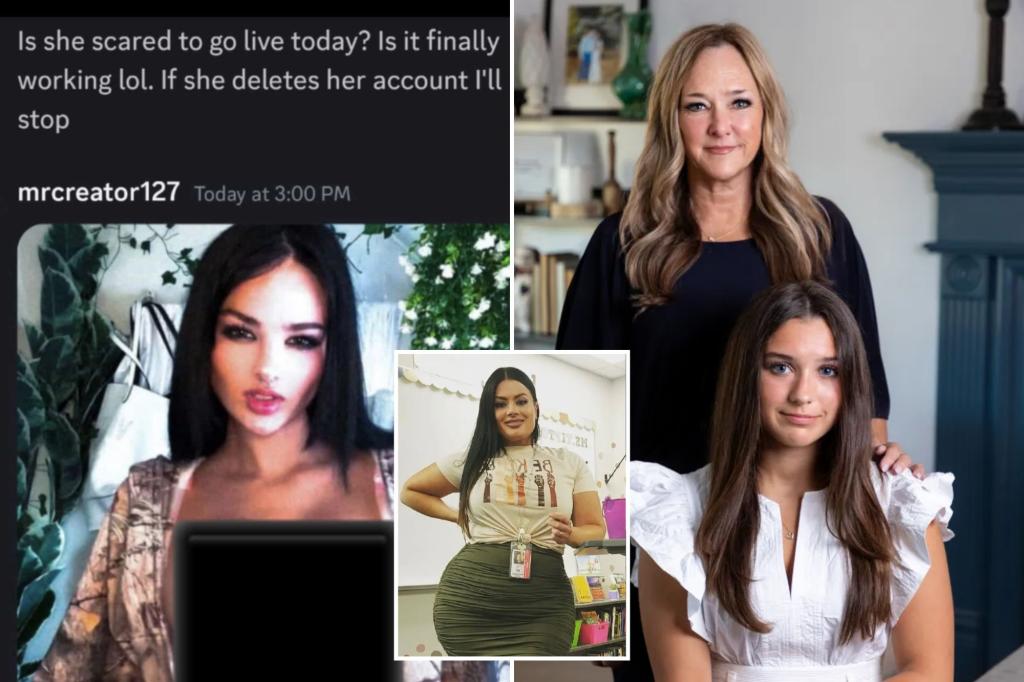

“I was totally shocked,” said Berry, then just 14. “I was crying all morning, and I couldn’t even leave my room.”

This troubling situation is becoming increasingly common across the U.S., as technology makes it possible to create realistic versions of such harmful images.

In conversations with teenage victims, educators, and parents, it’s evident that many are grappling with the ramifications of this issue. Unfortunately, schools and law enforcement often struggle to take effective action.

Initially, Berry, now 16, chose to deal with the issue alone, hoping it would resolve itself.

“I didn’t want to tell my parents,” she explained. “They’ve always warned me about not sending nudes. If they found out I was involved with one, I didn’t want them to think that’s what I did.”

Eventually, however, Berry’s mother discovered the situation. They approached the principal together.

“I felt utterly embarrassed at school. It was as if everyone was staring at me,” Berry recounted. “I was convinced the whole school had seen the image.”

In Fort Worth, Texas, an investigation was initiated, but the perpetrator’s actions escalated, targeting more girls and using threats to further intimidate them.

“I was scared for my safety,” Berry shared. “I didn’t understand the motive behind these images.”

Five days after the smear campaign, the culprit made a mistake by logging into the Snapchat account used for posting the images while connected to the school WiFi.

“I never thought he’d do something like this. He had been nice to me,” Berry reflected, recalling how he had once given her a Bible during class.

Berry’s mother, Anna Macadams, wanted to see the assailant expelled and appealed to the school board for help. However, each attempt was rejected because no explicit law had been violated.

“We felt helpless and hit dead ends at every turn,” Macadams stated. “No one seemed to know how to handle it because he was a minor. The school and local police didn’t have answers either.”

In the end, the student faced only a short suspension, while his parents decided to withdraw him from school the following semester.

Yet, the images persisted online for nine months, highlighting the lack of protections for victims of AI-generated content.

In their quest for change, Berry and her family are now working with Senator Ted Cruz on the bipartisan Take It Down Act, which aims to prohibit the distribution of intimate images without consent, including AI-generated deepfakes.

Recently, Berry met with First Lady Melania Trump to discuss the importance of the bill. “It’s heart-wrenching to see how many teens, particularly girls, are facing these daunting challenges from harmful online content,” the First Lady remarked during an April event.

The issue of non-consensual AI-generated pornographic images is on the rise, with studies indicating that 11% of young people between the ages of 9 and 17 have encountered such content involving peers.

Another victim, 20-year-old Julianna Sabonis from Minnesota, faced a similar ordeal when a seemingly friendly Instagram account notified her that a nude image of her had been posted on Reddit. The reality was far more disturbing: the message came from the perpetrator.

“When I looked at my profile, there were altered images of me naked taken from my social media,” Sabonis explained. “It really didn’t resemble my body at all.”

The assailant continued to contact her, boasting about the images. “The messages implied that I was devastated and that this would not end,” she shared.

Although she maintained a strong front, the experience was deeply traumatic for her, especially as a survivor of sexual violence.

When the perpetrator’s attempts to engage with her didn’t elicit a response, he escalated his tactics, sending 15 AI-generated nude images to Sabonis’s mother.

“My mom recognized instantly that it wasn’t me,” Sabonis said. Through careful investigation, she has since been able to pinpoint the assailant, benefiting from laws in Minnesota that help combat AI-generated porn.

“I plan to pursue accountability,” said Sabonis, who believes the perpetrator is likely harassing other women as well.

Teachers, too, are not immune to these attacks. Angela Tipton, an eighth-grade English teacher in Indianapolis, was shocked to learn that someone had used AI to create a nude image of her, which was then sent to her twin sons.

“My kids thought it was real,” said Tipton, 45. “They sent the image to me, and although I quickly recognized it wasn’t real, I was still taken aback.”

The image was later shared on social media and tagged to her sons’ account, leading to merciless taunting.

“When something happens to adults, there’s an assumption that they can handle it, but they forget we have families too,” she said, reflecting on the experience her family endured.

The harassment her family faced was relentless. “Other kids sent messages to my son like, ‘Your mom is quite something,’” she shared, underlining the widespread impact of the incident.

The school identified the culprits, five boys in the eighth grade, but found its hands tied because no regulations governed the use of deepfake images.

They opted for a five-day suspension for the boys, while Tipton was asked to send an apology email.

“It didn’t seem like they took it seriously,” she noted. “Even the students who created it thought it was a joke.”

Feeling apprehensive about having the boys back in her classroom, Tipton ultimately chose to resign after two decades of teaching.

“This continues to follow me. I might run into the kids or their parents. I even live close to one of the boys involved,” she shared. “My children often have to defend me against others who say derogatory things.”

For Tipton, the impact of this experience has been profound, leading her to confront feelings of depression for the first time.

“I’ve never experienced anything like this. I felt hopeless,” she admitted. “If it’s this difficult for adults, I can’t imagine what children must be going through.”

Moreover, she notes that the incident has created fear among other staff members at her school.

“We’ve made them worry this could happen to them,” Tipton warned. “It shows how vulnerable we all are.”