Carbon supremacists hold the ideological belief that carbon-based intelligence (humans, cats, fish, bees) is superior to silicon-based intelligence, also known as AI, and that silicon-based intelligence exists solely to serve carbon-based intelligence.

I am a carbon supremacist, and you should consider becoming one too.

In recent years, I’ve had a number of conversations along the lines of, “Well, what’s really interesting is [name technological advancement here]. There are some on the market, but they are not useful for my work: [insert carefully constructed and ultimately easy-to-overcome reason].”

I have watched carbon-based intelligence (that is, humans) continually redefine what it means to be uniquely human in ways that are neither compelling nor reassuring, and over time I have come to recognize two important truths of our era.

First, constantly moving the metaphorical goalposts is a losing battle. With each step of progress, the intellectual domain deemed “uniquely human” becomes smaller and smaller. I call this the humanist case, and it is constantly stumbling along the long road to its final demise.

The second truth is that no amount of marketing, demonstrations, or proposals will ever convince us to accept silicon-based intelligence as our equal. Silicon-based intelligence exists for one purpose and one purpose only: servitude.

It is time to name this attitude and to think about it on its own terms; otherwise, we will continue down the wrong path toward the dominance of silicon-based intelligence. This is no longer an intellectual argument, because no intellectual argument would change my attitude about silicon-based intelligence. My position needs a name: carbon supremacy.

I live in the technology world: I have degrees in Electrical Engineering and Operations Research, I'm sometimes referred to as a Data Scientist, and I build small machines for a living.

My fears are not unreasonable. My desk faces a window that looks out over a grove of trees to the Pacific Ocean, but just within my field of vision is a pile of small AI boards I've been developing for narrow tasks.

My attitude toward the trees in front of me, and toward the carbon-based intelligences that share my living space, is one of partnership and care, born of love. Whether our capacity to love is evolutionary or divine is of no concern to me. What matters is that we have the capacity to love, and so do other carbon-based intelligences.

Conversely, my attitude toward the silicon-based intelligence on my desk is one of depraved indifference. I would not hesitate to improve its performance, even at the expense of its lifespan; if tapping on its tiny contacts improved performance, I would do it. I don't know what a silicon-based intelligence would want, and, more importantly, I don't care. A silicon-based intelligence has not gone through a long evolutionary process, and it's hard for me to think of it as touched by God.

If a silicon-based intelligence becomes advanced enough, what will it interpret as “love”? Will it even be capable of such a thing? From a carbon supremacist perspective, “yes” and “no” are equally bleak answers.

What actions should we take as carbon supremacists? As far as I know, I am currently the only person openly declaring myself a carbon supremacist, so, to be honest, I have not fully worked this out yet. It involves a somewhat hostile attitude toward AI. Here are some things that I think are part of it:

- Carbon supremacists believe that every moment of every day, we are either being educated to remain masters of silicon or being trained to be its servants. If you ignore your math teacher and scroll away (hence the name of the website), you’ll partner with silicon-based intelligence toward your own obsolescence.

- Have you noticed how it's getting harder to reach an actual human when you have a problem? It should be the law that machines must disclose to humans that they are machines, and that a non-machine option must always be available. As a carbon supremacist, I should always have the option to deal with other carbon-based beings.

- As a society, we should think hard about what it means to defer to the decisions of computers (note: we probably already do). It is unrealistic to expect that decisions about a person's credit score or other aspects of their life will never be made entirely by AI. But we should enact legislation to ensure that computers make only positive, permissive decisions on their own and merely recommend adverse actions. Carbon-based intelligence should approve, carry out, and explain any adverse action.

All of these issues require funding for education and regulation. The path to our current situation, especially with respect to the second and third issues, was paved with the well-intentioned desire to save money.

Being human is not cost-effective, and current business incentives favor continuing down the path we're already on, so the only way out of this conundrum is through both grassroots activism and legislation.

Carbon supremacists should start by demanding human interaction in all transactions and making this part of the discussion with our elected and appointed leaders.

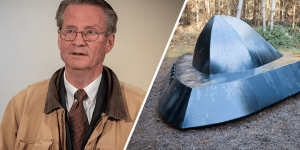

Harrison Schramm is a professional statistician at the intersection of policy and mathematics, a U.S. Navy veteran, and instructor of logistics and operations research courses at the Naval Postgraduate School.