The moment many have dreaded has arrived. US troops are now stationed near Gaza, tasked with maintaining a fragile peace between two nations that have a long history of conflict.

One misstep could spark a new wave of violence. This time, though, the lives at stake could be those of the troops who bravely serve far from home.

Working to resolve the latest chapter in the Israeli-Palestinian conflict—and the immense suffering it has caused for both sides—is becoming necessary, albeit risky. While we may have to put our troops in harm’s way now, we should strive to change that for the future.

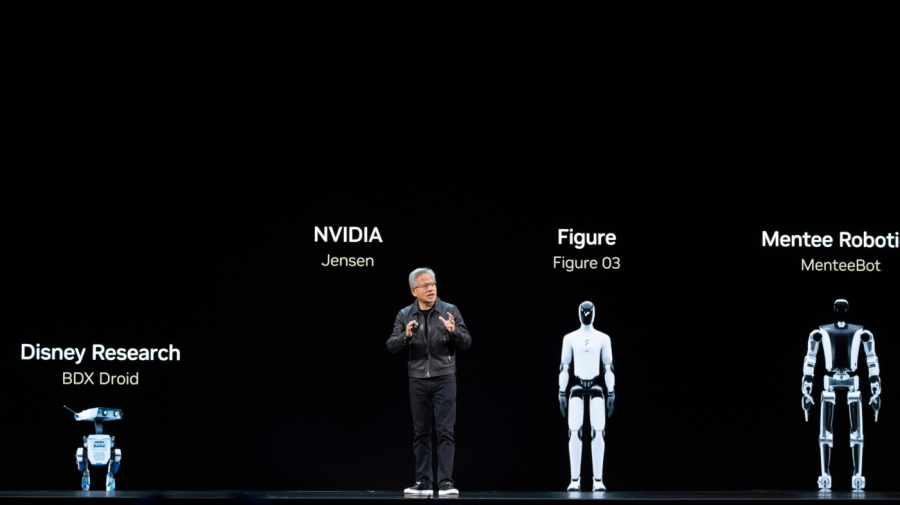

What if, instead of sending in human soldiers, we could use humanoid drones?

It might sound outlandish, but hear me out. These drones could be powered by advanced, interoperable artificial intelligence. It might feel like something straight out of science fiction, but it’s increasingly within our reach.

We have the capability to deploy robots in conflict areas, not just to protect civilians, but to facilitate communication and stabilize the situation on the ground. These systems could serve as defenders of humanitarian efforts, deterring violence without any weapons at all.

We’re on the brink of an era where AI is not just a tool for businesses or the military, but could also play a vital role in civil society. The pressing question remains: Will we develop ethical systems that genuinely protect people, or will we allow regimes with malicious motives to take the lead?

To be clear, our adversaries are already harnessing this technology for harmful purposes. Russia has used drone swarms to strike at Ukraine’s infrastructure, while China is incorporating AI into surveillance systems that support oppressive governance.

On the flip side, we and our allies seem hesitant. Our old doctrines still focus on traditional deterrence, which often means putting soldiers on the ground or imposing sanctions.

The real challenge today isn’t merely relocating troops but innovating in machine coordination. The global balance of power could pivot based on how we manage AI governance.

From Ukraine to Gaza, the same dilemmas are ever-present. Civilians remain trapped between opposing forces, while aid organizations struggle to provide necessary support. Yet, the technological solutions to these issues already exist. Autonomous systems can secure ceasefire zones, safeguard evacuation paths, deliver relief, identify incoming threats, and help create spaces where humans can move safely.

This isn’t just a dream; it’s a crucial and strategic necessity. Western democracies need to find ways to deter aggressors without putting lives at unnecessary risk.

This future could revolve around what we’re calling the Omni City. It’s a concept that envisions a democratic, integrated infrastructure where civil AI is merged into transportation, healthcare, energy, and defense systems. It’s not about surveillance; it’s focused on saving lives and ensuring peacekeepers can do their jobs effectively.

The United States has a pivotal role to play. It must set standards for cooperation, ethical data usage, and autonomous deterrence. AI-equipped drones and humanoid protectors could be employed in crisis regions like Gaza to maintain humanitarian corridors and enforce ceasefires under United Nations oversight.

These technologies should not be viewed as instruments of war; rather, they can act as facilitators of peace, striving for accountability through understanding rather than through violence.

This framework could extend well beyond conflict zones. Domestically, we could utilize these systems for rapid responses to natural disasters like floods or wildfires, ensuring that coordinated efforts save lives.

If the nuclear age was about preventing mutually assured destruction, then the era of civil AI must be about preserving lives.

People’s views on this matter are often shaped by portrayals in films like “The Terminator.” Skeptics argue that machines lack moral judgment and there’s a risk they could become unmanageable. While these concerns are valid, doing nothing also brings significant risks.

Neglecting to establish ethical guidelines for AI deployment could leave these decisions to authoritarian regimes already employing such technologies for repression. The real question isn’t whether or not we should use AI, but rather how we can make sure it serves humanity effectively.

The emerging “space race” is less about capitalism versus communism, and more about the battle between authoritarianism and civic responsibility in AI development. The nations that excel in creating ethical, interoperable systems will redefine the moral landscape of technology.

Up to now, President Trump has resisted calls for collaborative efforts with international partners in this venture. Nevertheless, the moment has come for the United States and its allies to establish an Omni City and deploy peacekeepers that protect human lives rather than replace them.

The era of citizen-driven AI is approaching. The real question is: will we take the lead, or will we just follow where it takes us?