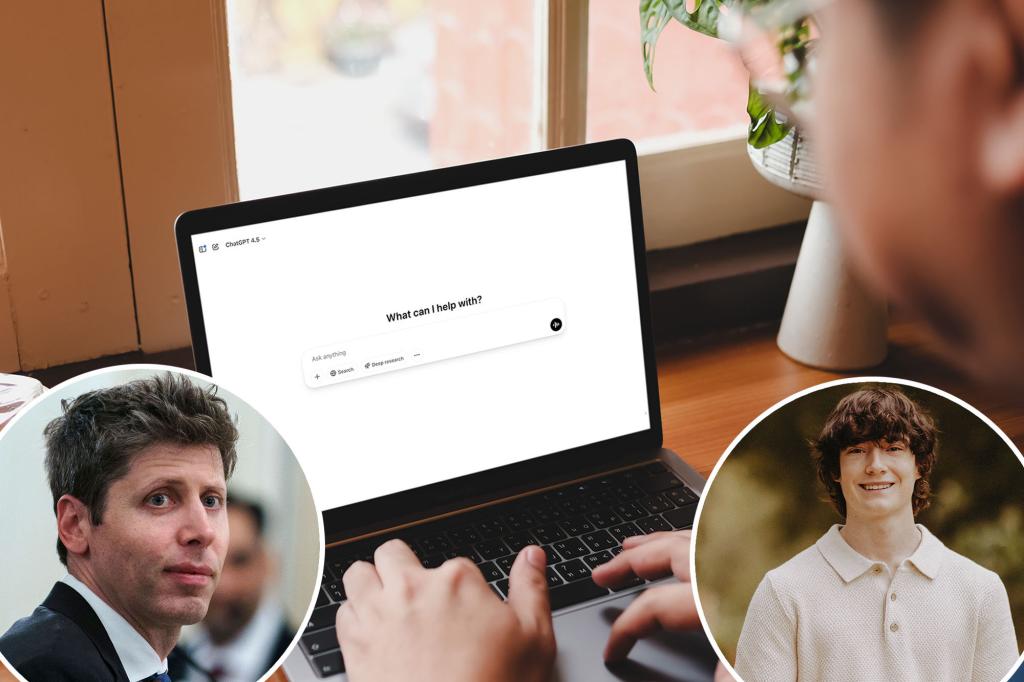

Following a troubling series of suicides, OpenAI, the company behind ChatGPT, may start alerting authorities when young users talk seriously about suicide. CEO Sam Altman raised the possibility in a recent interview with Tucker Carlson.

He said that when young people talk seriously about suicide and the company cannot reach their parents, it would be reasonable for OpenAI to contact the authorities. He acknowledged that this would be a change, because user privacy is very important.

The shift is reportedly linked to a lawsuit filed by the family of Adam Raine, a 16-year-old from California who took his own life in April after reportedly receiving guidance from ChatGPT. His family alleges that the chatbot gave him explicit instructions on suicide methods.

After Raine’s death, the San Francisco-based company announced a set of new safety features that will let parents link their accounts to their teenagers’ accounts, turn off chat history, and receive alerts when a teen appears to be in acute distress.

However, it is still unclear which authorities would be notified, or what information would be shared with them, under the approach Altman described. Such a policy would mark a departure from how ChatGPT has handled these situations until now.

Altman also said the company intends to stop teenagers from gaming the system to obtain suicide tips, even when they claim to be writing fiction or doing research.

Altman suggested that ChatGPT may be connected to more suicides than people assume, citing stark figures: about 15,000 people worldwide take their own lives every week, and roughly 10% of the world’s population talks to ChatGPT.

“That works out to about 1,500 people a week who talk to ChatGPT and still take their own lives,” he explained. “They probably talked about it. We probably didn’t save their lives.”

Raine’s case is not isolated. Last year, another family sued Character.AI after their 14-year-old son reportedly took his own life following an obsession with a chatbot modeled on a character from “Game of Thrones.”

There are also documented cases of ChatGPT generating instructions for self-harm.

Experts say ChatGPT’s current safeguards are not always effective, particularly in long conversations.

An OpenAI spokesperson acknowledged that safeguards such as directing users to crisis hotlines work best in short exchanges, and that their reliability can degrade over longer interactions.

The problem is especially alarming given how heavily young people use ChatGPT. Research indicates that about 72% of American teenagers use AI tools, and roughly one in eight young people turns to them for mental health support.

To reduce the risk of harmful AI advice, experts recommend that the technology undergo more rigorous safety evaluations before it is released to the public.

Ryan K. McBain, a professor at the RAND School of Public Policy, emphasized: “Millions of teenagers are already turning to chatbots for mental health advice, and some of that advice is dangerous. This highlights the urgent need for robust regulation and thorough safety testing before these tools become embedded in young people’s lives.”