AI’s Rapid Take-Off: A Misguided Fascination

People in the tech industry often get caught up in ideas about sudden, monumental change, especially when it comes to AI. The idea of a “rapid take-off” holds that once machines can improve themselves, they will set off an “intelligence explosion,” with each improvement making the next one easier. It imagines a single turning point, sometimes called the singularity, at which machine intelligence suddenly escapes human control. The story is dramatic, almost apocalyptic: a world in which we, as humans, become irrelevant.

It’s a compelling narrative, full of excitement and dread. But let’s be honest: it is more myth than reality. It rests on a flawed understanding of intelligence, of technology, and of how the world actually works.

In practice, the world adapts, and predicted apocalypses keep getting postponed. Technology integrates into our lives more smoothly than these tales suggest. The image of a super-intelligent entity breaking free clashes with the physical and institutional realities we actually face. Intelligence is not a single number that can simply be turned up; it is a process bound to the constraints of its environment.

When we obsess over sudden leaps in capability, we reveal a kind of historical short-sightedness. Each new “revolutionary” technology is portrayed as an unprecedented event. History tells a different story. The innovations that truly transformed society didn’t pop up out of nowhere; they emerged slowly and messily over decades. The printing press, for instance, did not spread knowledge overnight; its influence grew gradually as printed material made the exchange of information cheaper and more reliable. Similarly, the steam engine took generations of refinement before it became a pivotal technology.

Every new technology seems to trigger the same cycle: moral outcry, a barrage of alarmist predictions, and then… normalization. We shouldn’t assume that this time will be any different.

The fantasy of a rapid take-off is tidy in a way reality never is. Real-world constraints, from thermodynamics to unruly material conditions, don’t simply disappear. A mind, no matter how advanced, cannot just wish an upgrade into existence.

Consider the concrete obstacles. Any advance will hit tangible limits. Computation is bounded by physics, down to the speed at which signals can travel, and every upgrade demands energy and materials supplied through complex global networks that cannot be summoned with a line of code. Even if an AI designs a better chip, that design still has to be physically fabricated, which ties it to the slow, intricate realities of manufacturing and logistics.

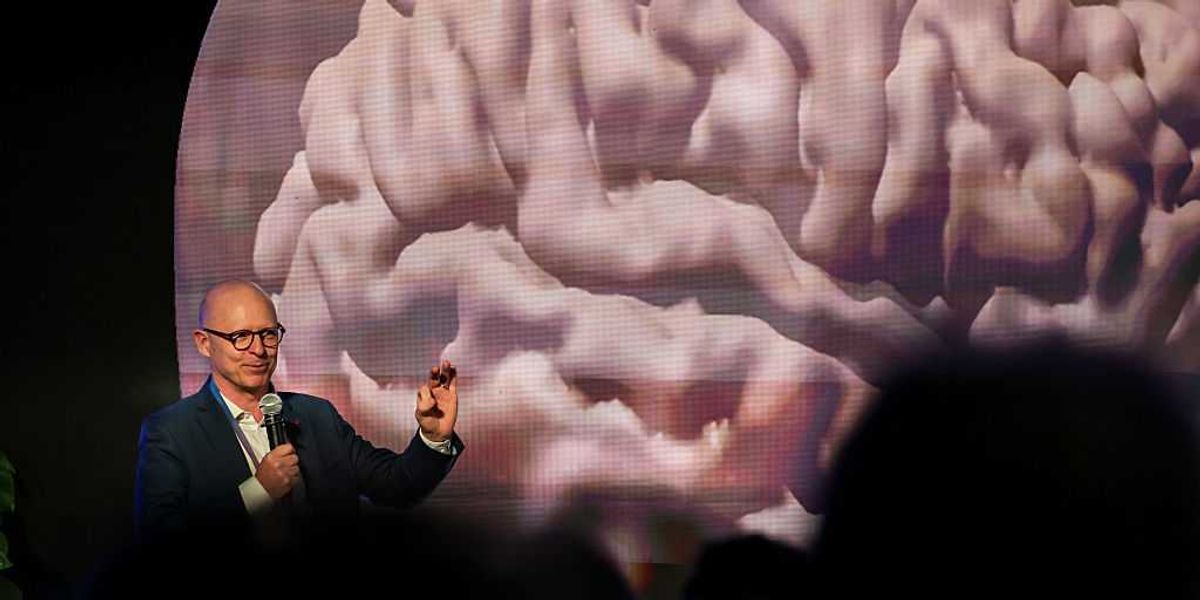

The intellectual obstacles are just as daunting. The notion of an “intelligence explosion” presumes that each problem solved naturally yields better reasoning, which then solves the next problem faster. This is a misconception. Many hard problems cannot be solved by computation alone, and good reasoning is not enough on its own either. When the cost of a problem grows exponentially with its size, for example, even a vastly faster solver can handle only slightly larger instances. Solutions often demand significant energy and time.

We already have a system for recursive self-improvement: it’s called civilization, and it is driven by human collaboration. Its progress has been steady and gradual, not explosive. Once the easier problems are solved, diminishing returns set in. There is no clear evidence that AI, however capable, can escape this pattern.

At the core of the rapid take-off argument is the belief that intelligence is a single, general quantity. Recent progress in AI suggests otherwise. We haven’t created a single, all-powerful intelligence; what we’ve developed are specialized, potent tools. Take AlphaGo: its remarkable success at Go did not by itself translate into breakthroughs in medical research. Large language models excel in their domain, but their abilities come from enormous datasets and long, costly training runs, not sudden bursts of enlightenment.

Our future may not involve a monolithic supermind at all, but an ecosystem of specialized AI systems for language, vision, physics, and more, managed by human users.

Framing AI development as an imminent catastrophe reduces decades of intricate social challenges to a simple horror story. It lets us avoid the more complicated and necessary work of managing that development. Progress will come in stages, through institutional adjustments, economic shifts, and the practical management of tools. The divine machine, that grand fantasy, will not arrive. Reality will remain a complex, physical landscape shaped by human nature, as it always has been.