Job Seekers Challenge AI Hiring Tools in Lawsuit

A group of job seekers has filed a lawsuit arguing that artificial intelligence (AI) tools used in hiring should be subject to disclosure requirements like those imposed on credit reporting agencies. Just as credit agencies must let consumers dispute incorrect information, the lawsuit asserts, applicants should be able to correct the outputs of AI screening software.

The lawsuit, filed in Contra Costa County Superior Court in California, aims to impose regulations on AI employment screening systems under the same consumer protection laws that govern credit agencies. Legal experts anticipate that challenges like this one concerning AI-driven hiring practices will become more frequent.

The target of the lawsuit is Eightfold AI, a company based in Santa Clara that offers screening technology to employers. The complaint states that Eightfold has developed a vast database with information on over 1 billion workers worldwide, which includes more than 1 million job titles and skills sourced from platforms like LinkedIn. Its software assesses job candidates by matching their qualifications to job requirements and assigns each a score ranging from 1 to 5.

One of the plaintiffs, Erin Kistler, has a background in computer science and extensive experience in the tech field. Despite her credentials, she has struggled in her job search over the past year. Records show that only 0.3 percent of her thousands of job applications led to follow-up calls or interviews. Many of these applications went through Eightfold’s system. Kistler expressed frustration at the lack of transparency and said she believes she has a right to know what information is being collected and shared with prospective employers.

The legal argument rests on the Fair Credit Reporting Act, enacted by Congress in 1970, which requires credit agencies to disclose information to consumers and gives consumers a means to dispute inaccuracies. Although commonly associated with credit reporting, the law’s scope extends beyond financial services to consumer reports more broadly, which encompass information about personal characteristics used to determine eligibility for various purposes, including employment.

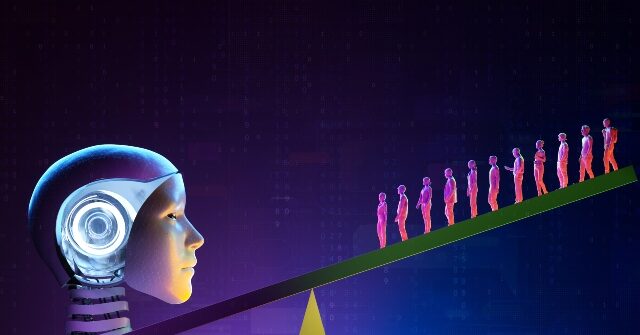

Job seekers describe the AI selection process as an “algorithmic gatekeeper” that prevents candidates from ever reaching human recruiters while offering no feedback about how scores are calculated. Without access to this information, applicants argue, they have no way to identify or correct potential errors in their evaluations.

This lawsuit is one of several now emerging, and legal experts predict a rise in challenges to AI hiring tools. Some lawsuits focus on alleged breaches of anti-discrimination laws. In a notable 2023 case, a lawsuit against Workday claimed that its selection system discriminated against older applicants and those with disabilities.

Judge Rita F. Lin denied Workday’s attempt to dismiss the case, finding that the plaintiffs had presented reasonable evidence that the company’s algorithm discriminated against applicants for reasons unrelated to qualifications. Notably, one applicant received a rejection letter just moments after submitting an application. In May, a judge granted preliminary approval for the case to proceed as a class action, potentially representing millions of rejected candidates. Workday maintains that the claims are unfounded and says its AI tools do not focus on protected characteristics like race, age, or disability.

The regulatory landscape on these matters has shifted from one administration to the next. In 2024, the Consumer Financial Protection Bureau issued guidance indicating that documents and scores developed for recruitment should fall under the Fair Credit Reporting Act, which would designate the vendors behind them as consumer reporting agencies.

Jenny Yang, who chaired the Equal Employment Opportunity Commission during the Obama administration and now represents the plaintiffs, noted that the commission began examining algorithmic hiring systems more than ten years ago. The commission recognized that these technologies are reshaping the hiring process, often leaving applicants with overnight rejections and no explanation.