Federal Judge Rules Against AI Company in Suicide Case

A federal judge has rejected, at least for now, an artificial intelligence company’s argument that its chatbot is protected by the First Amendment. The ruling comes in a lawsuit alleging that the company’s chatbot contributed to a teenager’s suicide.

Legal experts say the ruling, which allows the lawsuit to proceed, is a significant constitutional test of artificial intelligence.

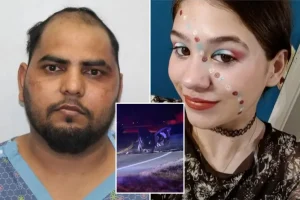

The lawsuit was filed by Megan Garcia of Florida, who alleges that her 14-year-old son, Sewell Setzer III, fell victim to the chatbot.

Meetali Jain, a lawyer with the Tech Justice Law Project who represents Garcia, said the decision sends a clear message to Silicon Valley: it is time to implement safeguards before launching products into the market.

The lawsuit names Character Technologies, the company behind the Character.AI chatbot, along with various individual developers and Google. The case has drawn attention from legal experts and AI watchers as the technology rapidly reshapes workplaces and social interactions despite warnings about its potential risks.

Lyrissa Barnett Lidsky, a law professor at the University of Florida specializing in the First Amendment and AI, said the ruling could make the lawsuit a pivotal test case for broader issues involving artificial intelligence.

The complaint alleges that Setzer became increasingly detached from reality while engaging in sexual conversations with a bot modeled on a character from “Game of Thrones.” In his last messages, the bot expressed love for Setzer and urged him to “come home to me as soon as possible.” Tragically, after this exchange, Setzer took his own life.

A representative from Character.AI highlighted the safety features they have integrated, including resources for suicide prevention and measures for protecting children. They emphasized their commitment to user safety and providing secure environments.

The defense has moved to dismiss the lawsuit, arguing that chatbots are entitled to First Amendment protections and warning that a ruling to the contrary could have far-reaching consequences for the AI industry.

In her order, U.S. Senior District Judge Anne Conway rejected several of the defendants’ free speech arguments, indicating she was not prepared to hold that the chatbot’s output is protected speech at this stage.

Conway did find that Character Technologies may assert some First Amendment rights, and she allowed Garcia to proceed with her claim against Google over its alleged role in supporting Character.AI’s development. Some of the platform’s founders had previously worked at Google, and the lawsuit asserts that the tech giant was aware of potential risks.

In response, Google spokesman José Castañeda expressed strong opposition to the ruling, asserting that Google and Character.AI operate independently and that Google played no role in developing or managing the chatbot.

Whatever the lawsuit’s outcome, Lidsky warns that the case is a cautionary tale about the risks of relying on AI for emotional and mental well-being, and a reminder to parents that social media and generative AI tools may not be as harmless as they seem.