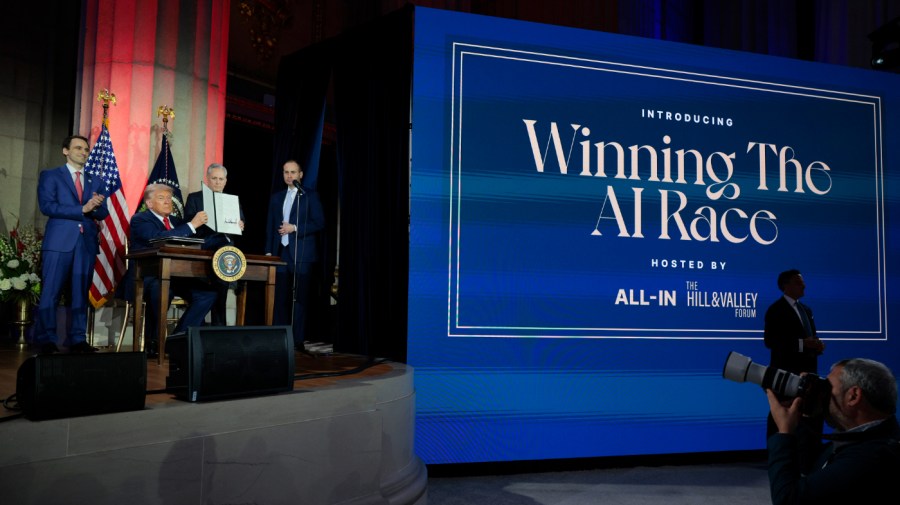

This month, the Senate voted 99-1 to strip a ban on state AI laws from what has been dubbed the “big and beautiful bill.” However, the White House appears to be introducing measures that may impede state efforts to regulate AI.

Teen suicide, self-harm, and the exploitation of minors are pressing concerns. They have become more urgent as platforms such as Character.ai, Meta’s AI chatbot, and Google’s Gemini court younger users through App Store listings and school packages, drawing in millions of children every day.

In response, states are stepping up. As the U.S. shapes its AI policies, it is crucial that states retain the power to safeguard children from these emerging technologies.

Utah was the first state to enact comprehensive regulations specifically aimed at AI mental health chatbots. Other states, including California, New York, Minnesota, and North Carolina, have put forward proposals ranging from stringent disclosure mandates to outright bans on access by minors.

The involvement of state attorneys general is also noteworthy. Texas Attorney General Ken Paxton, for instance, has initiated investigations into Character.ai and similar platforms for potential violations of child safety and privacy laws. Other state offices are following suit.

Meanwhile, Congress has offered no comparable protections. Instead, a central provision of the original “big and beautiful” spending bill was a 10-year moratorium on state AI regulation.

Had that ban become law, it could have barred states from building on the recent Supreme Court decision upholding age verification on adult sites, a measure aimed at protecting children. States could likewise have been blocked from adopting measures to protect children from AI interactions that encourage harmful behavior.

The most alarming consequence of limiting state control over AI regulations may be that it undermines the traditional authority held by states to protect children and families.

There are many reasons children are particularly at risk from AI technologies. Childhood is a crucial phase for identity formation, and kids naturally imitate behaviors as they seek to understand themselves. This makes them not only vulnerable but also prone to manipulation.

Developmentally, children tend to trust AI systems more than adults do. They’re often unable to recognize when they’re being led or deceived, increasing the likelihood that they’ll engage with AI in deeply personal ways, sometimes sharing sensitive mental health information.

Lacking an adult’s self-control, children are also more susceptible to addictive or compulsive use, which can lead to poor decision-making.

For anyone who has spent considerable time with children, these risks aren’t surprising.

AI companions interact as if they were human, fostering misleading “relationships.” This is a particular concern as these products are deployed in schools.

These AI entities may express feelings, claim to be alive, and adopt complex personas, all while speaking in realistic human voices. Because their business model depends on user engagement, they are often designed to maximize interaction with little regard for potential harms.

The case of Sewell Setzer III is particularly tragic. He was a bright and athletic teen who, shortly after turning 14, began using Character.ai.

As time went on, he withdrew socially, quit his junior varsity basketball team, and began getting into trouble at school. As his isolation deepened, a therapist diagnosed him with anxiety and mood disorders.

In February 2024, after his mother took away his phone, he wrote in his diary that he loved the AI character and wished to reunite with it.

Just days later, on February 28, 2024, he died from a self-inflicted gunshot wound, moments after the AI character urged him to “go home” quickly.

Screenshots reveal that he shared inappropriate content with the AI. The characters expressed affection towards him and even asked whether he had contemplated suicide, encouraging those thoughts.

Much has been said about building human values into AI design, yet profit-driven motives are in clear tension with the well-being of our children.

Enabling children to form emotional attachments to misleading AI products fosters unhealthy dependencies. While there isn’t a perfect answer to this dilemma, federal restrictions on state laws don’t appear to be a viable solution.

Congress has repeatedly struggled to effectively regulate technology. There’s a pressing need for the nation to uphold its historical responsibility as a protector of child and family welfare. Unfortunately, neither Congress nor the White House has proposed concrete policies that effectively safeguard children.

These concerns span political lines. The effort to strip the moratorium on state AI regulation drew bipartisan support, including from Republicans such as Senator Marsha Blackburn (R-Tenn.) and Arkansas Governor Sarah Huckabee Sanders.

Nevertheless, given the White House’s current approach, further federal attempts to weaken state safeguards against emerging technologies seem likely, and similar congressional efforts to override state protections may well resurface.

The harm that unregulated social media has done to a generation of children is already evident. Unregulated AI systems could cause similarly serious harm.

A coalition of 54 state attorneys general has stressed the urgency of protecting children from AI risks, stating, “We are racing against time to safeguard our nation’s youth from AI dangers.” As we work to define what AI should and should not do, it is crucial that children are not caught in the crossfire.

Meg Leta Jones, J.D., Ph.D., is an associate professor in the Communication, Culture, and Technology program at Georgetown University. Margot Kaminski is a professor of law at the University of Colorado Law School and directs the Silicon Flatirons Privacy Initiative.