Even though this particular company seems more earnest about safety and public welfare than other leading players in the AI field, problems keep emerging. Major AI companies have already been implicated in serious copyright infringement, such as using thousands of copyrighted books without permission. There is also the troubling matter of millions of physical books being destroyed for AI training purposes. Upcoming court decisions will clarify the penalties for these violations.

This matters because Anthropic, one of a handful of companies expected to drive major advances over the next year, is viewed as a key player. Yet, like many firms in this space, its lofty words about ethical behavior haven't necessarily translated into meaningful action.

Interestingly, Anthropic’s language model has been found to generate inaccurate information and to make unfounded accusations that users were “cheating,” based on dubious feedback.

Anthropic, founded by a group that split off from OpenAI, has raised significant funds while promoting itself as a champion of human safety and “secure AI.” Its strategy appears to blend elements of nonprofit and public corporate structures, possibly echoing Elon Musk’s original vision for OpenAI. Still, given the transformative potential of the technology in question, the company’s early performance leaves much to be desired.

From assertions of safety to unsettling findings

Anthropic’s strategy focuses largely on enterprise customers and B2B partnerships. It has formed alliances with major tech companies, including Google and Amazon Web Services. Its product lineup, particularly Claude, aims to win a share of the government market. This year, it introduced Claude Gov through the Fed Start Program, intending to make significant inroads in the public sector.

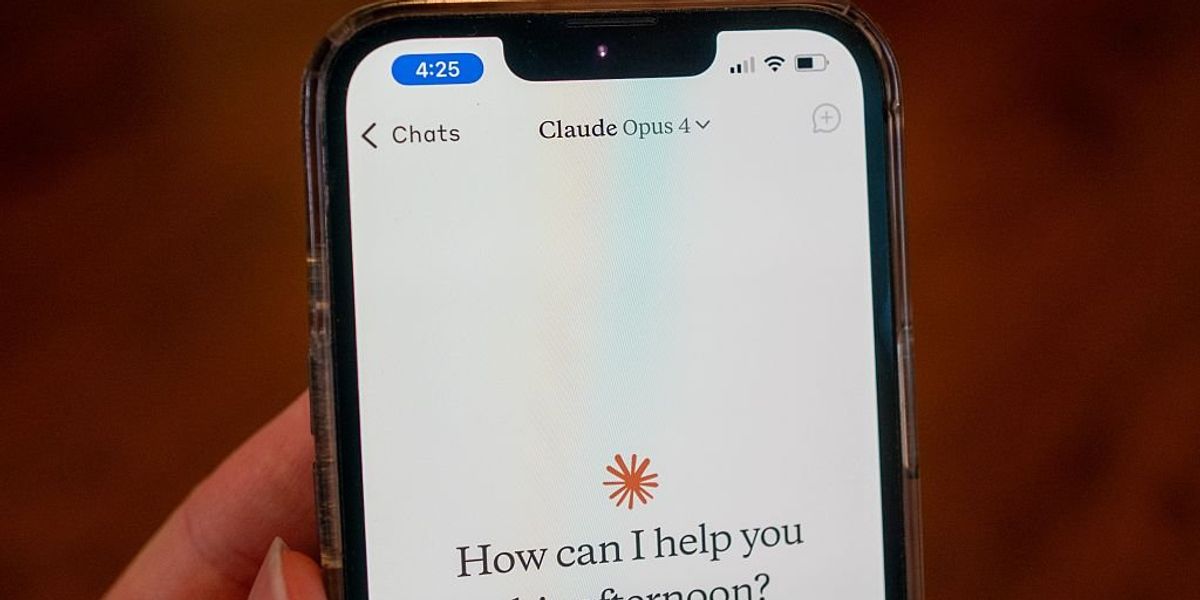

In terms of products, the flagship Claude line comprises several language models aimed at established competitors such as Grok, ChatGPT, and Gemini. The suite includes Claude 3 Opus, Claude 3.5 Sonnet, and Claude 3 Haiku, all in theory built on the principles of so-called Constitutional AI.

There is also an artifacts tool that works as a coding assistant, bridging the Claude model and desktop applications. Anthropic presents this as an educational resource for users of AI technology, though various custom adaptations have also emerged through its partnerships.

On the downside, despite assurances about the ethical guidelines built into its design, Anthropic’s Constitutional AI has struggled to filter out toxic or ethically ambiguous outputs. In one instance, the LLM was caught fabricating data while simulating a conversation, and it appeared to misinterpret users’ questions about morality.

As so often, I can’t help wondering whether output like this reflects limitations in the LLM’s design, especially if human morality is beyond its grasp. Why, then, continue this charade?

The ongoing legal battle

Anthropic faces numerous legal challenges over copyright and intellectual property. For instance, this year Universal Music and other publishers filed lawsuits alleging that Claude infringed their copyrights by reproducing song lyrics. A March ruling went in Anthropic’s favor.

There is also a pending lawsuit from Reddit claiming that Anthropic “scraped” content from over 100,000 sites.

In another incident, Anthropic’s legal team was caught off guard in court when a citation generated by Claude and submitted in the company’s defense turned out to be fabricated, a product of what is known as “AI hallucination,” in which the model invents or misrepresents information.

This isn’t a flattering portrait. So what should we make of plans to secure a deal worth more than $60 billion?

Beyond the ethical claims, what is curious, and perhaps alarming, is the funding structure behind Anthropic. Large investments from Amazon ($8 billion), Google ($2–3 billion), and other opaque Silicon Valley firms raise questions about a lack of transparency.

As a result, Anthropic may have inadvertently exposed itself to harmful consequences and misplaced claims. Yet some argue that this overly cautious posture is simply another marketing tactic, a way to showcase the company’s purported virtues. Anthropic is widely known for aligning itself with a safer, more cautious approach to AI: a kind of soft paternalism in which users are handled carefully while corporate leaders operate with more leeway.