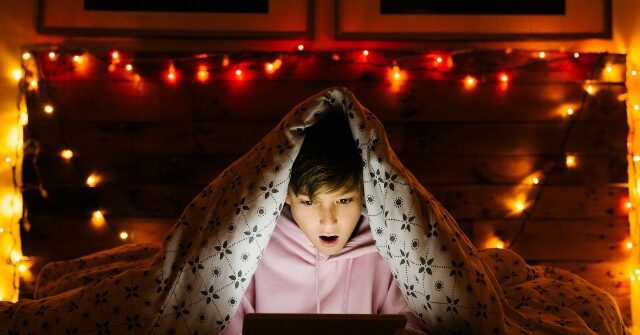

Video creators are increasingly using AI tools to quickly produce low-quality videos targeted at children on YouTube, raising concerns about the impact of such content on young viewers.

The world of children’s entertainment on YouTube is facing a new challenge: a growing influx of AI-generated videos aimed at the youngest viewers. According to research by Wired, more and more YouTube channels are using AI tools such as ChatGPT, text-to-speech services, and generative AI features to automate the creation of animated videos for kids.

These channels often market themselves as educational or mimic the familiar aesthetics of popular channels such as Cocomelon, and they churn out videos at an astonishing rate. One channel, Yes! Neo, which has more than 970,000 subscribers, has released new videos every few days since its launch in November 2023, with titles like “Ouch! Baby Got a Boo Boo” and “Poo Poo Song.”

Ben Colman, CEO of deepfake detection startup Reality Defender, analyzed some of these channels and found evidence of AI-generated scripts, synthetic voices, or a combination of both. “Generative text-to-speech is now becoming more and more common in YouTube videos, and it’s clearly aimed at children as well,” Colman says.

The current wave of AI video appears focused on exploiting YouTube’s recommendation algorithms and monetization opportunities. Tutorials promising to teach viewers how to make money from AI-generated kids’ videos have flooded the platform, with titles like “He made $1.2 million from AI-generated videos for kids?” and “$50,000 a month!”

Experts have warned that a wave of hastily assembled AI-driven content could have a negative impact on young viewers. David Bickham, director of research at Boston Children’s Hospital’s Digital Wellness Lab, expressed skepticism about the educational value of such videos, saying, “They are produced entirely to catch the eye; you wouldn’t expect it to have a positive beneficial effect.”

The rapid pace of AI video production has raised concerns about the lack of human oversight and quality control. Tracy Pizzo-Frey, senior advisor for AI at Common Sense Media, emphasized the importance of “meaningful human oversight, especially generative AI,” and suggested that the responsibility should not be placed entirely on families.

YouTube is preparing to introduce content labels and disclosure requirements for AI-generated content, but questions remain about the platform’s ability to effectively monitor and regulate this emerging trend. The potential impact on children’s cognitive and emotional development, along with the risk of exposure to inappropriate or harmful content, has prompted growing calls for greater accountability and ethical consideration in the use of AI in children’s entertainment.

Read more at Wired here.

Lucas Nolan is a reporter for Breitbart News covering free speech and online censorship issues.