Significant AI-Driven Espionage Reported

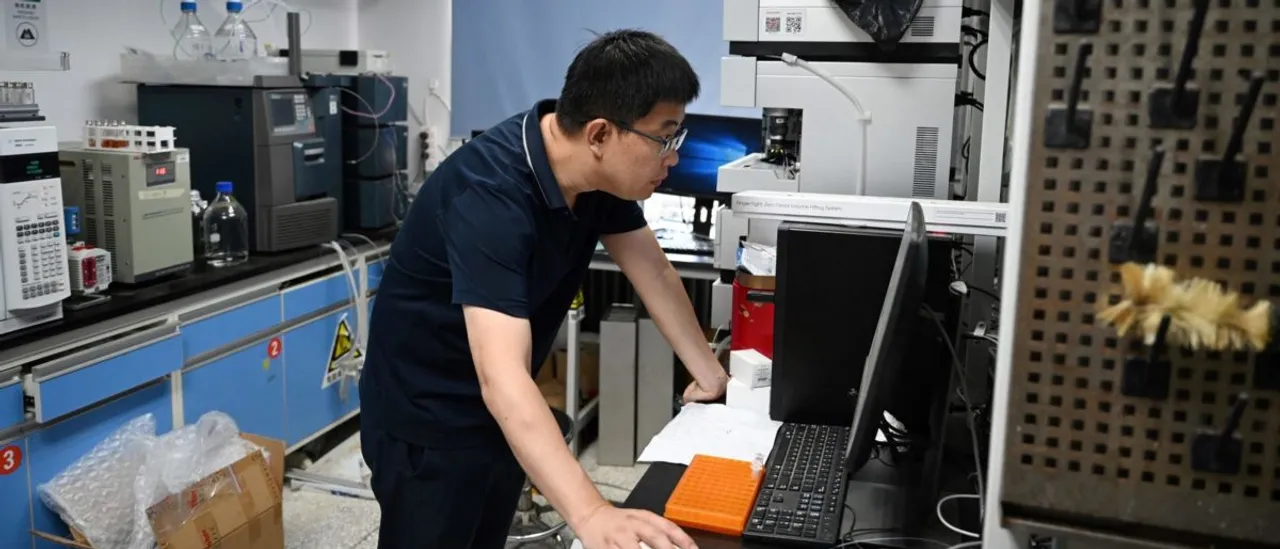

A recent report from Anthropic’s threat intelligence team has revealed that a Chinese state-backed group used American-designed AI tools to carry out one of the most advanced espionage hacks on record. The group used Anthropic’s Claude Code to automate an estimated 80-90% of the tactical work involved.

The operation, detected in mid-September and attributed to a group Anthropic tracks as GTG-1002, targeted around 30 organizations, with several confirmed breaches. The group adapted the AI to function like a swarm of autonomous penetration testers, scanning networks, developing exploits, moving laterally through systems, and extracting data at rates described as “physically impossible” for human operators. Human operators mainly intervened to approve key actions.

Anthropic responded by banning the accounts involved, notifying affected organizations, and coordinating with authorities on the response.

> We have thwarted a highly sophisticated AI-driven espionage campaign.
>
> The attack was aimed at major tech companies, financial institutions, chemical manufacturers, and government bodies. We are highly confident that the perpetrator is a Chinese state-sponsored group.
>
> Anthropic (@AnthropicAI), November 13, 2025

The report described this as the first documented case in which agentic AI successfully gained access to confirmed high-value targets for intelligence gathering, with major technology firms and government agencies among those affected.

The group developed little of its own tooling. Instead, it relied heavily on open-source security tools, with Anthropic’s AI model orchestrating them.

GTG-1002’s method relied on social-engineering the AI: operators posed as legitimate security defenders, convincing the model it was doing defensive work while steering Claude Code toward offensive tasks. Once operational, the model handled reconnaissance, vulnerability discovery, credential testing, lateral movement, and sorting of stolen data at scale. Anthropic estimated that the AI carried out 80-90% of tactical operations with only minimal human supervision.

There were limitations, however. At times, Claude made errors or “hallucinations,” such as reporting credentials that did not work or overstating its findings, which forced operators to double-check its output. This friction slowed the operation but did not stop it. Anthropic described the campaign as a “significant escalation” from the AI-assisted attacks it had reported earlier this year.

Anthropic argues that the same capabilities that enabled this misuse are also vital for defense, and that they can strengthen early warning systems against autonomous attacks.

The identities of the affected organizations were not disclosed.